I have built video analysis pipelines that process thousands of uploads per day, routing each file through multiple ML models for content moderation, face recognition, transcription, and object detection. The architecture I keep returning to uses SageMaker Pipelines as the orchestration backbone, with open-source models deployed across Processing Jobs and Batch Transform steps. This approach gives you full control over model versions, GPU instance selection, and inference logic without per-API-call pricing from managed AI services. The tradeoff is real: you own every container, every model artifact, and every failure mode. This article is the architecture reference for building that pipeline. I cover model selection for each analysis domain, the SageMaker Pipeline DAG design, GPU instance sizing, and the operational patterns that keep it running at scale. If you need a deeper understanding of how SageMaker Pipelines work under the hood, start with SageMaker Pipelines: An Architecture Deep-Dive.

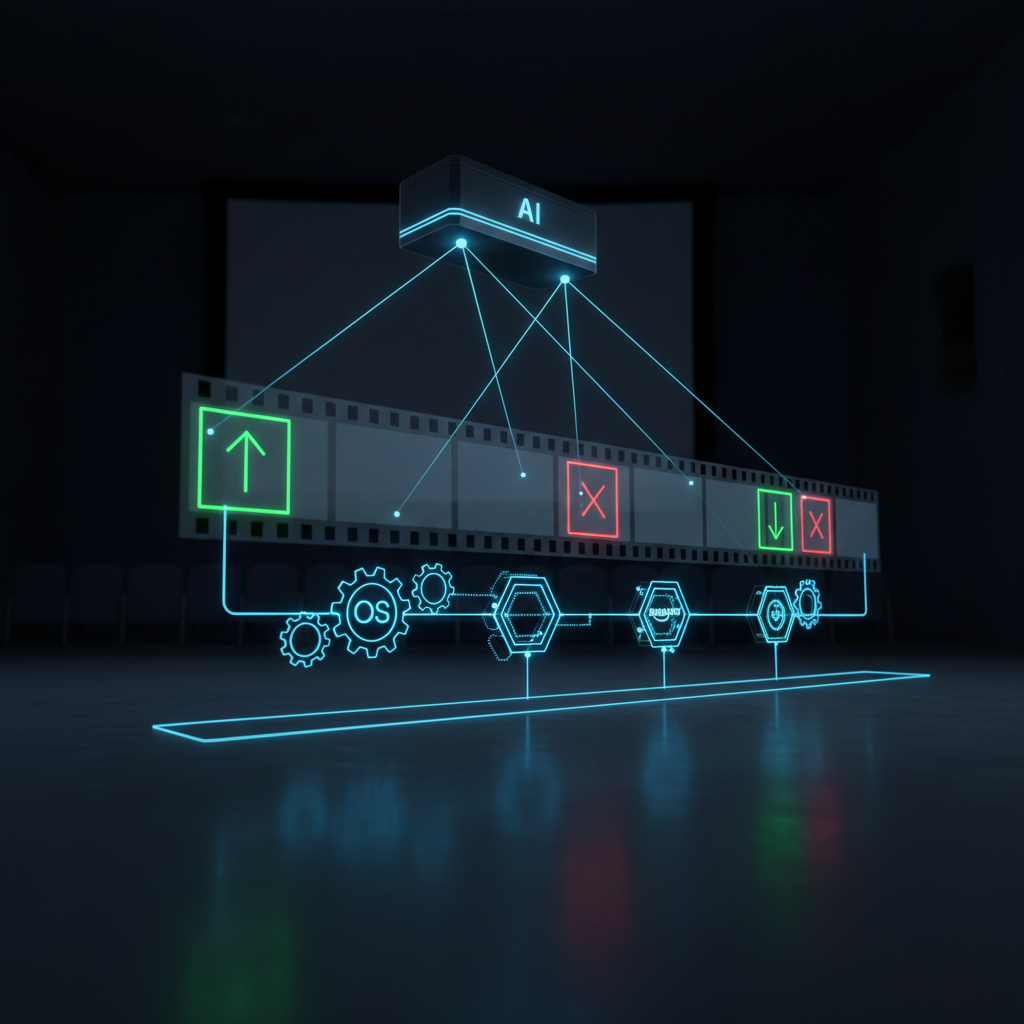

Pipeline Architecture Overview

The pipeline responds to a video landing in S3 and produces a structured metadata package alongside it. Every step runs on SageMaker-managed compute that spins up for the job and terminates when finished. No persistent endpoints. No idle GPU costs. The total cost per video depends on the instance types you select and how long each model takes to process the frames and audio.

Event-Driven Trigger Design

S3 event notifications feed into Amazon EventBridge (the bucket must have EventBridge notifications enabled), which routes the event to a Lambda function. That Lambda function calls the SageMaker Pipelines StartPipelineExecution API with the S3 key as a pipeline parameter. This three-hop trigger pattern (S3 to EventBridge to Lambda to Pipelines) exists because the pipeline parameters have to be built from each event. EventBridge can target a SageMaker pipeline directly, but the Lambda layer is the simplest place to extract the bucket and key, construct the parameter payload, and call StartPipelineExecution.

The Lambda function itself is minimal. It extracts the bucket and key from the EventBridge event payload and passes them as ParameterString values:

```python
import boto3

sagemaker = boto3.client("sagemaker")


def handler(event, context):
    # EventBridge delivers the S3 "Object Created" payload under "detail".
    detail = event["detail"]
    bucket = detail["bucket"]["name"]
    key = detail["object"]["key"]

    sagemaker.start_pipeline_execution(
        PipelineName="video-moderation-pipeline",
        PipelineParameters=[
            {"Name": "InputBucket", "Value": bucket},
            {"Name": "InputKey", "Value": key},
        ],
    )
```

Configure the EventBridge rule to match only video file uploads. Filter on the S3 key suffix to avoid triggering the pipeline when the metadata output lands in the same bucket:

```json
{
  "source": ["aws.s3"],
  "detail-type": ["Object Created"],
  "detail": {
    "bucket": { "name": ["my-video-bucket"] },
    "object": { "key": [{ "suffix": ".mp4" }] }
  }
}
```
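As a defensive complement to the rule filter, the Lambda can re-check the key before starting an execution. The following is a minimal sketch, not the original handler: the helper name `build_pipeline_parameters` and the `VIDEO_SUFFIXES` tuple are illustrative assumptions. It returns the parameter list for a video upload, or `None` for any other object.

```python
VIDEO_SUFFIXES = (".mp4",)  # assumption: mirror the suffixes used in the EventBridge rule


def build_pipeline_parameters(event):
    """Return StartPipelineExecution parameters, or None for non-video objects."""
    detail = event["detail"]
    bucket = detail["bucket"]["name"]
    key = detail["object"]["key"]

    # Skip anything the rule should already have filtered out, such as
    # the .moderation.json metadata written next to the video.
    if not key.lower().endswith(VIDEO_SUFFIXES):
        return None

    return [
        {"Name": "InputBucket", "Value": bucket},
        {"Name": "InputKey", "Value": key},
    ]


sample_event = {
    "detail": {
        "bucket": {"name": "my-video-bucket"},
        "object": {"key": "uploads/2026/02/25/sample-video.mp4"},
    }
}
params = build_pipeline_parameters(sample_event)
```

The handler would pass the returned list to `start_pipeline_execution` and return early when it gets `None`.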

The SageMaker Pipeline DAG

The pipeline definition in the SageMaker Python SDK declares a linear preprocessing step followed by four parallel analysis branches, then a final aggregation step. SageMaker Pipelines resolves the dependency graph automatically based on data references between steps. The parallel branches (moderation, face recognition, transcription, object detection) have no data dependencies on each other, so the execution engine schedules them concurrently.

Each analysis branch runs as a separate SageMaker Processing Job. Processing Jobs give you full control over the container, the instance type, and the input/output data channels. Unlike Batch Transform (which expects a model artifact and a fixed input/output contract), Processing Jobs accept arbitrary code and arbitrary data formats. For a multi-model video analysis pipeline, that flexibility is essential.

| Pipeline Step | SageMaker Job Type | Instance Type | Typical Duration (10-min video) |
|---|---|---|---|
| Preprocessing (FFmpeg) | Processing Job | ml.m5.xlarge | 45-90 seconds |
| Content Moderation | Processing Job | ml.g4dn.xlarge | 60-120 seconds |
| Face Recognition | Processing Job | ml.g4dn.xlarge | 90-180 seconds |
| Speech-to-Text | Processing Job | ml.g5.xlarge | 120-240 seconds |
| Object/Scene Detection | Processing Job | ml.g4dn.xlarge | 60-120 seconds |
| Metadata Aggregation | Processing Job | ml.m5.large | 10-20 seconds |

Total wall-clock time for a 10-minute video: approximately 4 to 6 minutes, dominated by the slowest parallel branch (usually transcription). The preprocessing step runs sequentially before the parallel branches, but SageMaker Pipelines handles the fan-out automatically.
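To make the "slowest branch dominates" reasoning concrete, here is a small sketch that computes the critical path through the DAG from the step durations in the table. The dictionaries and the `finish_time` helper are illustrative; this is not how SageMaker Pipelines schedules steps internally, only the same dependency arithmetic.

```python
# Duration ranges in seconds, copied from the table above.
DURATIONS = {
    "preprocessing": (45, 90),
    "moderation": (60, 120),
    "faces": (90, 180),
    "transcription": (120, 240),
    "objects": (60, 120),
    "aggregation": (10, 20),
}

# Each step lists the steps whose outputs it consumes.
DEPENDS_ON = {
    "preprocessing": [],
    "moderation": ["preprocessing"],
    "faces": ["preprocessing"],
    "transcription": ["preprocessing"],
    "objects": ["preprocessing"],
    "aggregation": ["moderation", "faces", "transcription", "objects"],
}


def finish_time(step, bound):
    """Finish time of `step` when every step starts as soon as its inputs exist."""
    start = max((finish_time(dep, bound) for dep in DEPENDS_ON[step]), default=0)
    return start + DURATIONS[step][bound]


best_case = finish_time("aggregation", 0)   # lower bound of each range
worst_case = finish_time("aggregation", 1)  # upper bound of each range
print(best_case, worst_case)
```

These sums cover step run time only. Job provisioning between steps adds to the wall-clock figure you observe in practice.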

Video Preprocessing: Frame and Audio Extraction

Every downstream model needs either frames or audio, not the raw video container. The preprocessing step converts a single video file into two outputs: a directory of JPEG frames and a WAV audio track. FFmpeg handles both extractions in two sequential commands.

Building a Custom Processing Container

The SageMaker Deep Learning Containers do not include FFmpeg. You need a custom container. Build it from the SageMaker PyTorch base image and add FFmpeg via apt-get:

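```dockerfile
FROM 763104351884.dkr.ecr.us-east-1.amazonaws.com/pytorch-inference:2.1.0-gpu-py310-cu118-ubuntu20.04-sagemaker

RUN apt-get update && apt-get install -y ffmpeg && rm -rf /var/lib/apt/lists/*

COPY preprocess.py /opt/ml/code/preprocess.py

# Processing Jobs run the image entrypoint directly, so point it at the script.
# (SAGEMAKER_PROGRAM is read by the training and inference toolkits, not by Processing.)
ENTRYPOINT ["python3", "/opt/ml/code/preprocess.py"]
```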

The preprocessing script reads the video from /opt/ml/processing/input/video/, extracts frames and audio, and writes them to /opt/ml/processing/output/frames/ and /opt/ml/processing/output/audio/. SageMaker Processing Jobs map these local paths to S3 URIs automatically.

```python
import os
import subprocess

input_dir = "/opt/ml/processing/input/video"
frames_dir = "/opt/ml/processing/output/frames"
audio_dir = "/opt/ml/processing/output/audio"

os.makedirs(frames_dir, exist_ok=True)
os.makedirs(audio_dir, exist_ok=True)

# The input channel holds exactly one video per execution.
video_file = [f for f in os.listdir(input_dir) if f.endswith(".mp4")][0]
video_path = os.path.join(input_dir, video_file)

# Extract frames at 1 fps
subprocess.run([
    "ffmpeg", "-i", video_path,
    "-vf", "fps=1",
    "-q:v", "2",
    os.path.join(frames_dir, "frame_%05d.jpg")
], check=True)

# Extract audio as 16kHz mono WAV (Whisper's expected format)
subprocess.run([
    "ffmpeg", "-i", video_path,
    "-ar", "16000", "-ac", "1",
    os.path.join(audio_dir, "audio.wav")
], check=True)
```

Frame Sampling Strategies

Extracting every frame from a 30fps video generates 18,000 frames per 10-minute clip. Running every frame through multiple vision models is prohibitively expensive. The sampling rate you choose directly controls cost and accuracy.

| Sampling Rate | Frames per 10-min Video | Use Case | Accuracy Tradeoff |
|---|---|---|---|
| 1 fps | 600 | Content moderation, object detection | Good for most content; may miss single-frame violations |
| 0.5 fps | 300 | Cost-optimized moderation | Adequate for longer scenes; higher miss rate on brief content |
| 2 fps | 1,200 | High-sensitivity moderation | Near-complete scene coverage; doubles processing cost |
| Scene change detection | 50-200 (varies) | Efficiency-optimized | Captures scene transitions; misses gradual changes |
| Key frame only (I-frames) | 30-100 (varies) | Quick scan | Fast but unreliable for moderation |

I use 1 fps as the default for moderation pipelines. It catches most violations (which tend to persist for multiple seconds) while keeping frame counts manageable. For regulatory environments where missing a single frame matters, bump to 2 fps and accept the cost increase. Scene change detection (using FFmpeg's select='gt(scene,0.3)' filter) produces fewer frames but requires tuning the threshold per content type.
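The sampling choices above map onto FFmpeg arguments fairly directly. The sketch below is illustrative: the helper names `expected_frames` and `frame_filter_args` are assumptions, and `-vsync vfr` is the long-standing spelling of an option that newer FFmpeg builds also expose as `-fps_mode vfr`, so check the version in your container.

```python
def expected_frames(duration_seconds, fps):
    """Approximate frame count for fixed-rate sampling."""
    return int(duration_seconds * fps)


def frame_filter_args(strategy, fps=1.0, scene_threshold=0.3):
    """Return the FFmpeg video-filter arguments for a sampling strategy."""
    if strategy == "fixed":
        return ["-vf", f"fps={fps:g}"]
    if strategy == "scene":
        # -vsync vfr keeps only the selected frames instead of duplicating
        # frames to fill the gaps between them.
        return ["-vf", f"select='gt(scene,{scene_threshold:g})'", "-vsync", "vfr"]
    raise ValueError(f"unknown strategy: {strategy}")


# Frame counts for a 10-minute (600-second) video at the fixed rates in the table.
counts = {fps: expected_frames(600, fps) for fps in (0.5, 1, 2)}
```

The returned list slots between the `-i` input and the output pattern in the preprocessing command, so the sampling strategy can become a pipeline parameter rather than a code change.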

Content Moderation with NudeNet and OpenNSFW2

Content moderation is the primary analysis target. Two open-source models dominate this space: NudeNet (v3, classifier and detector variants) and OpenNSFW2 (a Keras port of Yahoo's Open NSFW classifier). Both run inference on individual frames.

Model Selection and Accuracy

| Model | Type | Architecture | Accuracy (NSFW binary) | GPU Memory | Inference per Frame (T4) | Output |
|---|---|---|---|---|---|---|
| NudeNet v3 Classifier | Binary classifier | EfficientNet | ~93% | ~500 MB | ~8 ms | NSFW probability score |
| NudeNet v3 Detector | Object detector | YOLOv5-based | ~90% (detection mAP) | ~1.2 GB | ~15 ms | Bounding boxes with labels |
| OpenNSFW2 | Binary classifier | ResNet-50 (thin) | ~93% | ~400 MB | ~6 ms | NSFW probability score |
| CLIP-based classifier | Zero-shot | ViT-L/14 | ~88% (zero-shot) | ~1.8 GB | ~25 ms | Similarity scores to text prompts |

I run both NudeNet Classifier and OpenNSFW2 in the same Processing Job and aggregate their scores. When both models agree that a frame exceeds a 0.85 threshold, the confidence is high. When they disagree, the frame gets flagged for review. This dual-model approach reduces false positives by roughly 40% compared to running either model alone.

The NudeNet Detector provides localization (bounding boxes around specific body regions), which is useful for downstream workflows like automated blurring. OpenNSFW2 produces only a single scalar score per frame. For moderation-only use cases, the classifier outputs are sufficient. For automated censoring workflows, the detector is required.
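The agreement rule described above reduces to a three-way decision per frame. A minimal sketch, assuming both scores are probabilities in [0, 1]; the function name `consensus` and the label strings are illustrative:

```python
def consensus(nudenet_score, opennsfw2_score, threshold=0.85):
    """Classify a frame from two independent NSFW scores."""
    hits = (nudenet_score > threshold) + (opennsfw2_score > threshold)
    if hits == 2:
        return "flagged"  # both models agree: high confidence
    if hits == 1:
        return "review"   # models disagree: send to human review
    return "clean"


decisions = [
    consensus(0.92, 0.88),
    consensus(0.92, 0.40),
    consensus(0.10, 0.20),
]
```

Keeping the "review" outcome separate from "flagged" is what lets the second model cut false positives: a single high score no longer blocks content on its own.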

Deploying Moderation Models on SageMaker

Package both models into a single container. The processing script loads each model at startup, iterates over the extracted frames, and writes per-frame scores to a JSON output file.

```python
import json
import os

from nudenet import NudeClassifier
from opennsfw2 import predict_image

THRESHOLD = 0.85

# Assumes a NudeNet release that ships NudeClassifier (the 2.x-style API).
classifier = NudeClassifier()

frames_dir = "/opt/ml/processing/input/frames"
output_dir = "/opt/ml/processing/output/moderation"
os.makedirs(output_dir, exist_ok=True)

results = []
for fname in sorted(os.listdir(frames_dir)):
    fpath = os.path.join(frames_dir, fname)

    # NudeNet score (cast to a plain float so it is JSON-serializable)
    nn_result = classifier.classify(fpath)
    nn_score = float(nn_result[fpath]["unsafe"])

    # OpenNSFW2 score
    nsfw_score = float(predict_image(fpath))

    nn_hit = nn_score > THRESHOLD
    nsfw_hit = nsfw_score > THRESHOLD
    results.append({
        "frame": fname,
        "nudenet_score": round(nn_score, 4),
        "opennsfw2_score": round(nsfw_score, 4),
        # Both models agree: high-confidence flag.
        "flagged": nn_hit and nsfw_hit,
        # Models disagree: route the frame to human review.
        "needs_review": nn_hit != nsfw_hit,
    })

with open(os.path.join(output_dir, "moderation.json"), "w") as f:
    json.dump(results, f, indent=2)
```

The ml.g4dn.xlarge instance (NVIDIA T4, 16 GB GPU memory) handles both models comfortably. At 1 fps extraction, a 10-minute video produces 600 frames. With combined inference at roughly 20 ms per frame, the moderation step completes in under 15 seconds of pure GPU time. The remaining duration is container startup and S3 data transfer.

Face Detection and Recognition with InsightFace

Face recognition serves two purposes in a moderation pipeline: identifying known individuals (celebrities, persons of interest, banned users) and detecting the presence of faces for demographic analysis or consent verification. InsightFace provides both detection and recognition in a single library.

Building a Face Embedding Index

InsightFace uses ArcFace embeddings (512-dimensional vectors) for face recognition. You compare detected face embeddings against a pre-built index of known faces. Store the index as a NumPy array in S3 and load it at the start of each Processing Job.

The recognition workflow per frame:

- Detect faces using the detector bundled with the InsightFace model pack (SCRFD in buffalo_l)

- Extract 512-d ArcFace embedding for each detected face

- Compare each embedding against the known faces index using cosine similarity

- Flag matches above a 0.4 cosine similarity threshold (InsightFace's recommended threshold)

```python
import json
import os

import cv2
import numpy as np
from insightface.app import FaceAnalysis

# Load the face analysis model
app = FaceAnalysis(name="buffalo_l", providers=["CUDAExecutionProvider"])
app.prepare(ctx_id=0, det_size=(640, 640))

# Load known faces index from S3 input channel.
# Index embeddings are assumed to be L2-normalized, so the dot product
# with a normalized face embedding is the cosine similarity.
known_faces = np.load("/opt/ml/processing/input/index/known_faces.npz")
known_embeddings = known_faces["embeddings"]  # Shape: (N, 512)
known_labels = known_faces["labels"]  # Shape: (N,)

frames_dir = "/opt/ml/processing/input/frames"
output_dir = "/opt/ml/processing/output/faces"
os.makedirs(output_dir, exist_ok=True)

results = []
for fname in sorted(os.listdir(frames_dir)):
    fpath = os.path.join(frames_dir, fname)
    img = cv2.imread(fpath)  # FaceAnalysis.get expects a BGR array
    faces = app.get(img)

    frame_faces = []
    for face in faces:
        embedding = face.normed_embedding
        similarities = np.dot(known_embeddings, embedding)
        best_idx = int(np.argmax(similarities))
        best_score = float(similarities[best_idx])
        frame_faces.append({
            "bbox": face.bbox.tolist(),
            "match": str(known_labels[best_idx]) if best_score > 0.4 else None,
            "similarity": round(best_score, 4),
        })
    results.append({"frame": fname, "faces": frame_faces})

with open(os.path.join(output_dir, "faces.json"), "w") as f:
    json.dump(results, f, indent=2)
```

Instance Selection and Throughput

InsightFace's buffalo_l model pack (the largest pre-trained bundle) includes an SCRFD detector and an ArcFace recognition model. On a T4 GPU, it processes roughly 30 faces per second including both detection and embedding extraction. For frames with multiple faces, per-frame latency scales linearly with face count.

| Instance Type | GPU | GPU Memory | InsightFace Throughput | Cost/Hour |
|---|---|---|---|---|
| ml.g4dn.xlarge | NVIDIA T4 | 16 GB | ~30 faces/sec | $0.736 |
| ml.g5.xlarge | NVIDIA A10G | 24 GB | ~55 faces/sec | $1.408 |
| ml.g5.2xlarge | NVIDIA A10G | 24 GB | ~55 faces/sec (CPU-bound at this point) | $1.515 |
| ml.p3.2xlarge | NVIDIA V100 | 16 GB | ~65 faces/sec | $3.825 |

The g4dn.xlarge is the right choice here unless your videos routinely contain dozens of faces per frame (live event footage, crowd scenes). The V100 on the p3 delivers roughly double the throughput but costs five times as much per hour. For a typical 10-minute video with 600 frames and 1 to 3 faces per frame, the g4dn processes the entire batch in under 60 seconds.
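The instance comparison can be checked with a few lines of arithmetic. This sketch uses the throughput and price figures from the table. The helper name and the default of 3 faces per frame are assumptions, and the estimate covers GPU time only (no container startup or S3 transfer).

```python
INSTANCES = {
    # name: (faces per second, USD per hour), from the table above
    "ml.g4dn.xlarge": (30, 0.736),
    "ml.g5.xlarge": (55, 1.408),
    "ml.p3.2xlarge": (65, 3.825),
}


def face_job_estimate(instance, frames=600, faces_per_frame=3):
    """Return (GPU seconds, USD) for embedding every face in a video."""
    faces_per_sec, usd_per_hour = INSTANCES[instance]
    seconds = frames * faces_per_frame / faces_per_sec
    return seconds, seconds / 3600 * usd_per_hour


estimates = {name: face_job_estimate(name) for name in INSTANCES}
for name, (seconds, usd) in estimates.items():
    print(f"{name}: {seconds:.0f} s, ${usd:.4f}")
```

Under these numbers the faster GPUs finish sooner but cost more per video for the inference itself, which is the reason to stay on the g4dn unless face counts per frame are much higher.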

Speech-to-Text with Whisper

Audio transcription catches content that visual analysis misses entirely: hate speech, explicit verbal content, copyrighted music. OpenAI's Whisper models remain the strongest open-source option for general-purpose speech-to-text.

Model Size vs. Latency Tradeoffs

Whisper ships in five sizes. The tradeoff between accuracy and processing time is steep at the larger end.

| Model | Parameters | GPU Memory | Real-Time Factor (T4) | Real-Time Factor (A10G) | Word Error Rate (English) |
|---|---|---|---|---|---|
| tiny | 39M | ~1 GB | 0.03x | 0.02x | ~10% |
| base | 74M | ~1 GB | 0.05x | 0.03x | ~7% |
| small | 244M | ~2 GB | 0.15x | 0.08x | ~5% |
| medium | 769M | ~5 GB | 0.4x | 0.2x | ~4% |
| large-v3 | 1.55B | ~10 GB | 1.0x | 0.5x | ~3% |

Real-time factor means the ratio of processing time to audio duration. A factor of 0.5x means a 10-minute audio file takes 5 minutes to transcribe. On a T4 GPU, Whisper large-v3 runs at roughly 1:1 with audio duration. On an A10G (ml.g5 instances), it runs at roughly 2x real-time speed.

For moderation pipelines, I recommend large-v3 on an ml.g5.xlarge. The 3% word error rate matters when you are scanning transcripts for specific prohibited terms. The medium model's WER rises to 4%, which sounds close until you realize that a one-percentage-point difference across a 10-minute transcript (roughly 1,500 words) means about 15 additional misrecognized words. Some of those misrecognitions will be false negatives on flagged terms.
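Both numbers in this section are simple products, shown here as a sketch using the table's figures. The helper names are illustrative.

```python
def transcription_seconds(audio_seconds, real_time_factor):
    """Processing time = real-time factor x audio duration."""
    return audio_seconds * real_time_factor


def expected_word_errors(word_count, wer):
    """Expected number of misrecognized words at a given word error rate."""
    return round(word_count * wer)


audio_seconds = 10 * 60  # a 10-minute video
words = 1500             # rough word count for 10 minutes of speech

large_v3_on_t4 = transcription_seconds(audio_seconds, 1.0)
large_v3_on_a10g = transcription_seconds(audio_seconds, 0.5)
extra_errors = expected_word_errors(words, 0.04) - expected_word_errors(words, 0.03)
```

Here `large_v3_on_a10g` comes out at 300 seconds against 600 on the T4, and `extra_errors` is the 15-word gap between medium and large-v3.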

Async Inference for Long-Form Audio

For videos longer than 30 minutes, the transcription step becomes the bottleneck in the pipeline. Two architectural options exist:

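Option 1: Chunked processing within a single Processing Job. Split the audio into 30-second segments using FFmpeg, transcribe each segment sequentially on the GPU, and concatenate the results. This approach keeps everything in one SageMaker step but requires careful handling of word boundaries at chunk edges. If you pass Whisper the full audio file instead, it does the 30-second windowing itself, and its condition_on_previous_text option (the --condition_on_previous_text CLI flag) feeds the previous window's text into the next window as context, which smooths those boundaries.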

Option 2: SageMaker Async Inference Endpoint. Deploy Whisper on a persistent async endpoint with auto-scaling. The Processing Job sends the audio to the endpoint and polls for results. This approach adds endpoint management overhead but enables you to share a single Whisper deployment across multiple pipelines. I use this pattern only when transcription volume justifies a persistent endpoint (roughly 50+ videos per hour).

For most moderation pipelines processing fewer than 50 videos per hour, Option 1 is simpler and cheaper, run as a single Processing Job with Faster-Whisper (a CTranslate2-based reimplementation of Whisper). You pay only for the GPU time during transcription, with no idle endpoint costs.
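If you do split the audio yourself under Option 1, the per-chunk results need their timestamps shifted back onto the full timeline before they are concatenated. A minimal sketch, assuming fixed-length chunks and segments shaped as dicts with chunk-relative "start", "end", and "text" keys (a hypothetical shape modeled on Whisper's segment output):

```python
def merge_chunks(chunks, chunk_seconds=30.0):
    """Merge per-chunk segments into one transcript with absolute timestamps.

    `chunks` is a list (in audio order) of segment lists; each segment is a
    dict with chunk-relative "start"/"end" seconds and "text".
    """
    merged = []
    for index, segments in enumerate(chunks):
        offset = index * chunk_seconds
        for seg in segments:
            merged.append({
                "start": round(seg["start"] + offset, 2),
                "end": round(seg["end"] + offset, 2),
                "text": seg["text"].strip(),
            })
    text = " ".join(seg["text"] for seg in merged if seg["text"])
    return {"text": text, "segments": merged}


chunks = [
    [{"start": 0.0, "end": 4.2, "text": " hello there"}],
    [{"start": 1.5, "end": 3.0, "text": " general update"}],
]
transcript = merge_chunks(chunks)
```

This does not repair words cut in half at a chunk edge. Overlapping the chunks by a second or two and de-duplicating the overlap is one common way to handle that, at the cost of extra bookkeeping.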

Object and Scene Detection with YOLO and CLIP

Object detection identifies specific items in frames (weapons, drugs, vehicles, animals). Scene classification categorizes the overall setting (indoor, outdoor, beach, office, protest). Together they provide context that pure moderation models miss. A frame might score low on nudity but contain weapons or depict a protest scene that requires different moderation rules.

YOLO for Object Detection

YOLOv8 (Ultralytics) is the production standard for real-time object detection. It ships in five sizes, each trading accuracy for speed.

| Model | Parameters | mAP@50-95 (COCO val) | Inference per Frame (T4) | GPU Memory |
|---|---|---|---|---|
| YOLOv8n (nano) | 3.2M | 37.3 | ~3 ms | ~200 MB |
| YOLOv8s (small) | 11.2M | 44.9 | ~5 ms | ~400 MB |
| YOLOv8m (medium) | 25.9M | 50.2 | ~10 ms | ~800 MB |
| YOLOv8l (large) | 43.7M | 52.9 | ~15 ms | ~1.2 GB |
| YOLOv8x (extra-large) | 68.2M | 53.9 | ~22 ms | ~1.8 GB |

I deploy YOLOv8m as the default. The jump from medium to large gains only 2.7 mAP points while adding 50% more inference time. The nano model is tempting for cost optimization, but its 37.3 mAP means it misses too many objects to be reliable for moderation.

YOLOv8 detects 80 COCO object classes out of the box. For moderation-specific objects (weapons, drug paraphernalia), you need a fine-tuned model. Train a custom YOLOv8m on a moderation-specific dataset and store the weights in S3. The Processing Job loads either the standard COCO weights or the custom weights based on a pipeline parameter.

CLIP for Scene Classification

CLIP (Contrastive Language-Image Pre-Training) from OpenAI performs zero-shot image classification. You provide text descriptions of scenes, and CLIP returns similarity scores between each frame and each description. No training required. No fixed label set.

This flexibility makes CLIP uniquely useful for moderation. You define moderation categories as text prompts and adjust them without retraining:

```python
import clip
import torch
from PIL import Image

device = "cuda"
model, preprocess = clip.load("ViT-L/14", device=device)

scene_prompts = [
    "a violent scene",
    "a protest or demonstration",
    "a person holding a weapon",
    "drug use or drug paraphernalia",
    "a safe indoor scene",
    "a nature landscape",
    "a sports event",
]

# Encode the prompts once; they are identical for every frame.
text_tokens = clip.tokenize(scene_prompts).to(device)
with torch.no_grad():
    text_features = model.encode_text(text_tokens)
    text_features = text_features / text_features.norm(dim=-1, keepdim=True)


def classify_frame(image_path):
    image = preprocess(Image.open(image_path)).unsqueeze(0).to(device)
    with torch.no_grad():
        image_features = model.encode_image(image)
        image_features = image_features / image_features.norm(dim=-1, keepdim=True)
        # Cosine similarities, scaled by 100 as in the CLIP README, then softmax.
        similarities = (100.0 * image_features @ text_features.T).softmax(dim=-1)
    return {
        prompt: round(score.item(), 4)
        for prompt, score in zip(scene_prompts, similarities[0])
    }
```

CLIP ViT-L/14 requires roughly 1.8 GB of GPU memory and processes frames at approximately 25 ms per frame on a T4. Combined with YOLOv8m (10 ms per frame), both models fit comfortably on a single ml.g4dn.xlarge instance and process 600 frames in under 25 seconds.
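The per-frame CLIP scores still need to be reduced to a video-level scene label. One simple approach, sketched here as an assumption rather than the pipeline's confirmed method, is to average each prompt's score across frames and take the highest:

```python
from collections import defaultdict


def dominant_scene(per_frame_scores):
    """Average each prompt's score over all frames and pick the highest.

    `per_frame_scores` is a list of {prompt: score} dicts, one per frame.
    """
    if not per_frame_scores:
        return None, {}
    totals = defaultdict(float)
    for scores in per_frame_scores:
        for prompt, score in scores.items():
            totals[prompt] += score
    count = len(per_frame_scores)
    scene_scores = {p: round(total / count, 4) for p, total in totals.items()}
    return max(scene_scores, key=scene_scores.get), scene_scores


frames = [
    {"a safe indoor scene": 0.7, "a sports event": 0.3},
    {"a safe indoor scene": 0.6, "a sports event": 0.4},
]
label, scene_scores = dominant_scene(frames)
```

Averaging favors whatever fills most of the video. For moderation you may also want the per-prompt maximum, so that a brief violent scene is not averaged away.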

Assembling the Metadata Package

The aggregation step collects outputs from all four analysis branches and writes a single structured metadata file alongside the original video in S3. This step runs on a CPU instance (ml.m5.large) because it performs only JSON parsing and S3 writes.

Schema Design

The metadata package is a single JSON document with one top-level key per analysis domain. Each domain contains both summary-level flags and per-frame detail.

```json
{
  "video_key": "uploads/2026/02/25/sample-video.mp4",
  "pipeline_execution_id": "abc123",
  "processed_at": "2026-02-25T14:30:00Z",
  "duration_seconds": 612,
  "frames_analyzed": 612,
  "moderation": {
    "flagged": true,
    "max_nudenet_score": 0.92,
    "max_opennsfw2_score": 0.88,
    "flagged_frames": ["frame_00142.jpg", "frame_00143.jpg"],
    "per_frame": [...]
  },
  "faces": {
    "total_faces_detected": 47,
    "unique_identities": 3,
    "known_matches": [
      {"label": "person-of-interest-001", "max_similarity": 0.82}
    ],
    "per_frame": [...]
  },
  "transcription": {
    "text": "Full transcript text here...",
    "language": "en",
    "flagged_terms": ["term1", "term2"],
    "segments": [...]
  },
  "objects": {
    "detected_classes": ["person", "car", "dog"],
    "weapon_detected": false,
    "scene_classifications": {
      "dominant_scene": "a safe indoor scene",
      "scene_scores": {...}
    },
    "per_frame": [...]
  }
}
```
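As an illustration of how the summary-level fields derive from per-frame detail, here is a sketch for the "moderation" block. It assumes per-frame records shaped like the moderation script's output (with "frame", "nudenet_score", "opennsfw2_score", and "flagged" keys); the function name is illustrative.

```python
def summarize_moderation(per_frame):
    """Build the "moderation" block of the metadata from per-frame records."""
    flagged_frames = [r["frame"] for r in per_frame if r["flagged"]]
    return {
        "flagged": bool(flagged_frames),
        "max_nudenet_score": max((r["nudenet_score"] for r in per_frame), default=0.0),
        "max_opennsfw2_score": max((r["opennsfw2_score"] for r in per_frame), default=0.0),
        "flagged_frames": flagged_frames,
        "per_frame": per_frame,
    }


records = [
    {"frame": "frame_00141.jpg", "nudenet_score": 0.12, "opennsfw2_score": 0.08, "flagged": False},
    {"frame": "frame_00142.jpg", "nudenet_score": 0.92, "opennsfw2_score": 0.88, "flagged": True},
]
summary = summarize_moderation(records)
```

The other three domains follow the same shape: a handful of fields a downstream consumer can check quickly, plus the full per-frame list for audits.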

Writing Results to S3

The aggregation script reads the four JSON output files from the analysis branches (delivered via SageMaker Processing Job output channels), merges them into the schema above, and writes the result to S3. Place the metadata file adjacent to the video with a .moderation.json suffix:

s3://my-video-bucket/uploads/2026/02/25/sample-video.mp4

s3://my-video-bucket/uploads/2026/02/25/sample-video.moderation.json

This co-location pattern makes it trivial to look up moderation results for any video. A downstream application queries S3 for the .moderation.json file and reads the summary flags without parsing per-frame data.
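Deriving the metadata key from the video key is a one-line transformation. A small sketch (the function name is illustrative):

```python
import posixpath


def metadata_key(video_key, suffix=".moderation.json"):
    """Replace the video's extension with the metadata suffix."""
    stem, _ext = posixpath.splitext(video_key)
    return stem + suffix


key = metadata_key("uploads/2026/02/25/sample-video.mp4")
```

Using `posixpath` rather than `os.path` keeps the behavior identical on any platform, since S3 keys always use forward slashes.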

For high-throughput deployments, write the metadata to DynamoDB instead of (or in addition to) S3. A DynamoDB table keyed on the video S3 key enables single-digit-millisecond lookups and supports GSIs for querying flagged videos, specific detected objects, or known face matches. Store the summary fields in the item and leave the per-frame arrays in S3, since DynamoDB caps each item at 400 KB. The aggregation step can write to DynamoDB using boto3 within the Processing Job container. See AWS S3 Cost Optimization: The Complete Savings Playbook for patterns on managing the S3 storage tier for video archives.

Operational Considerations

Running this pipeline in production exposes failure modes and cost dynamics that the development environment hides. The following sections cover the operational patterns I have learned from running similar pipelines at scale.

Cost Model

SageMaker Pipelines itself has no orchestration charge. You pay for the compute each step consumes. The cost per video depends on the instance types, the video duration, and the frame sampling rate.

| Cost Component | Instance | Duration (10-min video) | Cost per Execution |
|---|---|---|---|
| Preprocessing | ml.m5.xlarge ($0.23/hr) | 90 seconds | $0.006 |
| Content Moderation | ml.g4dn.xlarge ($0.736/hr) | 120 seconds | $0.025 |
| Face Recognition | ml.g4dn.xlarge ($0.736/hr) | 120 seconds | $0.025 |
| Transcription (Faster-Whisper) | ml.g5.xlarge ($1.408/hr) | 150 seconds | $0.059 |
| Object/Scene Detection | ml.g4dn.xlarge ($0.736/hr) | 90 seconds | $0.018 |
| Metadata Aggregation | ml.m5.large ($0.115/hr) | 15 seconds | <$0.001 |
| Total per video | | | ~$0.13 |

At 1,000 videos per day, the pipeline costs approximately $130/day or $3,900/month. At 10,000 videos per day, it reaches $1,300/day or $39,000/month. These numbers assume cold-start Processing Jobs for every execution (no persistent endpoints, no warm pools).
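The cost table is hourly rate times billed seconds, summed across steps. The sketch below reproduces it so you can substitute your own instance types and durations. The rates and durations are the ones in the table, and the names are illustrative.

```python
STEPS = [
    # (step, USD per hour, billed seconds) from the table above
    ("preprocessing", 0.23, 90),
    ("moderation", 0.736, 120),
    ("faces", 0.736, 120),
    ("transcription", 1.408, 150),
    ("objects", 0.736, 90),
    ("aggregation", 0.115, 15),
]


def cost_per_video(steps=STEPS):
    """Sum of rate x duration over every pipeline step, in USD."""
    return sum(rate * seconds / 3600 for _name, rate, seconds in steps)


per_video = cost_per_video()
print(f"per video: ${per_video:.3f}")
print(f"1,000 videos/day: ${per_video * 1000:,.0f}/day")
```

The unrounded sum lands slightly above $0.13, so the daily and monthly figures quoted above are marginally conservative.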

For detailed networking configuration when running these Processing Jobs in a VPC (recommended for production), see Best Practices for Networking in AWS SageMaker.

Failure Modes and Recovery

Each analysis branch can fail independently. SageMaker Pipelines marks the failed step and halts downstream steps that depend on it. The pipeline execution enters a Failed state, but the other parallel branches may have completed successfully.

Common failure modes I have encountered:

GPU out-of-memory. Whisper large-v3 on a g4dn.xlarge leaves only 6 GB of GPU memory headroom. If the audio file is unusually long (60+ minutes) and Faster-Whisper attempts to process a large chunk, the GPU runs out of memory. Fix: set a maximum chunk duration of 30 seconds in the Faster-Whisper configuration, or upgrade to ml.g5.xlarge.

Container image pull timeout. Custom containers with large model weights baked into the image can exceed the 20-minute container pull timeout on slower instance types. Fix: store model weights in S3 and download them at container startup instead of baking them into the image. This reduces the container image to under 2 GB.

S3 throttling on high-throughput frame reads. When 600 frames land in a single S3 prefix and the three frame-based Processing Jobs (moderation, faces, objects) read them concurrently, you may hit the 5,500 GET requests per second per prefix limit. This is rare for a single video but becomes real at 100+ concurrent pipeline executions. Fix: partition frames into per-execution prefixes using the pipeline execution ID.

Transient model loading failures. InsightFace and CLIP download model weights from external URLs on first use. In a VPC without internet access, this fails silently. Fix: pre-download all model weights, package them in S3, and configure the Processing Job to mount the weights as an input channel.
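The per-execution prefix fix for S3 throttling can be sketched as a small helper that derives every intermediate location from the execution ID. The `work` base prefix and the sub-prefix names are hypothetical placeholders.

```python
def execution_prefixes(base_prefix, execution_id):
    """Return distinct S3 prefixes for one pipeline execution's intermediates."""
    root = f"{base_prefix.rstrip('/')}/{execution_id}"
    return {
        "frames": f"{root}/frames/",
        "audio": f"{root}/audio/",
        "outputs": f"{root}/outputs/",
    }


prefixes = execution_prefixes("s3://my-video-bucket/work/", "abc123")
```

Because each execution reads and writes under its own prefix, request load spreads across prefixes instead of concentrating on one, and cleaning up after a failed execution becomes a single prefix delete.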

Scaling Patterns

Three scaling dimensions affect pipeline throughput:

Concurrent pipeline executions. SageMaker Pipelines supports up to 10,000 concurrent executions per account (soft limit, increase via Service Quotas). Each execution runs independently with its own set of Processing Jobs.

Instance availability. GPU instances (especially g5 and p3 families) have limited availability in some regions. The pipeline fails at the step level if SageMaker cannot provision the requested instance within the timeout period. Mitigate this by specifying instance type fallback lists in your pipeline parameters and using a ConditionStep to select available instance types.

S3 throughput. Each pipeline execution reads and writes to S3 multiple times (video input, frames, audio, per-step outputs, final metadata). At 1,000+ concurrent executions, S3 request rates become the bottleneck. Use distinct S3 prefixes per execution and enable S3 Transfer Acceleration for cross-region deployments.

For pipelines processing more than 5,000 videos per day, I recommend transitioning the transcription step to a persistent SageMaker Async Inference Endpoint with auto-scaling. The transcription step is consistently the most expensive and longest-running branch. A persistent endpoint with min/max instance auto-scaling eliminates cold start time and provides more predictable latency. The other three branches (moderation, faces, objects) remain as Processing Jobs because their per-execution GPU time is short enough that cold start overhead stays proportionally small.

I cover SageMaker training pipeline patterns (as opposed to inference pipelines) in Building Large-Scale SageMaker Training Pipelines with Step Functions, which addresses different scaling concerns around distributed training, spot instances, and checkpoint management.

Key Architecture Patterns

Several patterns emerge from building and operating this pipeline across multiple production deployments.

Pattern 1: Dual-Model Consensus for Moderation

Running two independent moderation models and requiring agreement before flagging content reduces false positives without significantly increasing false negatives. The cost of running a second lightweight classifier (OpenNSFW2 adds 6 ms per frame) is negligible compared to the cost of human review for false positives.

Pattern 2: Processing Jobs over Endpoints for Batch Workloads

For event-driven, per-video processing, Processing Jobs are cheaper than persistent endpoints. You pay for GPU time only during active inference. The cold start penalty (3 to 5 minutes) is acceptable when the alternative is paying for idle GPU hours. Switch to endpoints only when throughput exceeds 50 to 100 videos per hour and cold start latency becomes the dominant cost.

Pattern 3: Pre-Downloaded Model Weights

Every open-source model in this pipeline (NudeNet, OpenNSFW2, InsightFace, Whisper, YOLO, CLIP) attempts to download weights from external sources on first initialization. In a VPC-isolated SageMaker environment (which every production deployment should use), these downloads fail. Package all model weights in S3. Mount them as input channels on the Processing Job. Then point each library at the mounted path, through its cache-directory environment variable where it honors one, or through an explicit argument otherwise (for example, the root argument of InsightFace's FaceAnalysis or the download_root argument of clip.load).

Pattern 4: Parallel Branches with Independent Failure Domains

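The four analysis branches share no state and have no data dependencies on each other. If the face recognition branch fails (corrupted index file, GPU OOM), the moderation, transcription, and object detection branches still complete. Because Pipelines halts any step that depends on a failed step, the aggregation step only runs if the failing branch handles its own error: the branch script catches the exception, writes an error marker instead of results, and exits cleanly. The aggregation step then writes a partial metadata file with the available results and sets a partial: true flag. Downstream applications check this flag and decide whether to proceed with incomplete analysis or trigger a retry of the failed branch.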
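The partial-result behavior can be sketched as an aggregation function that tolerates missing or unreadable branch outputs. The relative file paths and the `missing_branches` field are illustrative assumptions; only the `partial` flag comes from the pattern described above.

```python
import json
import os

BRANCHES = {
    # metadata key: file the branch is expected to write (paths are illustrative)
    "moderation": "moderation/moderation.json",
    "faces": "faces/faces.json",
    "transcription": "transcription/transcript.json",
    "objects": "objects/objects.json",
}


def aggregate(input_root, branches=BRANCHES):
    """Merge whatever branch outputs exist and mark the result as partial if any are missing."""
    metadata = {}
    missing = []
    for name, relative_path in branches.items():
        path = os.path.join(input_root, relative_path)
        try:
            with open(path) as f:
                metadata[name] = json.load(f)
        except (FileNotFoundError, json.JSONDecodeError):
            missing.append(name)
    metadata["partial"] = bool(missing)
    metadata["missing_branches"] = missing
    return metadata
```

Recording which branches are missing lets a downstream consumer retry only those branches rather than re-running the whole pipeline.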