Five ways to expose a Lambda function over HTTP. At least. AWS keeps adding more. Most teams pick API Gateway on day one and never revisit that decision. Fine. API Gateway handles a lot.

I kept hitting walls, though. The 29-second timeout? Killed my report-generation endpoints. Per-request pricing ate my budget once volume got real. The shared regional throttle turned into a coordination mess across teams. So I landed on CloudFront in front of an Application Load Balancer with Lambda targets. Routing flexibility. CDN edge capabilities. Serverless compute. No API Gateway per-request tax. No 29-second ceiling.

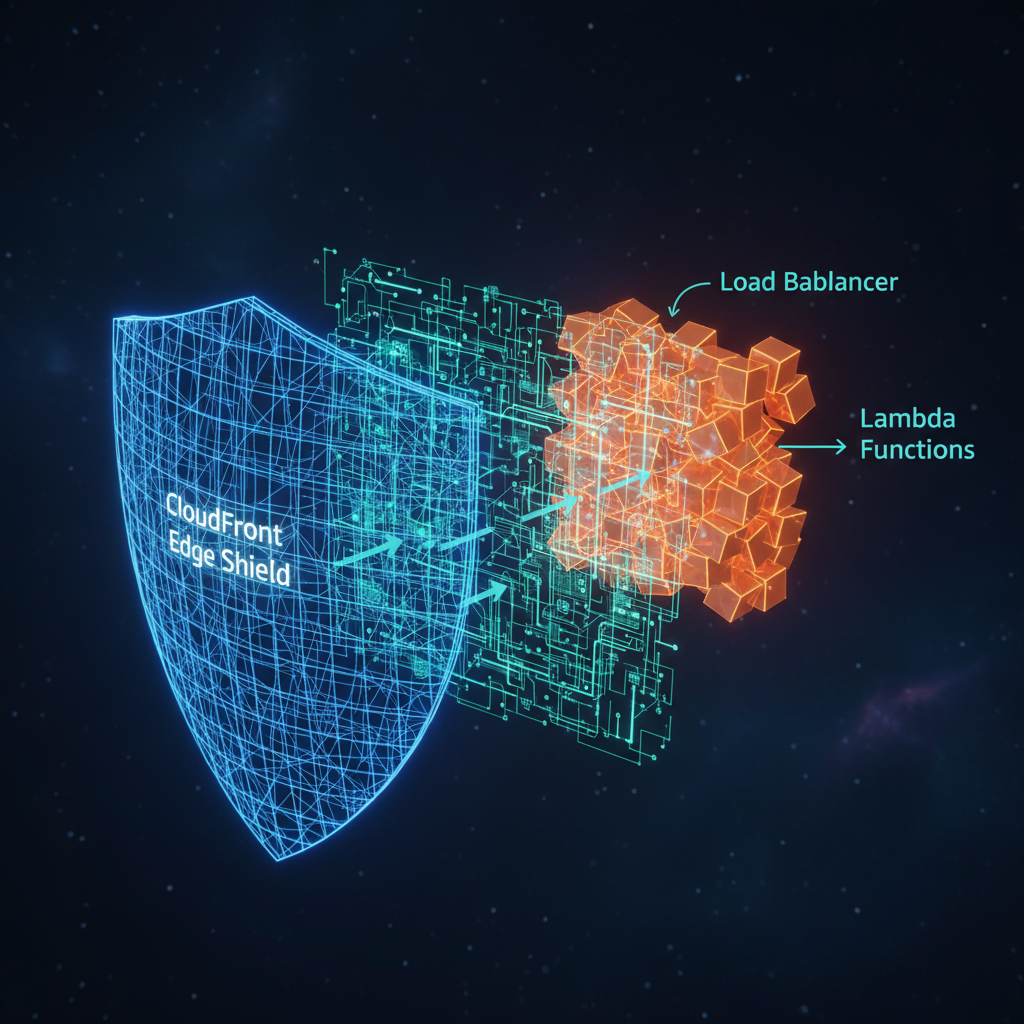

Architecture reference. I'm assuming you already know Lambda, ALB, and CloudFront as individual services. How they work together, why you'd combine them, where the sharp edges hide: that's what this covers.

Why This Pattern Exists

API Gateway is the default front door for Lambda APIs. Auth, throttling, request validation, deployment stages. Good defaults. Three constraints bite at scale.

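The 29-second integration timeout on REST APIs (30 seconds on HTTP APIs) was a hard ceiling for years. HTTP APIs still can't go past 30 seconds. Since mid-2024, Regional and private REST APIs can request a longer timeout through a quota increase, but that can cost you account-level throttle quota, and edge-optimized REST APIs stay at 29 seconds. Report generation, batch processing, large data transformations. Anything that might run longer? API Gateway is the wrong front door. I hit this on a financial reporting system that needed 45 seconds for complex aggregations. At the time, no workaround.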

Per-request pricing scales linearly. REST API: $3.50 per million requests. HTTP API: $1.00 per million. Hundreds of millions of requests per month and that line item alone dwarfs your Lambda compute cost.

Then there's the 10,000 requests-per-second regional throttle. Shared. Account-level. Across all API Gateway APIs in a region. You can request an increase, sure. Still a shared ceiling. Still forces coordination across teams. I burned two weeks once tracking down intermittent 429 errors. Another team's API was eating the shared quota. Two weeks.

November 2018, AWS announced ALB support for Lambda targets. ALB uses LCU-based pricing: pay for capacity consumed, not individual requests. No hard timeout beyond Lambda's own 15-minute max. No shared regional throttle. Each ALB scales on its own.

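What does CloudFront add? Global edge caching (eliminating redundant Lambda invocations). AWS WAF evaluated at the edge. DDoS protection via Shield at the edge. TLS termination at the edge. An ALB alone gives you no caching and no edge presence. You can attach WAF directly to an ALB, but it only evaluates traffic after that traffic has already reached your region.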

For deeper discussion of the individual services, see the Amazon API Gateway: An Architecture Deep-Dive, the AWS Elastic Load Balancing: An Architecture Deep-Dive, and the Amazon CloudFront: An Architecture Deep-Dive.

Signals that should make you evaluate this pattern:

| Signal | Why It Matters |
|---|---|
| Monthly request volume exceeds 30M | Per-request API Gateway pricing begins to dominate your serverless bill |
| Endpoints that may exceed 29 seconds | API Gateway's 29-30 second integration timeout is a hard ceiling on HTTP APIs and quota-gated on REST APIs; ALB itself has no equivalent limit |
| Multiple independent Lambda functions behind one domain | ALB listener rules route to independent target groups with no shared throttle |
| Need for WAF and DDoS protection | CloudFront provides AWS WAF and Shield Standard at the edge |
| Read-heavy workloads with cacheable responses | CloudFront caching eliminates origin requests entirely for cached content |
| Authentication offloading | ALB natively integrates with Cognito and OIDC providers, removing auth logic from Lambda |
| Hitting API Gateway throttle limits | ALB has no shared regional throttle ceiling |

How ALB Lambda Targets Work

Register a Lambda function as a target in an ALB target group and here's what happens. The ALB invokes your function synchronously via the Lambda Invoke API with the RequestResponse invocation type. Constructs a JSON event from the HTTP request. Calls the function. Waits for the complete response. Translates the JSON return value back into HTTP.
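Here is a minimal sketch of that contract from the function's side. It assumes Python and a target group with multi-value headers left off. The handler reads the ALB event fields (httpMethod, path, queryStringParameters, requestContext.elb) and returns the JSON shape that ALB turns back into an HTTP response. The echoed payload is purely illustrative.

```python
import json


def handler(event, context):
    """Minimal ALB Lambda target (multi-value headers disabled)."""
    # ALB puts the target group ARN under requestContext.elb.
    target_group = event.get("requestContext", {}).get("elb", {}).get("targetGroupArn")
    method = event.get("httpMethod", "GET")
    path = event.get("path", "/")
    params = event.get("queryStringParameters") or {}

    payload = {
        "method": method,
        "path": path,
        "params": params,
        "targetGroup": target_group,
    }
    # ALB translates this dict back into HTTP:
    # statusCode is an integer, body is a string.
    return {
        "statusCode": 200,
        "statusDescription": "200 OK",
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
        "isBase64Encoded": False,
    }
```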

Totally different mental model from EC2 targets. No persistent connections. No connection pooling. No draining. Every request: independent Lambda invocation.

Why does the event format matter? Because ALB sends its own format to your Lambda function. Distinct from both API Gateway event formats. Writing handlers that work across multiple invocation paths means dealing with these differences.

| Field | ALB Event | API Gateway v1 (REST) | API Gateway v2 (HTTP) |
|---|---|---|---|
| Request context | requestContext.elb with target group ARN | requestContext with API ID, stage, identity | requestContext with API ID, route key, HTTP method |
| HTTP method | httpMethod | httpMethod | requestContext.http.method |
| Path | path | path and resource | rawPath |
| Query parameters | queryStringParameters (passed through URL-encoded) | queryStringParameters | rawQueryString and queryStringParameters |
| Headers | headers | headers | headers (comma-delimited multi-value) |
| Body | body | body | body |
| Base64 encoding | isBase64Encoded | isBase64Encoded | isBase64Encoded |
| Path parameters | Not available | pathParameters | pathParameters |
| Stage variables | Not available | stageVariables | stageVariables |
| Cookies | Via headers only | Via headers | cookies (dedicated array) |

Multi-value headers and query parameters. This one will bite you. By default, ALB sends only the last value when a header or query parameter appears multiple times. Breaks Set-Cookie responses (which require multiple values). Breaks any API using repeated query parameters like ?id=1&id=2. Fix? Enable multi-value headers on the target group. Changes the event format to include multiValueHeaders and multiValueQueryStringParameters. Always do this. Near-zero downside: your handler must then return multiValueHeaders instead of headers. Skip it and enjoy a subtle production bug that takes hours to find.
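Here is roughly what a handler looks like once `lambda.multi_value_headers.enabled` is set to true on the target group. It is a sketch that assumes Python. Every header and query value arrives as a list. Query values are decoded in the function, because ALB passes them through URL-encoded. The two Set-Cookie headers go out through multiValueHeaders. The cookie names and the `id` and `q` parameters are made up for illustration.

```python
import json
from urllib.parse import unquote_plus


def handler(event, context):
    """ALB target with multi-value headers enabled on the target group."""
    # With the attribute on, every value arrives as a list, even singletons.
    raw_params = event.get("multiValueQueryStringParameters") or {}
    # ALB does not URL-decode query parameters, so decode keys and values here
    # (unquote_plus also turns '+' into a space, as form encoding expects).
    params = {
        unquote_plus(key): [unquote_plus(v) for v in values]
        for key, values in raw_params.items()
    }
    headers = event.get("multiValueHeaders") or {}
    # Match header names case-insensitively rather than assuming a casing.
    cookies = [
        value
        for name, values in headers.items()
        if name.lower() == "cookie"
        for value in values
    ]

    return {
        "statusCode": 200,
        "statusDescription": "200 OK",
        "isBase64Encoded": False,
        # With multi-value headers on, respond with multiValueHeaders, not headers.
        "multiValueHeaders": {
            "Content-Type": ["application/json"],
            "Set-Cookie": ["session=abc; Secure; HttpOnly", "theme=dark; Secure"],
        },
        "body": json.dumps({"params": params, "cookieHeaders": len(cookies)}),
    }
```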

Health checks on Lambda targets. Different cost story than EC2. They're off by default on Lambda target groups. Once you enable them, every health check is a real Lambda invocation you pay for. The default 35-second interval works out to about 2,470 invocations per day (86,400 seconds ÷ 35) per checking ALB node, and more if nodes in several AZs each run their own checks. At $0.20 per million requests, that cost is negligible. Cold start risk, though. That's the real concern. A health check hitting a cold Lambda can temporarily mark the target unhealthy.
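The arithmetic is easy to sanity-check. This sketch assumes a 128 MB function whose health check path finishes in 50 ms, 30-day months, and the US East Lambda prices listed in the cost section. Whether your ALB's nodes check independently is left as a parameter, `checking_nodes`, rather than asserted.

```python
SECONDS_PER_DAY = 86_400
LAMBDA_PER_REQUEST = 0.20 / 1_000_000   # USD per invocation
LAMBDA_PER_GB_SECOND = 0.0000166667     # USD per GB-second (x86, US East)


def health_check_cost(interval_s=35, checking_nodes=1, memory_mb=128,
                      duration_ms=50, days=30):
    """Return (invocations per day, monthly USD) for one Lambda target group."""
    per_day = SECONDS_PER_DAY / interval_s * checking_nodes
    invocations = per_day * days
    # Compute charge: GB-seconds = invocations x memory (GB) x duration (s).
    gb_seconds = invocations * (memory_mb / 1024) * (duration_ms / 1000)
    cost = invocations * LAMBDA_PER_REQUEST + gb_seconds * LAMBDA_PER_GB_SECOND
    return round(per_day), round(cost, 4)
```

Under these assumptions, even `checking_nodes=3` comes to a few cents a month. That is why the interval is worth tuning for cold start exposure rather than for the bill.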

| Parameter | Default (once enabled) | Recommended for Lambda | Notes |
|---|---|---|---|
| Interval | 35 seconds | 60-300 seconds | Reduces invocation cost and cold start exposure |
| Timeout | 30 seconds | 10 seconds | Lambda health checks should respond fast; a slow response signals a problem |
| Healthy threshold | 5 | 3 | Faster recovery after transient cold start failures |
| Unhealthy threshold | 2 | 3 | Avoids flapping from a single cold start timeout |
| Path | / | /health | Use a dedicated lightweight handler that avoids database calls |
| Success codes | 200 | 200 | Keep it simple |

The Full Request Flow

You need to understand the full request path. User to Lambda and back. Otherwise debugging latency, configuring timeouts, and designing cache strategies is guesswork. I've spent more time than I'd like to admit tracing requests through this stack. CloudWatch logs open in three tabs. Notebook full of scribbled latency numbers.

Each stage:

- DNS resolution. Browser resolves your domain (via Route 53 or whatever DNS provider you use) to your CloudFront distribution. CloudFront's DNS then answers with a nearby edge location, chosen for low latency.

- TLS termination at CloudFront. This is the single largest latency win in the whole architecture. TLS handshake happens within a few milliseconds of the user. Not hundreds of milliseconds away at your ALB's region.

- Cache lookup. CloudFront checks its edge cache. Miss? It can fall back to a regional edge cache, and to Origin Shield if you've enabled it. Cache hit means the response returns immediately. No ALB contact. No Lambda invocation. This is where cost savings live for read-heavy workloads.

- Origin request to ALB. Cache miss. CloudFront opens (or reuses) a persistent HTTPS connection to the ALB. Forwards the request with the headers, cookies, and query strings your cache and origin request policies include, plus any custom origin headers.

- Listener rule evaluation. ALB evaluates rules in priority order. First match wins.

- Lambda invocation. ALB invokes the Lambda in the matching target group. Synchronous. Holds the connection open until Lambda returns or times out.

- Response propagation. Response flows back through ALB to CloudFront. Cache behavior and response headers permit it? CloudFront caches the response, sends it to the user.

| Stage | Typical Latency | Notes |
|---|---|---|
| DNS resolution | 1-50 ms | Cached after first lookup; Route 53 alias records add zero latency |
| TLS handshake (edge) | 5-30 ms | Happens at nearest edge location; TLS 1.3 reduces round trips |
| CloudFront cache check | <1 ms | In-memory lookup at the edge |
| CloudFront to ALB | 1-20 ms | Persistent connections; same-region ALB is lowest latency |
| ALB rule evaluation | <1 ms | Rule matching is in-memory and extremely fast |
| Lambda execution | 5-500+ ms | Dominated by cold start (if any) and function logic |
| Response propagation | 2-30 ms | Return path through ALB and CloudFront to user |

Warm Lambda, same-region ALB, cache miss: total overhead from CloudFront and ALB runs 10-40 ms. Cache hit? Under 5 ms straight from the edge. Your ALB and Lambda locations stop mattering entirely.

CloudFront Configuration for ALB Origins

Lots of settings to get wrong here. Failure modes are subtle.

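Origin configuration. HTTPS-only protocol. Keep-alive timeout of 30-60 seconds (connection reuse between CloudFront and ALB). Origin response timeout: default 30 seconds, adjustable up to 60, and higher only through a quota request. Raise it for slow endpoints; through CloudFront, this is the real ceiling, not Lambda's 15 minutes. Origin domain: a hostname you own (say, an origin subdomain aliased to the ALB) that matches the ACM certificate on the ALB's HTTPS listener. CloudFront validates the origin certificate against the origin domain name, or against the Host header if you forward it. You can't get a certificate for the *.region.elb.amazonaws.com hostname, so an HTTPS-only origin pointed straight at the ALB's default DNS name fails with 502s. Add a custom origin header (something like X-Origin-Verify with a secret value) that your ALB or Lambda validates. That's what ensures requests only arrive through CloudFront.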

Cache behavior design. Where most complexity lives. A typical API mixes cacheable and non-cacheable paths. Design cache behaviors matching URL structure from day one. I learned the hard way. Retrofitting cache policies onto an existing API? Painful.

| Path Pattern | Cache Policy | Origin Request Policy | TTL | Use Case |
|---|---|---|---|---|
| /api/catalog/* | Custom: cache on path, Accept header | Forward Host, Authorization excluded | 300s | Product catalog, read-heavy, cacheable |
| /api/users/* | CachingDisabled | AllViewerExceptHostHeader | 0 | User-specific data, never cache |
| /api/auth/* | CachingDisabled | AllViewerExceptHostHeader | 0 | Authentication endpoints, never cache |
| /assets/* | CachingOptimized | None | 86400s | Static assets, long TTL |
| Default (*) | CachingDisabled | AllViewerExceptHostHeader | 0 | Catch-all for dynamic content |
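CloudFront cache policies set minimum, default, and maximum TTLs. Within that range, CloudFront honors the Cache-Control header the origin returns. One way to keep the Lambda side consistent with the behaviors above is to derive Cache-Control from the same path prefixes. This sketch assumes a hypothetical `CACHE_RULES` list that mirrors the distribution's behaviors, with TTLs copied from the table. The `private, no-store` value for uncached paths is a conservative choice, not a requirement.

```python
# Hypothetical mapping that mirrors the CloudFront cache behaviors.
# First matching prefix wins, similar to behavior precedence.
CACHE_RULES = [
    ("/api/catalog/", "public, max-age=300"),
    ("/api/users/", "private, no-store"),
    ("/api/auth/", "private, no-store"),
    ("/assets/", "public, max-age=86400"),
]
# Mirrors the CachingDisabled catch-all behavior.
DEFAULT_CACHE_CONTROL = "private, no-store"


def cache_control_for(path: str) -> str:
    """Return the Cache-Control value a Lambda response should carry for `path`."""
    for prefix, value in CACHE_RULES:
        if path.startswith(prefix):
            return value
    return DEFAULT_CACHE_CONTROL
```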

WAF placement. Three options: CloudFront, ALB, or both. Put WAF on CloudFront for rate limiting, geo-blocking, bot control. Edge evaluation before traffic hits your region. Only attach WAF to the ALB if you need rules inspecting headers or body content that CloudFront doesn't forward. Both layers? Doubles your WAF cost. Be deliberate about which rules go where. For a deeper look at CloudFront edge security, see the Amazon CloudFront: An Architecture Deep-Dive.

Multi-Function Routing

This is why the architecture earns its complexity. ALB's routing model.

API Gateway can route to multiple Lambda functions. ALB gives you way more flexibility. With API Gateway, all Lambda functions behind a single API share a regional throttle limit. ALB target groups? Independent invocation paths. No shared request throttle. order-service takes a traffic spike and user-service keeps running, as long as the spike doesn't drain the account's regional Lambda concurrency pool. Reserved concurrency on critical functions closes that gap. I watched API Gateway's shared throttle cause cascading failures across completely unrelated services once. That single experience pushed me toward ALB for multi-function workloads.

Path-based routing sends different URL prefixes to different Lambda functions, each in its own target group. Host-based routing means a single ALB serves multiple domains backed by different functions. Pay the ALB fixed cost once. Consolidation adds up fast.

Six condition types on ALB listener rules, all usable with Lambda targets, and you can combine them.

| Condition Type | Example | Notes |
|---|---|---|
| host-header | api.example.com | Enables multi-tenant or multi-domain routing on a single ALB |
| path-pattern | /api/users/* | Most common condition; supports wildcards |
| http-header | X-Api-Version: v2 | Route by custom header value; useful for API versioning |
| http-request-method | GET | Route reads vs. writes to different functions |
| query-string | action=export | Route specific query patterns to specialized handlers |
| source-ip | 10.0.0.0/8 | Route internal vs. external traffic differently |
| Combined conditions | Host + Path + Method | Up to 5 conditions per rule for precise routing |

Up to five conditions per rule, AND logic. An ALB supports 100 rules by default, not counting default rules (adjustable via quota request). API Gateway can't touch this routing granularity.
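To make the evaluation model concrete, here is a small simulation of how a listener picks a target group. Rules are sorted by priority, every condition on a rule must match (AND), the first match wins, and otherwise the default action applies. This is an illustrative model, not ALB's implementation. Path and host matching use Python's `fnmatch.fnmatchcase`, which supports the same `*` and `?` wildcards ALB uses, plus `[...]` sets, which ALB doesn't. Case handling for header values is simplified, and the rule set and target group names are hypothetical.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase
from typing import Dict, List, Optional, Tuple


@dataclass
class Request:
    host: str
    path: str
    method: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class Rule:
    priority: int
    target_group: str
    host: Optional[str] = None                  # host-header (case-insensitive)
    path: Optional[str] = None                  # path-pattern (case-sensitive)
    methods: Optional[List[str]] = None         # http-request-method
    header: Optional[Tuple[str, str]] = None    # http-header: (name, value pattern)

    def matches(self, req: Request) -> bool:
        # Every condition present on the rule must match (AND logic).
        if self.host and not fnmatchcase(req.host.lower(), self.host.lower()):
            return False
        if self.path and not fnmatchcase(req.path, self.path):
            return False
        if self.methods and req.method not in self.methods:
            return False
        if self.header:
            name, pattern = self.header
            value = {k.lower(): v for k, v in req.headers.items()}.get(name.lower())
            if value is None or not fnmatchcase(value, pattern):
                return False
        return True


def route(rules: List[Rule], req: Request, default: str = "fixed-response-404") -> str:
    """Evaluate rules in ascending priority order; first match wins."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.matches(req):
            return rule.target_group
    return default


# Hypothetical rule set: the version-header rule sits ahead of the general users rule.
RULES = [
    Rule(10, "users-v2", path="/api/users/*", header=("X-Api-Version", "v2")),
    Rule(20, "users", path="/api/users/*"),
    Rule(30, "orders-writes", path="/api/orders/*", methods=["POST", "PUT"]),
    Rule(40, "orders", path="/api/orders/*"),
]
```

With these rules:

- A GET to /api/users/7 carrying X-Api-Version: v2 lands on users-v2.
- The same request without the header falls through to users.
- A POST to /api/orders/1 goes to orders-writes.
- /health matches nothing and gets the default action.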

| Routing Capability | ALB | API Gateway REST | API Gateway HTTP |
|---|---|---|---|
| Path-based routing | Yes | Yes | Yes |
| Host-based routing | Yes | Custom domains only | Custom domains only |
| Header-based routing | Yes | No | No |
| Query string routing | Yes | No | No |
| HTTP method routing | Yes | Yes | Yes |
| Source IP routing | Yes | No | No |
| Weighted routing | Yes (weighted target groups) | Canary deployments | No |
| Fixed response | Yes (custom status + body) | Mock integration | No |
| Redirect actions | Yes (301/302) | No | No |
| Multiple conditions per rule | Up to 5 AND conditions | One resource path | One route |
| Free of a shared regional request throttle | Yes (ALB doesn't throttle; Lambda concurrency is still shared) | No (shared regional) | No (shared regional) |
| Max rules / routes | 100 rules per ALB (adjustable) | 300 resources per API (adjustable) | 300 routes per API (adjustable) |

Cost Analysis

Depends heavily on request volume and cache hit ratio. Raw numbers first, then the factors that shift things.

Each component in the CloudFront + ALB + Lambda path has its own pricing model.

| Component | Pricing Model | Key Metric | Approximate Rate (US East) |
|---|---|---|---|
| CloudFront requests | Per-request | HTTPS requests | $1.00 per 1M requests |
| CloudFront data transfer | Per-GB | Data out to internet | $0.085 per GB (first 10 TB) |
| ALB fixed | Hourly | Running hours | $16.20 per month |
| ALB LCU | Hourly per LCU | Highest of 4 dimensions | $0.008 per LCU-hour |
| Lambda requests | Per-request | Invocations | $0.20 per 1M requests |
| Lambda compute | Per-GB-second | Memory x duration | $0.0000166667 per GB-second |

Total monthly cost comparison across five invocation paths. Assumptions: 128 MB Lambda, 100 ms average duration, 2 KB average request+response size, HTTPS, US East, no free tier, no CloudFront caching (worst case for CloudFront paths).

| Monthly Requests | API GW REST | API GW HTTP | CF + ALB | Function URL | CF + Function URL |
|---|---|---|---|---|---|
| 1M | $4 | $1 | $18 | <$1 | $2 |
| 10M | $39 | $14 | $33 | $4 | $16 |
| 100M | $391 | $141 | $184 | $41 | $158 |
| 500M | $1,838 | $685 | $853 | $205 | $790 |
| 1B | $3,443 | $1,340 | $1,689 | $410 | $1,580 |

Without caching, CloudFront + ALB breaks even with API Gateway REST somewhere below 10 million requests per month; at 10M it's already $33 against $39. On raw per-request cost alone? Never beats API Gateway HTTP API. The advantage comes from two places. First, CloudFront caching, which eliminates origin requests entirely. Second, operational capabilities (WAF, DDoS protection, edge TLS), which you'd otherwise pay for separately or can't get at all through API Gateway.
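The table is reproducible with a small model. This sketch uses the stated assumptions: 128 MB, 100 ms, 2 KB transferred per request, US East list prices, no free tier, and no caching. The API Gateway tiers are assumptions taken from US East list pricing:

- REST: $3.50 per million for the first 333 million, then $2.80.
- HTTP: $1.00 per million for the first 300 million, then $0.90.

ALB LCU charges are left out because they depend on traffic shape. The `cf_alb_before_lcu` figure is therefore a floor, not the table's number. Results land within a couple of dollars of the table, which rounds Lambda to $0.41 per million.

```python
LAMBDA_REQ = 0.20          # USD per million invocations
LAMBDA_GBS = 0.0000166667  # USD per GB-second
CF_REQ = 1.00              # USD per million HTTPS requests
CF_GB = 0.085              # USD per GB out (first 10 TB)
ALB_FIXED = 16.20          # USD per month


def tiered(millions, tiers):
    """Apply (tier_size_in_millions, price_per_million) tiers in order."""
    total, remaining = 0.0, millions
    for size, price in tiers:
        used = min(remaining, size)
        total += used * price
        remaining -= used
        if remaining <= 0:
            break
    return total


def lambda_cost(m, memory_mb=128, duration_ms=100):
    """Lambda request + compute cost for m million invocations."""
    gb_seconds = m * 1_000_000 * (memory_mb / 1024) * (duration_ms / 1000)
    return m * LAMBDA_REQ + gb_seconds * LAMBDA_GBS


def cloudfront_cost(m, kb_per_request=2):
    """CloudFront request + data-out cost for m million requests."""
    gb_out = m * 1_000_000 * kb_per_request / 1_000_000  # KB -> GB (decimal)
    return m * CF_REQ + gb_out * CF_GB


def monthly_costs(m):
    """m = monthly requests in millions. CF + ALB excludes LCU charges."""
    lam = lambda_cost(m)
    return {
        "rest": tiered(m, [(333, 3.50), (667, 2.80), (float("inf"), 2.38)]) + lam,
        "http": tiered(m, [(300, 1.00), (float("inf"), 0.90)]) + lam,
        "cf_alb_before_lcu": cloudfront_cost(m) + ALB_FIXED + lam,
        "function_url": lam,
        "cf_function_url": cloudfront_cost(m) + lam,
    }
```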

Now add caching. 80% cache hit ratio is realistic for read-heavy APIs. Product catalogs, config data, public content. Only 20% of requests actually reach ALB and Lambda. At 500 million requests per month that drops CloudFront + ALB from $853 to roughly $648. Cheaper than HTTP API's $685. Higher hit ratio, more savings. I've run APIs at 90%+ hit ratios. The numbers get very compelling at that point.
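The cache effect is simple arithmetic. CloudFront still bills every request and every byte, but only the miss fraction reaches the ALB and Lambda. This sketch reproduces the 500-million example and takes the component figures as assumptions: $500 CloudFront requests, $85 data transfer, $16.20 ALB fixed, $205 Lambda, and about $47 of LCU charges. It assumes LCU charges scale linearly with origin traffic.

```python
def cf_alb_with_cache(hit_ratio, cf_requests=500.0, cf_transfer=85.0,
                      alb_fixed=16.20, lambda_cost=205.0, lcu_cost=46.8):
    """Monthly USD for CF + ALB when `hit_ratio` of requests are served from cache.

    CloudFront request and transfer charges apply to every request; Lambda and
    (by assumption, linearly) LCU charges apply only to cache misses.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    miss = 1.0 - hit_ratio
    return cf_requests + cf_transfer + alb_fixed + miss * (lambda_cost + lcu_cost)
```

At 0.8 this lands around $652, in line with the roughly $648 above; the gap is the LCU assumption. Push the hit ratio to 0.9 and it falls to about $626.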

| Hidden Cost Factor | Impact | Notes |
|---|---|---|
| CloudFront caching | Reduces origin costs 50-90% | Every cache hit eliminates ALB and Lambda charges entirely |
| ALB health checks | $0.50-2/month per target group | Each health check invokes Lambda; use longer intervals |
| Provisioned Concurrency | $0.0000041667/GB-second | Eliminates cold starts but adds steady-state cost |
| CloudFront real-time logs | $0.01 per 1M log lines | Optional; standard logs are free but delayed |
| WAF rules | $5/month per web ACL + $1/rule + $0.60/M requests | Same cost regardless of attachment point |
| Data transfer (ALB to Lambda) | Free | Lambda invocation within the same region incurs no data transfer charge |

Comparison With Alternatives

Five distinct paths to invoke a Lambda function over HTTP now. Different trade-offs on each one.

| Capability | REST API | HTTP API | CF + ALB | Function URL | CF + Function URL |
|---|---|---|---|---|---|
| Max timeout | 29s (default) | 30s | 60s default (CloudFront origin timeout) | 15 min | 60s default (CloudFront origin timeout) |
| Max request payload | 6 MB (Lambda limit; API allows 10 MB) | 6 MB (Lambda limit; API allows 10 MB) | 1 MB | 6 MB | 6 MB |
| Max response payload | 6 MB (Lambda limit) | 6 MB (Lambda limit) | 1 MB | 6 MB | 6 MB |
| Response streaming | No | No | No | Yes | Yes |
| WebSocket | Separate WebSocket API type | No | No | No | No |
| Built-in caching | Yes (REST only) | No | Yes (CloudFront) | No | Yes (CloudFront) |
| WAF support | Yes (auto-CF on edge) | No | Yes (CF and/or ALB) | No | Yes (CloudFront) |
| DDoS protection | Shield Standard | None | Shield Standard (CF) | None | Shield Standard (CF) |
| Custom domain + TLS | Yes | Yes | Yes | No (needs CloudFront) | Yes |
| Auth offloading | Authorizers, IAM, Cognito | Authorizers, IAM, JWT | ALB Cognito/OIDC | IAM only | IAM only |
| Request transformation | Yes (VTL mapping) | No | No | No | No |
| Request validation | Yes | No | No | No | No |
| Multi-function routing | Yes | Yes | Yes | No (single function) | No (single function) |
| Per-route throttling | Yes | Yes (route settings) | No | No | No |
| Usage plans / API keys | Yes | No | No | No | No |
| Regional throttle limit | 10K RPS (shared) | 10K RPS (shared) | None | None | None |
| mTLS | Yes | Yes | Yes (CF to ALB) | No | No |
| gRPC | No | No | No (Lambda targets) | No | No |
| OpenAPI import | Yes | Yes | No | No | No |
| Canary deployments | Yes | No | Weighted target groups | Alias routing | Alias routing |
| Pricing model | Per-request | Per-request | LCU + fixed + per-request (CF) | Per-request (Lambda only) | Per-request (Lambda + CF) |

When to use each path:

- API Gateway REST API. You need request validation, VTL transformations, or usage plans (WebSockets come from API Gateway's separate WebSocket API type). Keep volume under 100M requests/month or the bill gets ugly.

- API Gateway HTTP API. Simplest, cheapest API Gateway option. JWT auth built in. Good default for greenfield.

- CloudFront + ALB. Multi-function routing, caching, WAF, auth offloading, no timeout or throttle limits. Volume needs to justify the ALB fixed cost.

- Function URL. Single function, direct HTTP, minimal overhead. Webhooks. Internal service-to-service. Streaming. Dead simple.

- CloudFront + Function URL. Single function needing edge caching, WAF, or a custom domain. No multi-function routing. Simpler than ALB. No fixed cost.

Cold Starts and Performance

Straightforward. Also unforgiving. ALB invokes Lambda synchronously and waits. Cold start takes 3 seconds? User waits 3 seconds. No automatic retry on cold start latency. Same as API Gateway there. Health checks add a wrinkle, though.

Here's what burned me in production. Lambda goes cold. Health check fires. Times out because the cold start exceeds the timeout window. Enough consecutive failures and ALB marks the target unhealthy. Stops routing traffic. 503 errors until subsequent checks succeed. But the function needs traffic to stay warm. And the ALB stopped sending traffic because the function was cold. Not a true deadlock, since the health checks themselves eventually warm it back up. But it behaves like one while the 503s pile up.

Three mitigations:

- Keep the health check timeout at 10 seconds or more (the default is 30), comfortably above your worst-case cold start. Raise the unhealthy threshold to 3. Give the function time to finish a cold start before ALB gives up.

- Increase the health check interval to 60-300 seconds. Fewer invocations, less cold start exposure. Yes, the function is more likely to be cold when a check arrives. Acceptable trade-off. Real user traffic keeps functions warm. Health checks don't.

- Use Provisioned Concurrency. Eliminates cold starts entirely. Keeps a specified number of execution environments initialized and ready. Health checks always hit a warm function. Problem solved.

Connection draining barely applies to Lambda targets. There are no persistent connections to drain, and the deregistration delay attribute isn't supported on Lambda target groups. For deployments, shift traffic with Lambda aliases or weighted target groups rather than by deregistering targets. An invocation that has already started runs to completion on the version it started on.

Security Architecture

Five security layers. Each one addresses a different threat category.

Preventing ALB bypass. Most critical security concern here. Your ALB has a public DNS name by default. Anyone can call it directly. Skip CloudFront, skip WAF, skip geo-restrictions, skip all edge security. I've watched pen testers find exposed ALBs within minutes. Two layers of defense.

First: configure the ALB's security group to allow inbound traffic only from the CloudFront managed prefix list (com.amazonaws.global.cloudfront.origin-facing). AWS-managed list of IP ranges. Only CloudFront can reach your ALB at the network layer.

Second: add a custom origin header in CloudFront origin configuration (X-Origin-Verify: <secret-value>) and validate it in your ALB listener rules or Lambda. Even if an attacker routes traffic from CloudFront IP ranges somehow, they can't forge the secret header. Rotate periodically with Secrets Manager.
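If the check lives in Lambda rather than in a listener rule, a sketch might look like this. X-Origin-Verify is the header name from the text. The environment variables ORIGIN_SECRET_CURRENT and ORIGIN_SECRET_PREVIOUS are hypothetical stand-ins for whatever your deployment loads from Secrets Manager. Accepting both secrets during a rotation window avoids the intermittent 403s a hard cutover can cause. `hmac.compare_digest` keeps the comparison constant-time. The function reads both headers and multiValueHeaders, so it works whichever way the target group is configured. The response shown assumes multi-value headers are off.

```python
import hmac
import os

HEADER = "x-origin-verify"


def _header_values(event, name):
    """Collect values for `name` from headers or multiValueHeaders (case-insensitive)."""
    values = []
    for key, value in (event.get("headers") or {}).items():
        if key.lower() == name:
            values.append(value)
    for key, vals in (event.get("multiValueHeaders") or {}).items():
        if key.lower() == name:
            values.extend(vals)
    return values


def came_through_cloudfront(event) -> bool:
    # Hypothetical env vars; during rotation both secrets are accepted.
    secrets = [s for s in (os.environ.get("ORIGIN_SECRET_CURRENT"),
                           os.environ.get("ORIGIN_SECRET_PREVIOUS")) if s]
    for presented in _header_values(event, HEADER):
        for secret in secrets:
            if hmac.compare_digest(presented.encode(), secret.encode()):
                return True
    return False


def handler(event, context):
    # Response format shown assumes multi-value headers are disabled.
    if not came_through_cloudfront(event):
        return {"statusCode": 403, "statusDescription": "403 Forbidden",
                "headers": {"Content-Type": "text/plain"},
                "body": "forbidden", "isBase64Encoded": False}
    return {"statusCode": 200, "statusDescription": "200 OK",
            "headers": {"Content-Type": "text/plain"},
            "body": "ok", "isBase64Encoded": False}
```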

ALB native authentication. Underappreciated feature. ALB authenticates users against Cognito user pools or any OIDC provider (Okta, Auth0, Azure AD) before the request touches Lambda. Handles the full OAuth 2.0 / OIDC flow: redirect to provider, token exchange, token validation. Passes authenticated claims to Lambda in the x-amzn-oidc-data header. No login flow in your function code. Entire class of security bugs gone. One job stays with you: verify the ES256 signature on x-amzn-oidc-data before trusting its claims. The key ID is in the JWT header, and ALB publishes the matching public key per region.
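Reading those claims takes a few lines of standard-library Python. This sketch only splits and decodes the JWT header and payload. It deliberately does not verify the ES256 signature. Do that with an ECDSA-capable library and the public key ALB publishes for the `kid` in the header before trusting any claim. Which claims appear (email, for example) depends on your identity provider. The decoder tolerates both padded and unpadded base64url segments.

```python
import base64
import json


def _b64url_decode(segment: str) -> bytes:
    # Add padding only if missing; already-padded segments are left as-is.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def read_alb_oidc_data(token: str):
    """Split an x-amzn-oidc-data JWT into (header, claims).

    WARNING: no signature verification happens here. Verify the ES256
    signature against the key identified by header["kid"] before using
    the claims for any authorization decision.
    """
    header_b64, payload_b64, _signature = token.split(".")
    header = json.loads(_b64url_decode(header_b64))
    claims = json.loads(_b64url_decode(payload_b64))
    return header, claims
```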

| Permission Relationship | Resource | Principal | Purpose |
|---|---|---|---|
| CloudFront to ALB | ALB listener | CloudFront (via prefix list + custom header) | Allow CloudFront to forward requests to ALB |
| ALB to Lambda | Lambda function resource policy | elasticloadbalancing.amazonaws.com | Allow ALB to invoke the Lambda function |
| Lambda execution role | AWS services (DynamoDB, S3, etc.) | Lambda function | Allow Lambda to access backend resources |
| WAF to CloudFront | CloudFront distribution | WAF web ACL | Attach WAF rules to the distribution |
| ALB to Cognito | Cognito user pool | ALB | Allow ALB to authenticate users against Cognito |

Limitations and Gotchas

Constraints. Several of them. Design around them from the start or pay later.

| Limitation | Constraint | API Gateway Equivalent | Impact |
|---|---|---|---|
| Request payload | 1 MB | 6 MB with Lambda integration (10 MB API limit) | The single biggest constraint; affects file uploads, large JSON bodies |
| Response payload | 1 MB | 6 MB with Lambda integration (10 MB API limit) | Limits large API responses; pagination becomes mandatory |
| No WebSocket | Lambda targets only support HTTP | API Gateway offers a separate WebSocket API type | Use API Gateway WebSocket API or AppSync for real-time |
| No response streaming | ALB waits for complete Lambda response | API Gateway also does not stream | Use Function URLs if streaming is required |
| No request validation | Must validate in Lambda code | REST API has built-in JSON schema validation | Move validation to Lambda or a shared middleware layer |
| No request transformation | Must transform in Lambda code | REST API has VTL mapping templates | Implement in Lambda; arguably cleaner than VTL anyway |
| No usage plans or API keys | Not available | REST API has full usage plan support | Implement in Lambda or use a third-party API management layer |
| No OpenAPI import | Must configure listener rules manually or via IaC | Both REST and HTTP APIs support OpenAPI import | Use CloudFormation, CDK, or Terraform for rule management |
| No per-route throttling | ALB does not throttle | REST API supports per-method throttling | Implement in Lambda or use WAF rate-based rules per path |
| No built-in canary | Must use weighted target groups | REST API supports canary deployments | Use ALB weighted target groups with Lambda aliases |

Multi-value headers. Another quiet failure. Forget to enable multi-value header support on the target group and Lambda silently receives only the last value of any repeated header. Missing cookies. Broken CORS when Access-Control-Allow-Origin appears in both origin and ALB. Wrong query parameters. The flag takes 30 seconds to flip, plus switching your handler to return multiValueHeaders. Finding the problem takes hours. Nothing errors out. Data just vanishes.

Response streaming. Doesn't exist for Lambda behind ALB. ALB buffers the complete Lambda response before sending anything to the client. Need server-sent events? Chunked transfer encoding? Lambda response streaming? Function URLs configured with the RESPONSE_STREAM invoke mode (InvokeWithResponseStream under the hood). That's your only option.

Infrastructure as Code

More AWS resources than API Gateway. That's the trade-off. Each resource is independently manageable. The total count is just higher. Plan your IaC accordingly.

| Resource | Purpose | Key Configuration |
|---|---|---|
| CloudFront distribution | Edge caching, WAF, TLS termination | Origin pointing to an ALB hostname covered by the HTTPS listener's certificate; cache behaviors per path pattern |
| CloudFront origin request policy | Controls which headers/cookies reach ALB | Forward only necessary headers to maximize cache hit ratio |
| CloudFront cache policy | Defines cache key composition | Varies per path: caching for reads, disabled for writes |
| WAF web ACL | Request filtering and rate limiting | Attach to CloudFront distribution; managed rule groups recommended |
| ALB | Request routing to Lambda targets | Internet-facing or internal; HTTPS listener with ACM certificate |
| ALB listener | TLS termination and rule evaluation | HTTPS on port 443; default action returns 404 or routes to catch-all |
| ALB listener rules | Path/host routing to target groups | One rule per route pattern; priority ordering matters |
| ALB target groups | Lambda function registration | One target group per Lambda function; multi-value headers enabled |
| Security group | Network-level access control | Inbound HTTPS from CloudFront managed prefix list only |
| Lambda functions | Business logic | Handler must return ALB-compatible response format |
| Lambda resource policies | Authorization for ALB invocation | Allow elasticloadbalancing.amazonaws.com to invoke each function |

Compare that to API Gateway. Single AWS::ApiGateway::RestApi or AWS::ApiGatewayV2::Api resource gives you routing, throttling, TLS. Done.

With this pattern you manage the routing layer yourself. Full control over listener rules, target group weights, health check parameters. All independently modifiable without redeploying your entire API. I prefer that control. More resources to wrangle in CloudFormation or Terraform, though. No getting around it.
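For a sense of what one route costs in IaC, here is a sketch that emits the CloudFormation resources for a single Lambda behind an existing listener:

- the invoke permission;
- a lambda-type target group with multi-value headers enabled and the health check settings recommended earlier;
- a path-pattern listener rule.

It is plain Python producing a template dict. The logical IDs, the `/api/users/*` path, and the priority are hypothetical, and the function and listener ARNs are assumed to arrive as template parameters. The DependsOn on the permission is there because ALB must already be allowed to invoke the function when the target group registers it.

```python
import json


def lambda_route_resources(name, path_pattern, priority, function_arn_ref, listener_arn_ref):
    """Return CloudFormation resources for one ALB -> Lambda route (hypothetical IDs)."""
    perm, tg, rule = f"{name}InvokePermission", f"{name}TargetGroup", f"{name}ListenerRule"
    return {
        perm: {
            "Type": "AWS::Lambda::Permission",
            "Properties": {
                "Action": "lambda:InvokeFunction",
                "FunctionName": function_arn_ref,
                "Principal": "elasticloadbalancing.amazonaws.com",
            },
        },
        tg: {
            "Type": "AWS::ElasticLoadBalancingV2::TargetGroup",
            "DependsOn": perm,  # permission must exist before the target registers
            "Properties": {
                "TargetType": "lambda",
                "Targets": [{"Id": function_arn_ref}],
                "HealthCheckEnabled": True,
                "HealthCheckPath": "/health",
                "HealthCheckIntervalSeconds": 60,
                "HealthCheckTimeoutSeconds": 10,
                "HealthyThresholdCount": 3,
                "UnhealthyThresholdCount": 3,
                "Matcher": {"HttpCode": "200"},
                "TargetGroupAttributes": [
                    {"Key": "lambda.multi_value_headers.enabled", "Value": "true"}
                ],
            },
        },
        rule: {
            "Type": "AWS::ElasticLoadBalancingV2::ListenerRule",
            "Properties": {
                "ListenerArn": listener_arn_ref,
                "Priority": priority,
                "Conditions": [
                    {"Field": "path-pattern", "PathPatternConfig": {"Values": [path_pattern]}}
                ],
                # Ref on a target group returns its ARN.
                "Actions": [{"Type": "forward", "TargetGroupArn": {"Ref": tg}}],
            },
        },
    }


template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Parameters": {
        "UsersFunctionArn": {"Type": "String"},
        "HttpsListenerArn": {"Type": "String"},
    },
    "Resources": lambda_route_resources(
        "Users", "/api/users/*", 20, {"Ref": "UsersFunctionArn"}, {"Ref": "HttpsListenerArn"}
    ),
}
print(json.dumps(template, indent=2))
```

Each additional route is another call merged into Resources. That repetition is exactly the "more resources" trade-off above.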

Common Failure Modes

| Failure Mode | Symptom | Root Cause | Mitigation |
|---|---|---|---|
| ALB returns 502 | Bad Gateway error to client | Lambda function returned an invalid response (wrong JSON structure, missing statusCode) | Validate Lambda response format; log raw responses during development |
| ALB returns 503 | Service Unavailable | All Lambda targets marked unhealthy; or Lambda throttled by concurrency limit | Increase health check tolerance; increase Lambda reserved concurrency |
| ALB or CloudFront returns 504 | Gateway Timeout | Lambda execution exceeded ALB idle timeout (default 60s), or the CloudFront origin response timeout (default 30s) expired first | Increase ALB idle timeout and CloudFront origin response timeout; optimize Lambda execution time |
| Intermittent 403 from CloudFront | Forbidden errors on some requests | WAF rule blocking legitimate traffic; or custom origin header mismatch after rotation | Review WAF logs; coordinate header rotation between CloudFront and ALB |
| Cold start health check failures | Target flaps between healthy and unhealthy | Health check timeout shorter than cold start duration | Increase health check timeout and unhealthy threshold; use Provisioned Concurrency |
| Missing cookies in response | Set-Cookie headers lost | Multi-value headers not enabled on target group | Enable lambda.multi_value_headers.enabled on the target group and return multiValueHeaders |
| Cache serving stale data | Users see outdated content after Lambda update | CloudFront TTL longer than acceptable staleness | Use cache invalidation on deploy; or use short TTLs with stale-while-revalidate |
| CloudFront bypass | Attackers call ALB directly, skipping WAF | Security group allows traffic from non-CloudFront IPs | Restrict security group to CloudFront managed prefix list; validate custom origin header |
| 1 MB payload rejection | Requests over 1 MB get HTTP 413; responses over 1 MB get HTTP 502 | Request or response exceeds ALB Lambda payload limit | Implement presigned URL pattern for large payloads; add payload size validation early in the request |
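The payload row is worth guarding in code. Otherwise an oversized response surfaces only as a 502 from ALB, after the work is already done. This sketch assumes Python and measures the JSON document the function returns. It treats 1 MB conservatively as 10^6 bytes and keeps a hypothetical `SAFETY_MARGIN`, because ALB's exact byte accounting isn't modeled. Base64-encoded bodies are counted at their encoded size, which is what the function actually hands back. Returning a 500 with an explicit message is one choice among several; the real fix is still pagination or a presigned URL.

```python
import json

ALB_LIMIT_BYTES = 1_000_000   # treat "1 MB" conservatively as 10^6 bytes
SAFETY_MARGIN = 0.9           # hypothetical headroom for accounting not modeled here


def response_size(response: dict) -> int:
    """Bytes of the JSON document the function hands back to ALB."""
    return len(json.dumps(response).encode("utf-8"))


def guard_response(response: dict) -> dict:
    """Pass small responses through; replace oversized ones with an explicit error."""
    size = response_size(response)
    if size <= ALB_LIMIT_BYTES * SAFETY_MARGIN:
        return response
    # Logged so the oversized endpoint shows up in CloudWatch, not as an opaque 502.
    print(json.dumps({"oversizedResponseBytes": size}))
    return {
        "statusCode": 500,
        "statusDescription": "500 Internal Server Error",
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"error": "response too large; request a smaller page"}),
        "isBase64Encoded": False,
    }
```

Wrap the handler's final return, for example `return guard_response(build_response(...))`, where `build_response` is whatever assembles your normal response.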

Key Architectural Recommendations

Built and operated this pattern across multiple production systems. Ten recommendations I keep coming back to.

- Use this pattern when monthly volume exceeds 30 million requests and you need routing, caching, or WAF. Below that? API Gateway HTTP API. Simpler. Cheaper. Above it, with a healthy cache hit ratio, CloudFront caching and ALB's LCU pricing deliver real savings.

- Always put CloudFront in front of the ALB. ALB serving Lambda without CloudFront gives you routing, plus regional WAF if you attach it there. No caching. No edge DDoS absorption. No edge TLS. CloudFront adds all of that. Per-request cost often offset by cache hits alone.

- Lock down the ALB. CloudFront managed prefix list plus custom origin header. Security group blocks network-level bypass. Custom header blocks application-level bypass. Both. Always.

- Enable multi-value headers on every Lambda target group. Near-zero downside: the handler returns multiValueHeaders instead of headers. Silently dropping duplicate headers will cost you hours of debugging. I know this firsthand.

- Health check intervals: 60 seconds or longer for Lambda targets. Default 35-second interval generates unnecessary invocations. Increases cold start exposure. Real traffic keeps functions warm. Health checks don't.

- Provisioned Concurrency for latency-sensitive endpoints. Eliminates cold starts. Prevents health check flapping. Predictable performance. Cost is modest relative to reliability improvement. On-call engineers will thank you.

- Design for the 1 MB payload limit from day one. Presigned S3 URLs for uploads. Pagination for large responses. Compressed payloads. Don't discover this limit at 2 AM in production.

- Consolidate APIs onto a single ALB. Host-based and path-based routing. Pay the ALB fixed cost once. Multiple target groups cost nothing extra beyond LCU consumption. Separate API Gateways per service? Costs significantly more.

- CloudFront + Function URLs for single-function use cases. One Lambda function needing edge caching and WAF? No multi-function routing needed? Skip the ALB. Simpler. No fixed cost.

- Three metrics to monitor: CloudFront cache hit ratio, ALB target response time, Lambda concurrent executions. Cache hit ratio tells you origin traffic savings. Target response time reveals cold starts. Concurrent executions warns you before hitting Lambda's regional concurrency limit. Everything else is secondary.