Most teams ignore Step Functions until they find themselves writing ad-hoc state management code inside Lambda functions, chaining queues together with brittle retry logic, or building homegrown saga coordinators that nobody wants to maintain. The service is a fully managed state machine engine that coordinates distributed components (Lambda functions, ECS tasks, DynamoDB operations, SQS messages, human approvals, and over two hundred other AWS service actions) through a declarative JSON-based workflow definition. I have spent years building production orchestration on Step Functions: ETL pipelines processing billions of records, saga-based transaction systems spanning dozens of microservices, real-time data enrichment at tens of thousands of events per second. This article captures what I have learned about the internals, the trade-offs, the failure modes, and the patterns that survive contact with production traffic.

What Step Functions Actually Is

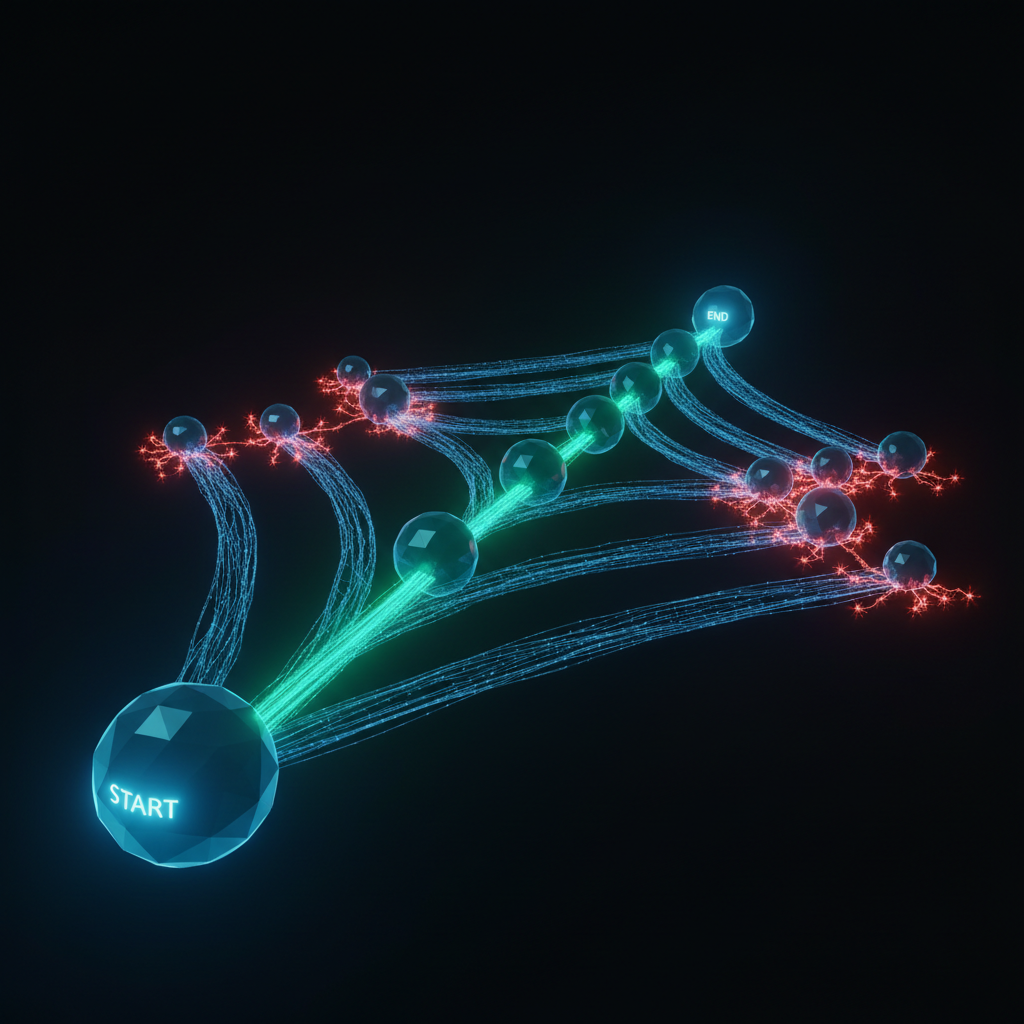

Step Functions is a managed state machine engine. You define a workflow as a set of states and transitions using the Amazon States Language (ASL), and the service executes that definition: state persistence, retries, error handling, parallelism, execution history. All managed. Every Step Functions workflow is a finite state machine with a defined set of states, transitions, input and output processing, and terminal conditions. The runtime transitions from state to state, performs work at each one (invoking a Lambda function, writing to DynamoDB, waiting for a callback), and passes data through the execution.

That distinction matters more than people think. Step Functions is a state machine, not a general-purpose workflow engine like Apache Airflow (a DAG scheduler with a Python programming model). Formal semantics. Declarative definition language. Execution guarantees that derive from the state machine model itself. You define what should happen; the engine handles how, when, and in what order.

The real payoff is separating orchestration logic from business logic. Without Step Functions, "what happens next" is scattered across Lambda functions, queue consumers, and application code. Each component knows about the next component in the chain, handles its own retries, manages its own state, propagates errors upstream. It is a mess at scale. Step Functions pulls all that orchestration into the state machine definition. Each component does its own job and nothing more.

Three practical consequences fall out of this separation:

- Visibility. Step Functions shows a visual representation of every execution: which state is active, which succeeded or failed, the exact input and output of every transition. When an order processing workflow fails at step seven of twelve, you see exactly what happened. No searching through CloudWatch logs across six Lambda functions.

- Reliability. Exactly-once execution semantics (for Standard workflows), durable state checkpointing, configurable retry policies with exponential backoff, error catch blocks. All declarative. You declare the behavior you want and the runtime delivers it.

- Maintainability. Adding a new step to a workflow means adding a state to the ASL definition, not modifying multiple Lambda functions to pass data through a new link in the chain. Removing a step, reordering steps, or adding conditional branching are all changes to the workflow definition rather than changes to business logic code.

Workflow Studio in the Step Functions console lets you build and modify state machines graphically: drag and drop states, configure integrations, preview the ASL definition in real time. Useful for prototyping and for making sense of someone else's workflow. For production systems, I manage ASL definitions in code (CDK or Terraform) and treat the visual designer as a read-only debugging tool.

Where Step Functions Fits

Step Functions occupies the orchestration layer of a serverless architecture. If Lambda is the compute primitive, EventBridge is the event bus, SQS is the queue, and DynamoDB is the database, then Step Functions is the coordinator that ties these primitives together into coherent business processes.

The alternatives in AWS for orchestration are:

| Approach | Strengths | Weaknesses |

|---|---|---|

| Step Functions | Declarative, visual, built-in error handling, exactly-once semantics, native AWS integrations | ASL learning curve, state transition costs, 256 KB payload limit |

| EventBridge + Lambda | Loosely coupled, event-driven, scales independently | No built-in state tracking, error handling is manual, hard to reason about execution flow |

| SQS + Lambda | Simple, reliable, natural backpressure | Sequential only, no branching or parallelism, error handling via DLQ only |

| Lambda chaining | Simple to implement initially | No error recovery, no state tracking, tight coupling, cascading timeouts |

| MWAA (Managed Airflow) | Python-native, mature scheduling, rich operator ecosystem | Server-based (not serverless), slower cold start, overkill for event-driven work |

Standard vs. Express Workflows

Step Functions offers two workflow types. They differ architecturally, not just in pricing. Get this choice wrong and you will either overpay by two orders of magnitude or lack the durability guarantees your system requires.

Standard Workflows

Standard workflows are durable, exactly-once state machines. Every state transition is persisted to an internal data store before the next state begins. If the Step Functions service itself has an infrastructure failure mid-execution, it recovers from the last persisted state and continues without re-executing completed states. The execution history (every state entry, exit, input, output, and error) is retained for 90 days, queryable through both the console and the API.

Exactly-once means each state executes one time. Period. If a Lambda function is invoked by a Standard workflow Task state, that function fires once and only once for that state transition. The persistence model enforces this: record the state transition, invoke the service, record the result, then transition. If the invocation fails, retry/catch logic handles it. If infrastructure fails after invocation but before recording the result, the runtime detects the gap and avoids re-execution.

Express Workflows

Express workflows are ephemeral, high-throughput state machines built for event processing. No durable state persistence. The entire execution runs in memory, and the final result is optionally logged to CloudWatch Logs. You trade durability and exactly-once semantics for dramatically higher throughput and lower cost.

Express workflows come in two invocation modes:

| Mode | Behavior | Semantics | Use Case |

|---|---|---|---|

| Asynchronous | Fire-and-forget; returns immediately with execution ARN | At-least-once | Event-driven processing, fire-and-forget pipelines |

| Synchronous | Caller blocks until workflow completes; result returned directly | At-most-once | API Gateway backend, request/response patterns |

Synchronous Express workflows shine as API Gateway backends. API Gateway invokes the workflow, waits for completion (up to 29 seconds, constrained by API Gateway's integration timeout), and returns the workflow output as the HTTP response. You get multi-step orchestration behind a single HTTP endpoint. I use this pattern heavily for API composition where one request needs to fan out to several services.

Detailed Comparison

| Characteristic | Standard | Express (Async) | Express (Sync) |

|---|---|---|---|

| Maximum duration | 1 year | 5 minutes | 5 minutes |

| Execution semantics | Exactly-once | At-least-once | At-most-once |

| State persistence | Every transition durably checkpointed | In-memory only | In-memory only |

| Execution history | 90-day retention, queryable via API and console | CloudWatch Logs only | CloudWatch Logs only |

| Execution start rate | 2,000/sec (default, soft limit) | 100,000/sec (default) | Depends on caller |

| State transitions/sec | 4,000/sec per account (soft limit) | Nearly unlimited | Nearly unlimited |

| Pricing model | $0.025 per 1,000 state transitions | $1.00 per 1M requests + $0.00001667/GB-sec | $1.00 per 1M requests + $0.00001667/GB-sec |

| Supported integration patterns | All (.sync, .waitForTaskToken, request-response) | Request-response only | Request-response only |

| Execution deduplication | Yes (via execution name) | No | No |

| Redrive (restart from failure) | Yes | No | No |

| Activity tasks | Yes | No | No |

| Visual debugging in console | Full execution graph and event history | No (CloudWatch Logs Insights only) | No |

When to Use Which

Use Standard when:

- Execution duration exceeds 5 minutes

- You need exactly-once semantics (financial transactions, order processing, inventory management)

- You need `.sync` or `.waitForTaskToken` integrations (waiting for ECS tasks, Glue jobs, human approvals)

- You need execution history for auditing, debugging, or compliance

- You need execution deduplication (idempotent starts via execution name)

- You need the ability to redrive failed executions from the point of failure

Use Express when:

- Processing high-volume events (IoT telemetry, streaming data enrichment, API request orchestration)

- Execution completes in under 5 minutes

- Your processing is idempotent, or can otherwise tolerate the weaker delivery guarantees (at-least-once for async, at-most-once for sync)

- Cost is a primary concern (Express is 10-250x cheaper for high-volume, short-duration workflows)

- You need throughput beyond Standard's 2,000 executions/second soft limit

A common production pattern combines both: a Standard workflow orchestrates the overall business process (order fulfillment, for example), and individual high-throughput steps within it invoke Express workflows for short-lived sub-tasks (data enrichment, validation fan-out).

Architecture Internals

Knowing how Step Functions executes state machines internally lets you predict performance, cost, and failure behavior before you hit production.

The Execution Engine

For Standard workflows, the execution engine operates on a durable checkpoint-and-proceed model:

- Execution start. When you call `StartExecution`, the engine creates an execution record and assigns a unique execution ARN. The initial input is persisted.

- State transition. At each state boundary, the engine durably persists the current state, input, output, and transition metadata before proceeding to the next state. This checkpoint is the foundation of the exactly-once guarantee: if the engine fails mid-execution, it recovers from the last checkpoint.

- Task execution. For Task states, the engine invokes the target service (Lambda, ECS, DynamoDB, etc.) and waits for a response. The engine manages the timeout, retry, and catch logic according to the ASL definition. For `.sync` integrations, the engine polls the target service for completion. For `.waitForTaskToken`, the engine pauses and waits for an external callback.

- Completion. When the state machine reaches a terminal state (Succeed, Fail, or the last state with no `Next` field), the engine records the final output and marks the execution as complete or failed.

Express workflows are a different animal. No durable checkpointing between states. The entire execution runs in memory on a single host. Fast and cheap, yes. But if the host goes down mid-execution, the execution is gone. No recovery. No execution history to query after the fact.

The Scheduler and Latency

The scheduler determines when to execute the next state. For Standard workflows, it processes state transitions from a durable queue, which adds a small but measurable latency: typically 50-200ms per state transition. That overhead is the cost of durable checkpointing, and it accumulates. A 10-state Standard workflow burns 0.5-2 seconds in pure scheduler overhead before any actual work happens.

For Express workflows, the scheduler operates in-process; state transitions happen in memory with negligible overhead (sub-millisecond). This is why Express workflows have significantly lower end-to-end latency for multi-step workflows and are the better choice for latency-sensitive request/response patterns.

State Persistence

Standard workflow state persistence uses an internal, highly durable data store (built on DynamoDB-class infrastructure). Each state transition generates multiple persistence operations: the event log entry, the state snapshot, and the transition metadata. This persistence is what enables:

- Exactly-once semantics: The engine can detect and deduplicate operations that completed but were not acknowledged

- Execution history: Every detail of every state transition is available for 90 days

- Redrive: Failed executions can be restarted from the exact point of failure

- Recovery: Infrastructure failures do not lose execution progress

The trade-off is latency and cost. Each state transition costs $0.000025 and adds 50-200ms of overhead. For workflows where these costs are significant (high volume, low latency), Express workflows eliminate them entirely.
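To make that trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the prices and the 50-200ms overhead range quoted in this article; actual prices vary by region and change over time. The function names and the 64 MB memory figure in the example are illustrative assumptions, not part of any AWS API.

```python
# Figures quoted in this article; treat them as assumptions, not current price-list values.
STANDARD_PRICE_PER_TRANSITION = 0.000025        # USD per state transition
EXPRESS_PRICE_PER_REQUEST = 1.00 / 1_000_000    # USD per execution request
EXPRESS_PRICE_PER_GB_SECOND = 0.00001667        # USD per GB-second of duration
SCHEDULER_OVERHEAD_SECONDS = (0.050, 0.200)     # per Standard state transition


def standard_estimate(executions: int, transitions_per_execution: int) -> dict:
    """Estimate total cost and per-execution scheduler overhead for a Standard workflow."""
    transitions = executions * transitions_per_execution
    low, high = SCHEDULER_OVERHEAD_SECONDS
    return {
        "cost_usd": transitions * STANDARD_PRICE_PER_TRANSITION,
        "overhead_seconds": (
            transitions_per_execution * low,
            transitions_per_execution * high,
        ),
    }


def express_cost(executions: int, duration_seconds: float, memory_gb: float) -> float:
    """Estimate Express cost: a per-request charge plus billed GB-seconds."""
    gb_seconds = executions * duration_seconds * memory_gb
    return executions * EXPRESS_PRICE_PER_REQUEST + gb_seconds * EXPRESS_PRICE_PER_GB_SECOND


if __name__ == "__main__":
    # Hypothetical workload: one million 10-state executions, each about 1 second at 64 MB.
    std = standard_estimate(executions=1_000_000, transitions_per_execution=10)
    exp = express_cost(executions=1_000_000, duration_seconds=1.0, memory_gb=0.0625)
    print(f"Standard: ${std['cost_usd']:.2f}, scheduler overhead {std['overhead_seconds']} s/execution")
    print(f"Express:  ${exp:.2f}")
```

Plugging in your own transition counts and durations is usually enough to tell whether the durability of Standard is worth paying for on a given workload.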

Control Plane vs. Data Plane

Like most AWS services, Step Functions separates its control plane from its data plane:

| Plane | Operations | Characteristics |

|---|---|---|

| Control plane | CreateStateMachine, UpdateStateMachine, DeleteStateMachine, DescribeStateMachine, ListStateMachines | Eventually consistent, own rate limits, manages definitions |

| Data plane | StartExecution, DescribeExecution, GetExecutionHistory, SendTaskSuccess, SendTaskFailure, SendTaskHeartbeat | Highly available, processes executions, handles callbacks |

This separation matters when things break. A control plane issue does not affect running executions; the data plane keeps processing with the last deployed definitions. You just cannot deploy updates until the control plane recovers.

A gotcha that has bitten me: UpdateStateMachine is eventually consistent. Update a state machine definition and immediately start an execution, and that execution may use the old definition. In my deployment pipelines, I add a 5-10 second delay after the update before starting any test or production executions.

Amazon States Language (ASL)

ASL is the JSON-based DSL that defines state machine behavior. Most teams underestimate how much you can do in pure ASL without writing Lambda code. The data flow model is where the real leverage lives.

State Types

| State Type | Purpose | Common Use Cases |

|---|---|---|

| Task | Execute work by invoking a service integration | Lambda invocation, DynamoDB read/write, SQS send, ECS run task, Glue job, SageMaker training |

| Pass | Pass input to output, optionally transforming data | Inject fixed values, restructure payloads, mock states during development |

| Wait | Pause execution for a duration or until a timestamp | Rate limiting, scheduled delays, polling intervals |

| Choice | Branch based on input conditions | If/else routing, switch/case logic, conditional workflow paths |

| Parallel | Execute multiple branches concurrently | Fan-out to independent processing paths, parallel API calls |

| Map | Iterate over a collection, executing states for each item | Process each record in an array, batch item processing, large-scale parallel ETL |

| Succeed | Mark execution as successful (terminal state) | Explicit success endpoint |

| Fail | Mark execution as failed with error and cause (terminal state) | Explicit failure with structured error information |

Input/Output Processing

Every state in ASL has a data flow pipeline that controls how data enters the state, how results are combined with the input, and what passes to the next state. This is where teams get confused, and where I spent most of my early debugging time.

The processing order is:

| Stage | Purpose | Operates On | Default |

|---|---|---|---|

| 1. InputPath | Select a subset of the state input | Raw state input | $ (entire input) |

| 2. Parameters | Construct a new JSON object as effective input | Selected input from InputPath | None (pass through) |

| 3. (State executes) | The state performs its work | Effective input | N/A |

| 4. ResultSelector | Reshape the raw result from the state | Raw task result | None (pass through) |

| 5. ResultPath | Place the result relative to the original input | Original input + shaped result | $ (replace input with result) |

| 6. OutputPath | Select a subset as the final output | Combined input+result | $ (pass everything) |

Here is what trips up nearly every engineer I have worked with: ResultPath determines where the result is placed in the state's original input. Setting "ResultPath": "$.taskResult" inserts the task result at $.taskResult and preserves the entire original input alongside it. This is how you accumulate data across multiple states without losing context.

A common mistake is confusing ResultPath with OutputPath. ResultPath controls where the result lands in the combined document. OutputPath then selects what portion of that combined document passes to the next state. They work in sequence, not as alternatives.

InputPath selects a portion of the state input using a JSONPath expression. Setting "InputPath": "$.order" means the state only sees the order field from the input. Setting "InputPath": null discards all input; the state receives an empty object.

Parameters constructs the effective input using a combination of static values and references to the input. Fields ending in .$ are evaluated as JSONPath expressions or intrinsic functions:

    "Parameters": {
      "TableName": "orders",
      "Key": {
        "orderId": { "S.$": "$.order.id" }
      },
      "StaticValue": "fixed-string",
      "ExecutionId.$": "$$.Execution.Id"
    }

ResultSelector reshapes the raw result from the service invocation. This is essential when a service returns a verbose response and you only need a few fields. Without it, large responses bloat the execution data and push you toward the 256 KB payload limit.

ResultPath determines placement:

- `"ResultPath": "$.result"`: Nest the result under `$.result`, preserving the original input.

- `"ResultPath": "$"` (default): Replace the entire input with the result. Original input is lost.

- `"ResultPath": null`: Discard the result entirely. Output equals the original input, unchanged.

In most workflows, I explicitly set ResultPath to nest the result alongside the input. The default behavior of replacing the input is rarely what you want, because downstream states typically need both the result and the original context.
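The six-stage pipeline is easier to internalize when you can step through it. Below is a minimal Python sketch that mimics the processing order described above. It is a teaching model, not the Step Functions runtime: it only understands simple reference paths such as `$` and `$.a.b`, and it ignores intrinsic functions and the `$$` context object. The helper names (`get_path`, `set_path`, `apply_template`, `run_state`) are my own.

```python
import copy


def get_path(doc, path):
    """Resolve a simple reference path such as "$" or "$.a.b" (no filters or indexes)."""
    if path == "$":
        return doc
    node = doc
    for key in path[2:].split("."):
        node = node[key]
    return node


def set_path(doc, path, value):
    """Return a copy of doc with value placed at a simple reference path."""
    if path == "$":
        return value
    result = copy.deepcopy(doc)
    node = result
    keys = path[2:].split(".")
    for key in keys[:-1]:
        node = node.setdefault(key, {})
    node[keys[-1]] = value
    return result


def apply_template(template, source):
    """Evaluate a Parameters/ResultSelector template: keys ending in ".$" are paths."""
    out = {}
    for key, value in template.items():
        if key.endswith(".$"):
            out[key[:-2]] = get_path(source, value)
        elif isinstance(value, dict):
            out[key] = apply_template(value, source)
        else:
            out[key] = value
    return out


def run_state(state, state_input, task):
    """Run one state through InputPath, Parameters, task, ResultSelector, ResultPath, OutputPath."""
    input_path = state.get("InputPath", "$")
    selected = {} if input_path is None else get_path(state_input, input_path)
    effective = apply_template(state["Parameters"], selected) if "Parameters" in state else selected
    raw_result = task(effective)
    shaped = apply_template(state["ResultSelector"], raw_result) if "ResultSelector" in state else raw_result
    # ResultPath is applied to the ORIGINAL state input, not the InputPath selection.
    result_path = state.get("ResultPath", "$")
    combined = state_input if result_path is None else set_path(state_input, result_path, shaped)
    output_path = state.get("OutputPath", "$")
    return {} if output_path is None else get_path(combined, output_path)


if __name__ == "__main__":
    state = {
        "InputPath": "$.order",
        "Parameters": {"TableName": "orders", "OrderId.$": "$.id"},
        "ResultSelector": {"status.$": "$.Item.status"},
        "ResultPath": "$.lookup",
    }
    verbose_task = lambda params: {"Item": {"status": "PAID"}, "ResponseMetadata": {"RequestId": "abc"}}
    print(run_state(state, {"order": {"id": "o-1"}, "customer": "c-9"}, verbose_task))
```

Dropping the `ResultPath` line from the example state is a quick way to see the default behavior: the original `order` and `customer` fields vanish and only the shaped result survives.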

Intrinsic Functions

ASL provides intrinsic functions for data transformation within Parameters and ResultSelector, eliminating the need for Lambda functions that exist solely to do minor data manipulation:

| Function | Purpose | Example Use Case |
|---|---|---|
| `States.Format` | String interpolation with {} placeholders | Construct S3 keys, build messages |
| `States.StringToJson` | Parse a JSON string into an object | Process stringified JSON from SQS |
| `States.JsonToString` | Serialize an object to a JSON string | Prepare data for APIs requiring string input |
| `States.Array` | Create an array from arguments | Build parameter lists |
| `States.ArrayPartition` | Split an array into chunks of size N | Prepare batches for processing |
| `States.ArrayContains` | Check if array contains a value | Conditional logic in Choice states |
| `States.ArrayRange` | Generate a numeric range array | Create iteration sequences |
| `States.ArrayGetItem` | Get item by index | Extract specific elements |
| `States.ArrayLength` | Get array length | Conditional logic based on collection size |
| `States.ArrayUnique` | Deduplicate an array | Remove duplicates before processing |
| `States.Base64Encode` | Base64 encode a string | Prepare payloads for certain APIs |
| `States.Base64Decode` | Base64 decode a string | Process base64-encoded data |
| `States.Hash` | Hash a string (MD5, SHA-1, SHA-256, SHA-384, SHA-512) | Generate checksums, partition keys |
| `States.JsonMerge` | Shallow merge two JSON objects | Combine configuration with runtime data |
| `States.MathRandom` | Generate random number in a range | Sampling, jitter, random selection |
| `States.MathAdd` | Add two numbers | Increment counters, compute offsets |
| `States.StringSplit` | Split a string by delimiter | Parse delimited data |
| `States.UUID` | Generate a UUID v4 | Create unique identifiers for records |

I remember writing Lambda functions just to concatenate strings or generate UUIDs. Each one added 100-500ms of cold start latency, Lambda invocation cost, and a deployment artifact to maintain. Intrinsic functions killed that entire category of glue code, and good riddance.
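As an illustration of the glue code this replaces, here is a sketch of a Pass state that derives a request ID, an S3 key, and a set of batches purely with intrinsic functions. The definition is written as a Python dict and serialized to ASL JSON. The field names (`tenantId`, `order.id`, `items`), the batch size, and the `ProcessBatches` state name are hypothetical.

```python
import json

# A Pass state that computes derived values without invoking any Lambda function.
# Input field names and the next-state name are assumptions for illustration.
derive_values_state = {
    "Type": "Pass",
    "Parameters": {
        "requestId.$": "States.UUID()",
        "s3Key.$": "States.Format('exports/{}/{}.json', $.tenantId, $.order.id)",
        "batches.$": "States.ArrayPartition($.items, 25)",
        "itemCount.$": "States.ArrayLength($.items)",
    },
    # Nest the derived values next to the original input instead of replacing it.
    "ResultPath": "$.derived",
    "Next": "ProcessBatches",
}

if __name__ == "__main__":
    print(json.dumps(derive_values_state, indent=2))
```

Note that every key whose value is an intrinsic function call still needs the `.$` suffix, exactly as it would for a JSONPath reference.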

Context Object

The context object ($$) provides execution metadata accessible from Parameters and ResultSelector:

| Path | Value |
|---|---|
| `$$.Execution.Id` | The execution ARN |
| `$$.Execution.Name` | The execution name |
| `$$.Execution.StartTime` | ISO 8601 timestamp of execution start |
| `$$.Execution.Input` | The original execution input |
| `$$.Execution.RoleArn` | The execution role ARN |
| `$$.State.Name` | The current state name |
| `$$.State.EnteredTime` | ISO 8601 timestamp of state entry |
| `$$.State.RetryCount` | Current retry attempt (0-based) |
| `$$.StateMachine.Id` | The state machine ARN |
| `$$.StateMachine.Name` | The state machine name |
| `$$.Task.Token` | The task token (only in .waitForTaskToken states) |
| `$$.Map.Item.Index` | Current Map iteration index |
| `$$.Map.Item.Value` | Current Map iteration value |

I routinely pass $$.Execution.Id to Lambda functions so that application logs can be correlated back to the specific Step Functions execution. This is essential for debugging production issues. When a customer reports a problem, you need to trace from the Lambda logs to the execution history and back.
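A minimal sketch of that correlation pattern follows, assuming a Lambda-backed Task state. The helper builds the ASL for the task as a Python dict, and the handler shows one way the function might tag its log lines. The function name `charge-order`, the payload shape, and the handler body are hypothetical.

```python
import json


def lambda_task(function_name: str, next_state: str) -> dict:
    """Build a Task state that forwards execution metadata in the Lambda payload."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {
            "FunctionName": function_name,
            "Payload": {
                "order.$": "$.order",
                # Context object values, resolved by Step Functions at runtime.
                "executionId.$": "$$.Execution.Id",
                "stateName.$": "$$.State.Name",
            },
        },
        "ResultPath": "$.chargeResult",
        "Next": next_state,
    }


def handler(event, context):
    """Hypothetical Lambda handler: every log line carries the execution ARN."""
    print(json.dumps({
        "executionId": event["executionId"],
        "stateName": event["stateName"],
        "message": "charging order",
    }))
    return {"ok": True}


if __name__ == "__main__":
    print(json.dumps(lambda_task("charge-order", "ShipOrder"), indent=2))
```

With structured log lines like this, a single CloudWatch Logs Insights filter on `executionId` pulls together everything a given execution did across functions.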

Service Integrations

Step Functions integrates directly with over 220 AWS services. The integration patterns (how the workflow interacts with each service) dictate your architecture more than most teams realize. The distinction between optimized integrations, SDK integrations, and the three invocation patterns deserves close attention.

Optimized vs. SDK Integrations

Optimized integrations are purpose-built for specific, commonly-used services. They offer natural parameter mapping, structured results, and support for all three invocation patterns (.sync, .waitForTaskToken, and request-response where applicable).

SDK integrations use the generic AWS SDK to call any action on any AWS service. The resource ARN format is arn:aws:states:::aws-sdk:serviceName:apiAction. They support the request-response pattern and, in Standard workflows, .waitForTaskToken, but not .sync. Parameter names are PascalCase, matching the raw AWS SDK. If an AWS service has an API, Step Functions can call it directly. No Lambda intermediary needed.

Key Optimized Integrations

| Service | Common Actions | .sync Support | .waitForTaskToken | Notes |

|---|---|---|---|---|

| Lambda | Invoke | N/A (request-response already waits for the function result) | Yes | Most common integration; 15-min max function duration |

| DynamoDB | GetItem, PutItem, DeleteItem, UpdateItem, Query | N/A (instant) | N/A | Direct data operations without Lambda |

| SQS | SendMessage | N/A | Yes | Send with task token for callback patterns |

| SNS | Publish | N/A | Yes | Notify subscribers with task token |

| ECS/Fargate | RunTask | Yes | Yes | Run containers; wait for completion |

| AWS Batch | SubmitJob | Yes | N/A | Submit compute jobs; wait for completion |

| Glue | StartJobRun | Yes | N/A | Run ETL jobs; wait for completion |

| SageMaker | CreateTrainingJob, CreateTransformJob, CreateEndpoint | Yes | N/A | ML pipeline orchestration |

| CodeBuild | StartBuild | Yes | N/A | CI/CD pipeline orchestration |

| Athena | StartQueryExecution | Yes | N/A | Run SQL queries; wait for results |

| EventBridge | PutEvents | N/A | Yes | Emit events for event-driven architectures |

| Step Functions | StartExecution | Yes | Yes | Nest or chain state machines |

A pattern I use frequently: direct DynamoDB integration from Step Functions to read configuration, write status records, or perform conditional updates without routing through a Lambda function. Each Lambda invocation you eliminate removes 100-500ms of latency and the associated Lambda cost. For simple data operations, the direct integration is faster, cheaper, and has fewer moving parts.

Invocation Patterns

The three invocation patterns are architecturally distinct:

Request-Response (default): Step Functions calls the service API and immediately transitions to the next state with whatever the API returns. The workflow does not wait for any asynchronous process to complete.

- Use when: The API call itself is the work (sending a message, writing a record, publishing an event)

- Resource format: `arn:aws:states:::sqs:sendMessage`

Run a Job (.sync): Step Functions calls the service, then polls or listens for the job to complete before transitioning. The runtime handles the polling internally, so you do not need a Wait/Choice polling loop in your ASL.

- Use when: You need to wait for an asynchronous job to finish (ECS task, Glue job, Batch job, Athena query, SageMaker training)

- Resource format: `arn:aws:states:::ecs:runTask.sync`

- Important: Only available in Standard workflows

Wait for Callback (.waitForTaskToken): Step Functions generates a unique task token, sends it to the target service, and pauses the execution indefinitely. An external process must call SendTaskSuccess or SendTaskFailure with the token to resume the workflow.

- Use when: Work is performed by a human, an external system, or a process that cannot be polled (human approval, third-party webhook, cross-account coordination)

- Resource format: `arn:aws:states:::sqs:sendMessage.waitForTaskToken`

- Important: Only available in Standard workflows

| Pattern | Workflow waits? | Who signals completion? | Express support |

|---|---|---|---|

| Request-Response | No | N/A (immediate) | Yes |

| .sync | Yes (managed polling) | Step Functions polls the service | No |

| .waitForTaskToken | Yes (indefinite pause) | External caller via SendTaskSuccess/Failure | No |

The .sync and .waitForTaskToken restrictions on Express workflows are a key architectural constraint. If your workflow needs to wait for a Glue job, an ECS task, or a human approval, you must use a Standard workflow for at least that portion of the orchestration.
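To show the callback pattern end to end, here is a sketch of both halves: the Task state that pauses on a task token, and the worker-side code that resumes the execution. The queue URL, field names, and state names are placeholders. `resolve_approval` expects a client shaped like `boto3.client("stepfunctions")`, whose `send_task_success` and `send_task_failure` methods it calls; passing the client in keeps the sketch runnable without AWS credentials.

```python
import json

# Workflow side: send a message carrying the task token, then pause.
approval_state = {
    "Type": "Task",
    "Resource": "arn:aws:states:::sqs:sendMessage.waitForTaskToken",
    "Parameters": {
        "QueueUrl": "https://sqs.us-east-1.amazonaws.com/123456789012/approvals",  # placeholder
        "MessageBody": {
            "orderId.$": "$.order.id",
            "taskToken.$": "$$.Task.Token",
        },
    },
    "TimeoutSeconds": 86400,  # assumption: give up after one day without a callback
    "ResultPath": "$.approval",
    "Next": "FulfilOrder",
}


def resolve_approval(sfn_client, message_body: str, approved: bool) -> None:
    """Worker side: resume the paused execution using the token from the message."""
    token = json.loads(message_body)["taskToken"]
    if approved:
        sfn_client.send_task_success(
            taskToken=token,
            output=json.dumps({"approved": True}),
        )
    else:
        sfn_client.send_task_failure(
            taskToken=token,
            error="ApprovalRejected",   # hypothetical custom error name
            cause="Reviewer declined the request",
        )
```

The `error` value passed to `send_task_failure` surfaces in the workflow as an error name, so a Catch block on the state can match it like any other custom error.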

Error Handling

Declarative error handling is why I reach for Step Functions over manual orchestration every time. Retry and Catch blocks give you sophisticated error recovery without procedural error-handling code.

Error Types

| Error | Source | When It Occurs |
|---|---|---|
| `States.ALL` | Catch-all | Matches any error (wildcard) |
| `States.TaskFailed` | Step Functions | A Task state failed for any reason |
| `States.Timeout` | Step Functions | A state exceeded its TimeoutSeconds or HeartbeatSeconds |
| `States.HeartbeatTimeout` | Step Functions | A task failed to send a heartbeat within HeartbeatSeconds |
| `States.Permissions` | Step Functions | Insufficient IAM permissions for the task |
| `States.ResultPathMatchFailure` | Step Functions | ResultPath could not be applied to the state input |
| `States.ParameterPathFailure` | Step Functions | A reference path in Parameters did not match the input |
| `States.BranchFailed` | Step Functions | A branch in a Parallel or Map state failed |
| `States.NoChoiceMatched` | Step Functions | No condition in a Choice state matched and no Default specified |
| `States.IntrinsicFailure` | Step Functions | An intrinsic function call failed |
| `States.ExceedToleratedFailureThreshold` | Step Functions | A Map state exceeded its tolerated failure threshold |
| `States.ItemReaderFailed` | Step Functions | A Distributed Map could not read items from S3 |
| `Lambda.ServiceException` | Lambda service | Lambda service error (5xx) |
| `Lambda.SdkClientException` | Lambda SDK | SDK client-side error |
| `Lambda.TooManyRequestsException` | Lambda | Lambda throttling (429) |
| Custom errors | Your code | Thrown by your Lambda function or returned by your service |

Retry Configuration

Every Task, Parallel, and Map state can define Retry policies with exponential backoff:

| Retry Parameter | Purpose | Default |

|---|---|---|

| ErrorEquals | List of error names to match | Required |

| IntervalSeconds | Initial delay before first retry | 1 |

| MaxAttempts | Maximum number of retries (0 disables retry) | 3 |

| BackoffRate | Multiplier applied to delay after each retry | 2.0 |

| MaxDelaySeconds | Cap on the retry delay after exponential backoff | None (unbounded) |

| JitterStrategy | Add randomness to prevent thundering herd ("FULL" or "NONE") | "NONE" |

With IntervalSeconds: 2, MaxAttempts: 4, and BackoffRate: 2.0, the retry sequence is: fail, wait ~2s, retry, fail, wait ~4s, retry, fail, wait ~8s, retry, fail, wait ~16s, retry, and if that fourth retry also fails, fall through to Catch. With JitterStrategy: "FULL", each delay is randomized between 0 and the calculated value, which prevents a thundering herd when multiple executions retry against the same downstream service simultaneously.
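The backoff arithmetic is simple enough to check in a few lines of Python. This sketch computes the wait before each retry from the parameters in the table above. The jitter branch follows this article's description (a uniform draw between 0 and the computed delay); the service's exact jitter algorithm is not something I can vouch for, so treat that part as an approximation.

```python
import random


def retry_delays(interval_seconds=1, max_attempts=3, backoff_rate=2.0,
                 max_delay_seconds=None, jitter="NONE", rng=random.random):
    """Return the wait (in seconds) before each retry attempt, in order."""
    delays = []
    for attempt in range(max_attempts):
        # Exponential backoff: interval * rate^attempt.
        delay = interval_seconds * backoff_rate ** attempt
        if max_delay_seconds is not None:
            delay = min(delay, max_delay_seconds)
        if jitter == "FULL":
            # Approximation: pick a point uniformly between 0 and the computed delay.
            delay = rng() * delay
        delays.append(delay)
    return delays


if __name__ == "__main__":
    print(retry_delays(interval_seconds=2, max_attempts=4, backoff_rate=2.0))
    print(retry_delays(interval_seconds=2, max_attempts=4, backoff_rate=2.0, max_delay_seconds=10))
    print(sum(retry_delays(interval_seconds=2, max_attempts=4)), "seconds of waiting in the worst case")
```

Summing the list gives the worst-case time a state spends waiting between attempts, which is worth comparing against any timeout the caller of the workflow is subject to.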

Retry blocks are evaluated in order; the first matching ErrorEquals handles the error. I put specific error handlers (Lambda throttling, service exceptions) before States.ALL so transient errors get more retry attempts than unknown errors. This ordering has saved me from countless false alarms in production.

Catch Blocks and Fallback States

When retries are exhausted or an error is not retried, Catch blocks route the execution to a fallback state:

    "Catch": [
      {
        "ErrorEquals": ["States.TaskFailed"],
        "Next": "HandleTaskFailure",
        "ResultPath": "$.error"
      },
      {
        "ErrorEquals": ["States.ALL"],
        "Next": "HandleUnknownError",
        "ResultPath": "$.error"
      }
    ]

The ResultPath in a Catch block is critical. By setting "ResultPath": "$.error", the error information (error name and cause) is added to the original state input at the $.error path. The fallback state receives both the original context and the error details, which is essential for implementing compensating transactions, sending meaningful failure notifications, or routing to alternative processing paths.

Skip ResultPath in the Catch block and the entire state output gets replaced with error information. Your fallback state loses every bit of context it needs to handle the error. I learned this the hard way on a payment processing workflow.

In every production workflow I build, every Task state has both Retry and Catch blocks. No exceptions. Retries handle transient failures automatically. Catch blocks handle persistent failures by routing to compensation, notification, or cleanup logic. A workflow without Catch blocks on every Task state will eventually fail with an unhandled error, and your only recovery option is manual intervention or redrive. Neither is fun at 2 AM.
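A rule like this is easy to enforce mechanically. The following sketch is a small lint check, written in Python, that walks an ASL definition (already parsed into a dict) and reports Task states lacking either block. It recurses into Parallel `Branches` and Map `ItemProcessor` sub-workflows; the function name and the path format in its output are my own conventions, and it only checks that the fields are present, not that they are sensible.

```python
def tasks_missing_handlers(definition: dict, prefix: str = "") -> list:
    """List Task states that lack a Retry or a Catch field, including nested ones."""
    missing = []
    for name, state in definition["States"].items():
        path = prefix + name
        if state["Type"] == "Task" and not ("Retry" in state and "Catch" in state):
            missing.append(path)
        # Parallel states hold a list of sub-workflows.
        for index, branch in enumerate(state.get("Branches", [])):
            missing += tasks_missing_handlers(branch, f"{path}/branch{index}/")
        # Map states hold a single sub-workflow.
        if "ItemProcessor" in state:
            missing += tasks_missing_handlers(state["ItemProcessor"], f"{path}/item/")
    return missing


if __name__ == "__main__":
    definition = {
        "StartAt": "Charge",
        "States": {
            "Charge": {"Type": "Task", "Resource": "arn:aws:states:::lambda:invoke", "Next": "Done"},
            "Done": {"Type": "Succeed"},
        },
    }
    for state_path in tasks_missing_handlers(definition):
        print(f"Task state without Retry and Catch: {state_path}")
```

Running a check like this in CI against the synthesized definition catches the unguarded state before it ships rather than during an incident.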

Heartbeat Timeouts

For long-running tasks (ECS tasks, SageMaker training jobs, callback-based tasks), HeartbeatSeconds requires the task to send periodic heartbeat signals via SendTaskHeartbeat. If no heartbeat is received within the interval, the task fails immediately with a States.HeartbeatTimeout error.

Without a heartbeat, a task that hangs (waiting for a resource, stuck in an infinite loop, crashed silently) goes undetected until the overall TimeoutSeconds expires. That could be hours. Days, even. With a 60-second heartbeat interval, a stuck task is detected within 60 seconds and retry or catch logic fires immediately.

Parallel and Map States

Parallel and Map states provide two different models for concurrent execution within a workflow.

Parallel State

The Parallel state executes multiple branches concurrently. Each branch is an independent sub-workflow (a chain of states), and all branches must complete before the Parallel state transitions to the next state. The output is an array containing the output of each branch, in the order the branches are defined.

Key architectural details:

- All branches start simultaneously. There is no dependency ordering between branches.

- The Parallel state fails if any branch fails (unless the error is caught by a Catch block on the Parallel state).

- Each branch receives the same input. The Parallel state's effective input is passed to every branch.

- The output is always an array. Even with two branches, the output is [branch1Output, branch2Output].

- State transitions within all branches count toward the 25,000 history event limit for the parent execution.

Parallel is the fan-out/fan-in primitive in Step Functions. Use it when you have a fixed, known set of independent tasks to execute concurrently: validate an order AND check inventory AND verify payment simultaneously, then merge the results.
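The order-checking example can be sketched as a Parallel state with three single-state branches. The function names and the RejectOrder and MergeResults states are assumptions made up for this illustration.

```python
import json


def lambda_branch(function_name: str) -> dict:
    """One-state branch that invokes a (hypothetical) Lambda function."""
    return {
        "StartAt": function_name,
        "States": {
            function_name: {
                "Type": "Task",
                "Resource": "arn:aws:states:::lambda:invoke",
                "Parameters": {"FunctionName": function_name, "Payload.$": "$"},
                "OutputPath": "$.Payload",  # keep only the function's return value
                "End": True,
            }
        },
    }


check_order = {
    "Type": "Parallel",
    # Every branch receives the same input and starts at the same time.
    "Branches": [
        lambda_branch("ValidateOrder"),
        lambda_branch("CheckInventory"),
        lambda_branch("VerifyPayment"),
    ],
    # Output is an array in branch order: [validate, inventory, payment].
    "ResultPath": "$.checks",
    "Catch": [
        {"ErrorEquals": ["States.ALL"], "ResultPath": "$.error", "Next": "RejectOrder"}
    ],
    "Next": "MergeResults",
}

print(json.dumps(check_order, indent=2))
```

Writing the array to $.checks rather than replacing the whole state keeps the original order available to the merge step.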

Map State (Inline Mode)

The inline Map state iterates over a collection in the state input, executing a set of states for each item within the parent execution.

| Map Parameter | Purpose | Default |

|---|---|---|

| ItemsPath | JSONPath to the array in the input | $ |

| MaxConcurrency | Maximum parallel iterations | 0 (unlimited) |

| ItemSelector | Transform each item before processing | None |

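| ToleratedFailureCount | Number of failed items before the Map fails (Distributed Map only; an inline Map fails on the first uncaught iteration failure) | 0 |

| ToleratedFailurePercentage | Percentage of failed items before the Map fails (Distributed Map only) | 0 |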

MaxConcurrency is a critical control. Setting it to 0 removes the explicit cap, so Step Functions runs iterations as concurrently as it can (in inline mode that tops out at about 40 at a time), which can overwhelm downstream services. For a Map state iterating over 1,000 items with each item invoking a Lambda function, every execution holds up to 40 concurrent Lambda invocations until the items drain, and 25 such executions running at once add up to 1,000. If your account's Lambda concurrency limit is 1,000 (the default), that one workflow has consumed all of it. I recommend setting MaxConcurrency to a value that respects downstream service limits, typically 10-40 for Lambda-backed iterations.
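A minimal sketch of an inline Map state with an explicit concurrency cap, again as a Python dict. The input shape ($.order.lineItems), the price-item function, and the ComputeTotal state are assumptions for illustration.

```python
import json

process_line_items = {
    "Type": "Map",
    "ItemsPath": "$.order.lineItems",
    # Cap parallel iterations so downstream Lambda concurrency stays bounded.
    "MaxConcurrency": 10,
    # Reshape each item before the iteration sees it.
    "ItemSelector": {
        "orderId.$": "$.order.id",
        "item.$": "$$.Map.Item.Value",
        "index.$": "$$.Map.Item.Index",
    },
    "ItemProcessor": {
        "ProcessorConfig": {"Mode": "INLINE"},
        "StartAt": "PriceItem",
        "States": {
            "PriceItem": {
                "Type": "Task",
                "Resource": "arn:aws:states:::lambda:invoke",
                "Parameters": {"FunctionName": "price-item", "Payload.$": "$"},
                "OutputPath": "$.Payload",
                "End": True,
            }
        },
    },
    "ResultPath": "$.pricedItems",
    "Next": "ComputeTotal",
}

print(json.dumps(process_line_items, indent=2))
```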

Inline Map vs. Distributed Map

| Characteristic | Inline Map | Distributed Map |

|---|---|---|

| Maximum concurrency | 40 | 10,000 |

| Maximum items | Limited by execution history (25,000 events) | Unlimited (millions) |

| Item source | Array in state input (256 KB payload limit) | S3 objects (JSON, CSV, S3 inventory) or state input |

| Child execution | Runs within parent execution | Spawns child executions (Standard or Express) |

| Execution history | Part of parent execution's 25,000 limit | Each child has its own 25,000 limit |

| Result handling | In-memory, part of parent state output | Optional export to S3 via ResultWriter |

| ItemBatcher | Not supported | Supported (batch items for processing) |

| Failure tolerance | Not supported (an uncaught iteration failure fails the Map state) | ToleratedFailureCount/Percentage supported |

Distributed Map

Distributed Map changed what Step Functions is. Before Distributed Map, Step Functions was a workflow orchestration tool. After it, Step Functions became a massively parallel batch processing engine that competes with purpose-built data processing services for a surprising number of workloads.

How It Works

When a Distributed Map state executes, the runtime:

- Reads items from the configured source: an S3 object (JSON array, CSV, JSON Lines), an S3 inventory manifest, or an array in the state input.

- Batches items (optional). If an ItemBatcher is configured, items are grouped into batches. Each batch is passed as an array to a single child execution, amortizing invocation overhead.

- Dispatches child executions. Step Functions starts a child workflow execution for each item or batch, up to the configured MaxConcurrency (maximum 10,000 concurrent).

- Manages concurrency. As child executions complete, new ones are dispatched until all items are processed.

- Collects results. Outputs from child executions are optionally written to S3 (via ResultWriter) or collected in the parent execution output (subject to the 256 KB limit).

Configuration Reference

| Parameter | Purpose | Recommendation |

|---|---|---|

| MaxConcurrency | Maximum parallel child executions | Start at 100, increase based on downstream capacity |

| ItemBatcher.MaxItemsPerBatch | Items per child execution | 10-100 for Lambda backends (amortize invocation overhead) |

| ItemBatcher.MaxInputBytesPerBatch | Maximum batch size in bytes | Stay under the 256 KB Step Functions payload limit |

| ToleratedFailureCount | Absolute number of failures allowed | Set based on acceptable data loss |

| ToleratedFailurePercentage | Percentage of failures allowed | 1-5% for best-effort batch processing |

| ResultWriter | S3 destination for child execution results | Always configure for large-scale jobs (avoids 256 KB output limit) |

| Child execution type | Standard or Express | Express for short tasks (much cheaper); Standard for tasks needing durability |

| ItemReader.Resource | Source type (S3 object, S3 inventory) | S3 for datasets larger than 256 KB |
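Pulling those parameters together, a Distributed Map definition might look roughly like the sketch below. Bucket names, the object key, the transform-batch function, and the specific numbers are hypothetical; treat it as a starting shape rather than a tuned configuration.

```python
import json
import math

distributed_map = {
    "Type": "Map",
    "ItemReader": {
        "Resource": "arn:aws:states:::s3:getObject",
        "ReaderConfig": {"InputType": "CSV", "CSVHeaderLocation": "FIRST_ROW"},
        "Parameters": {"Bucket": "example-input-bucket", "Key": "records/input.csv"},
    },
    "ItemBatcher": {"MaxItemsPerBatch": 100},
    "MaxConcurrency": 100,
    "ToleratedFailurePercentage": 1,
    "ItemProcessor": {
        # Each batch runs as its own Express child execution.
        "ProcessorConfig": {"Mode": "DISTRIBUTED", "ExecutionType": "EXPRESS"},
        "StartAt": "TransformBatch",
        "States": {
            "TransformBatch": {
                "Type": "Task",
                "Resource": "arn:aws:states:::lambda:invoke",
                "Parameters": {"FunctionName": "transform-batch", "Payload.$": "$"},
                "End": True,
            }
        },
    },
    # Write child results to S3 instead of the parent's 256 KB output.
    "ResultWriter": {
        "Resource": "arn:aws:states:::s3:putObject",
        "Parameters": {"Bucket": "example-output-bucket", "Prefix": "results/"},
    },
    "End": True,
}


def child_executions(total_items: int, max_items_per_batch: int) -> int:
    """Number of child executions the batcher produces for a given item count."""
    return math.ceil(total_items / max_items_per_batch)


print(json.dumps(distributed_map, indent=2))
print(child_executions(10_000_000, 100))
```

The helper makes the batching effect concrete: 10 million rows at 100 items per batch means 100,000 child executions instead of 10 million.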

S3 as Item Source

The ItemReader configuration allows Distributed Map to read input directly from S3, which is critical for batch processing where the dataset exceeds the 256 KB payload limit:

- JSON array in S3: The runtime reads a JSON file and iterates over the array elements

- CSV in S3: The runtime reads a CSV file, optionally using a header row for field names, and iterates over rows

- JSON Lines in S3: Each line is treated as a separate item

- S3 object listing or inventory: The runtime iterates over the objects under an S3 prefix or the objects listed in an S3 inventory manifest, enabling processing of every object in a bucket (image thumbnailing, format conversion, metadata extraction)

Use Cases

- Large-scale ETL. Process millions of records from S3: read a CSV with 10 million rows, batch into groups of 100, transform each batch in Lambda, write results to a destination.

- S3 object processing. Use S3 inventory to process every object in a bucket: image resizing, video transcoding, metadata extraction, format conversion.

- Data validation. Validate millions of records against business rules, collecting errors for review with tolerated failure percentage.

- Monte Carlo simulations. Fan out thousands of independent simulations, collect results to S3, aggregate in a post-processing step.

- Backfill operations. Reprocess historical data by reading from S3 and applying updated business logic to each record.

Cost Comparison with Alternatives

| Approach | Concurrency | Error Handling | State Tracking | Approximate Cost per 1M Items |

|---|---|---|---|---|

| Distributed Map (Express children) | Up to 10,000 | Built-in retry, catch, tolerance | Full per-child history | ~$1-5 |

| Distributed Map (Standard children) | Up to 10,000 | Built-in retry, catch, tolerance | Full per-child history | ~$25-50 |

| SQS + Lambda | Up to reserved concurrency | DLQ, visibility timeout retry | None (build your own) | ~$1-3 |

| Lambda fan-out | Up to reserved concurrency | Manual (DLQ, custom logic) | None (build your own) | ~$2-5 |

| Glue Spark job | Worker-based (DPUs) | Spark retry semantics | Glue job metrics | ~$15-50 |

Distributed Map with Express child executions hits a sweet spot for embarrassingly parallel workloads. You get orchestration, error handling, state tracking, and failure tolerance out of the box. Compare that to the weeks of engineering it takes to build equivalent reliability with custom SQS-and-Lambda solutions.

Activity Tasks and Callback Patterns

Activity tasks and the .waitForTaskToken pattern let Step Functions reach outside its own execution engine: external systems, human approvers, on-premises workers, long-running processes that cannot fit into a request-response model.

Activity Tasks

An Activity is a Step Functions resource that represents work performed by an external worker. The interaction model is pull-based:

- You create an Activity resource and reference it in a Task state

- When the state machine reaches the Activity Task state, it pauses

- An external worker polls for tasks using GetActivityTask

- Step Functions returns the task input and a unique task token

- The worker processes the task and calls SendTaskSuccess (with output) or SendTaskFailure (with error)

- The state machine resumes with the result

Activities are appropriate when the worker is a long-running process (an EC2 instance, an on-premises server, a container running in your data center) rather than a serverless function invoked by Step Functions. The worker pulls work from Step Functions rather than being invoked by it.

Heartbeat timeouts are essential for Activity tasks. Without a heartbeat, if the worker crashes mid-processing, the state machine waits until the overall task timeout (which could be hours or days for a Standard workflow) before failing. With HeartbeatSeconds, the worker must periodically call SendTaskHeartbeat. If the heartbeat is missed, Step Functions fails the task immediately with States.HeartbeatTimeout, allowing retry or catch logic to execute.
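A worker loop along these lines can be sketched as follows. The sfn argument is assumed to be a boto3 Step Functions client (or any object exposing the same four methods), and handler, the worker name, and the 20-second interval are hypothetical. The interval should sit comfortably below the state's HeartbeatSeconds.

```python
import json
import threading


def run_one_task(sfn, activity_arn, handler, heartbeat_every=20.0):
    """Poll once, process one task, and heartbeat while the handler runs.

    Returns False when the long poll came back without work.
    """
    task = sfn.get_activity_task(activityArn=activity_arn, workerName="worker-1")
    token = task.get("taskToken")
    if not token:
        return False

    done = threading.Event()

    def beat():
        # Event.wait returns False on timeout and True once `done` is set.
        while not done.wait(heartbeat_every):
            sfn.send_task_heartbeat(taskToken=token)

    beater = threading.Thread(target=beat, daemon=True)
    beater.start()
    try:
        result = handler(json.loads(task["input"]))
        sfn.send_task_success(taskToken=token, output=json.dumps(result))
    except Exception as exc:
        sfn.send_task_failure(
            taskToken=token, error=type(exc).__name__, cause=str(exc)
        )
    finally:
        done.set()
        beater.join()
    return True
```

Running the heartbeat on a separate thread means a handler that blocks on I/O still reports liveness, while a crashed process stops heartbeating and lets States.HeartbeatTimeout fire.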

The .waitForTaskToken Pattern

The .waitForTaskToken pattern is more flexible than Activity tasks and works with any service that can receive a task token and eventually call back to Step Functions.

| Pattern | Token Delivery | Callback Mechanism | Use Case |

|---|---|---|---|

| Human approval | SQS message or SNS notification with token | Approver's web app calls SendTaskSuccess | Order approval, expense authorization, content review |

| External API | Lambda sends token to third-party system | External system webhooks back to Step Functions | Partner integration, third-party processing |

| Long-running container | ECS task receives token as environment variable | Container calls SendTaskSuccess on completion | ML training, video encoding, large file processing |

| Cross-account coordination | SNS publishes token to another account | Other account's workflow calls SendTaskSuccess | Multi-account pipeline orchestration |

| Event-driven callback | EventBridge event with token | Subscriber processes and calls back | Asynchronous event processing with guaranteed completion |

The task token is a unique, opaque string generated by Step Functions for each execution of a .waitForTaskToken state. It must be stored securely and used exactly once. Calling SendTaskSuccess or SendTaskFailure with an expired or already-used token results in an error.

Implementation best practices:

- Store task tokens durably. Persist tokens in DynamoDB with a TTL matching the task timeout. If the callback application restarts or loses in-memory state, the token must survive.

- Always set TimeoutSeconds. Without it, the workflow waits indefinitely (up to the 1-year Standard workflow maximum) for a callback that may never come. A reasonable timeout with a Catch block that routes to escalation or notification is far better than an execution that hangs forever.

- Include context in the token delivery. The message sent to the external system should include not just the token but also what is being requested, why, and any data needed for the decision. The external system should not need to call back to Step Functions just to understand the request.
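The practices above can be sketched as a single callback Task state. The queue URL, the expense fields, and the Escalate and RouteOnDecision states are hypothetical names chosen for the example.

```python
import json

request_approval = {
    "Type": "Task",
    "Resource": "arn:aws:states:::sqs:sendMessage.waitForTaskToken",
    "Parameters": {
        "QueueUrl": "https://sqs.us-east-1.amazonaws.com/123456789012/approvals",
        "MessageBody": {
            # The context object supplies the token for this state entry.
            "taskToken.$": "$$.Task.Token",
            # Enough context that the approver never has to call back for details.
            "request": "Approve expense report",
            "expenseId.$": "$.expense.id",
            "amount.$": "$.expense.amount",
        },
    },
    # Approval deadline: 24 hours, then route to escalation.
    "TimeoutSeconds": 86400,
    "Catch": [
        {"ErrorEquals": ["States.Timeout"], "ResultPath": "$.error", "Next": "Escalate"}
    ],
    "ResultPath": "$.approval",
    "Next": "RouteOnDecision",
}

print(json.dumps(request_approval, indent=2))
```

The consumer of that queue is the component that should persist the token durably before doing anything else with the message.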

Observability

Step Functions has better built-in observability than most AWS services. The tooling is genuinely good, but you need to know what to look at and when.

CloudWatch Metrics

| Metric | Meaning | Alert Guidance |

|---|---|---|

| ExecutionsStarted | Number of executions started | Monitor for unexpected spikes or drops |

| ExecutionsSucceeded | Successful completions | Track success rate (Succeeded / Started) |

| ExecutionsFailed | Failed executions | Alert on any non-zero value for critical workflows |

| ExecutionsTimedOut | Executions that hit their timeout | Usually indicates a downstream problem |

| ExecutionsAborted | Manually or programmatically aborted | Track for unexpected aborts |

| ExecutionThrottled | Executions throttled by service quotas | Alert immediately: you are hitting limits |

| ExecutionTime | Duration from start to completion | Track P50, P95, P99 for SLA monitoring |

| LambdaFunctionsStarted | Lambda invocations from Step Functions | Correlate with Lambda concurrency |

| LambdaFunctionsTimedOut | Lambda timeouts within workflows | Lambda timeout mismatched with expectations |

| LambdaFunctionsFailed | Failed Lambda invocations | Identify unreliable functions |

| ServiceIntegrationsFailed | Non-Lambda integration failures | DynamoDB throttling, SQS errors, etc. |

Execution Event History

Standard workflow executions maintain a detailed, immutable event history. Every state entry, exit, task schedule, task start, task success, task failure, retry, and catch: each recorded as a distinct event. Queryable via GetExecutionHistory and visible in the console.

The execution event history caps at 25,000 events per execution. Hard limit. Each state transition generates multiple events (StateEntered, TaskScheduled, TaskStarted, TaskSucceeded/Failed, StateExited), so a simple Task state eats 5-6 events. Retries consume more. A Map state iterating over 100 items with 3 states per iteration and 5 events per state chews through roughly 1,500 events. Do the math before you ship.
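That math is easy to script. The sketch below assumes a flat 5 events per state and ignores retries and the Map state's own bookkeeping events, so treat the result as a lower bound.

```python
HISTORY_LIMIT = 25_000  # events per Standard execution


def inline_map_events(items: int, states_per_item: int, events_per_state: int = 5) -> int:
    """Rough event count for an inline Map, ignoring retries."""
    return items * states_per_item * events_per_state


def budget_used(events: int) -> float:
    """Fraction of the execution history limit consumed."""
    return events / HISTORY_LIMIT


events = inline_map_events(items=100, states_per_item=3)
print(events, f"{budget_used(events):.0%}")
```

For the 100-item example this comes out to 1,500 events, about 6% of the budget before any retries.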

X-Ray Tracing

Step Functions integrates with AWS X-Ray for distributed tracing across state machine executions and the services they invoke. When tracing is enabled:

- Each execution generates a trace showing time spent in each state

- Latency of each service integration call is visible

- Trace propagation into Lambda functions, DynamoDB, and other X-Ray-enabled services provides end-to-end visibility

- Error locations and durations are immediately apparent

When a workflow takes longer than expected, X-Ray traces immediately show whether the time is burning in Step Functions scheduler overhead, Lambda cold starts, DynamoDB throttling, or network latency. Enable X-Ray on both the state machine and every Lambda function it invokes. Tracing on only one side gives you half the picture, which is worse than useless because it misleads.

Step Functions Console

The visual execution inspector in the Step Functions console is, in my opinion, one of the best debugging tools anywhere in AWS. For each Standard workflow execution:

- The workflow graph shows each state colored by status (green for success, red for failure, blue for in-progress, gray for not yet reached)

- Clicking any state reveals its exact input, output, error details, and retry history

- The execution timeline shows wall-clock time spent in each state

- The event history provides a complete, chronological log of every transition

This inspector has saved me hours of log analysis more times than I can count. Customer reports a failed order. I look up the execution by ID, see which state failed, examine its input and error. Root cause identified in seconds.

CloudWatch Logs for Express Workflows

Since Express workflows do not have persistent execution history, CloudWatch Logs is the primary observability mechanism:

| Log Level | What Is Logged | Cost Impact |

|---|---|---|

| ALL | Every state transition, input, output, error | High (generates massive log volume for high-throughput workflows) |

| ERROR | Only failed executions | Moderate |

| FATAL | Only executions that fail due to runtime errors | Low |

| OFF | No logging | None |

I recommend ERROR for production Express workflows. ALL generates enormous volume at high throughput and can itself become a significant cost driver, sometimes exceeding the Step Functions execution cost. Use ALL only during development and targeted debugging.

Cost Analysis

Step Functions pricing between Standard and Express workflows can differ by two orders of magnitude. I have seen teams burn through five figures of monthly spend because they defaulted to Standard for a high-volume event processing pipeline.

Standard Workflow Pricing

Standard workflows are priced per state transition: $0.025 per 1,000 state transitions. The first 4,000 state transitions per month are free (permanent free tier).

A state transition is counted each time the execution enters a state. Retries count as additional state transitions. Each iteration of a Map state counts as state transitions for every state in the iterator.

| Workflow Scenario | States per Execution | Executions/Month | Monthly Cost |

|---|---|---|---|

| Simple 5-step pipeline | 5 | 10,000 | $1.25 |

| 20-step order processing | 20 | 100,000 | $50.00 |

| 50-step data pipeline | 50 | 50,000 | $62.50 |

| 10-step with inline Map (100 items, 5 states each) | 510 | 10,000 | $127.50 |

| 10-step at API scale | 10 | 10,000,000 | $2,500.00 |

The Map state cost trap is visible in the fourth example. A Map state iterating over 100 items with 5 states per iteration contributes 500 state transitions per execution. At volume, this dominates the cost.

Express Workflow Pricing

Express workflows are priced per request plus duration:

| Component | Price |

|---|---|

| Requests | $1.00 per 1,000,000 executions |

| Duration | $0.00001667 per GB-second (64 MB minimum billing increment) |

| Workflow Scenario | Duration | Memory | Executions/Month | Monthly Cost |

|---|---|---|---|---|

| 1-second microservice orchestration | 1s | 64 MB | 1,000,000 | ~$2.04 |

| 3-second data transformation | 3s | 64 MB | 1,000,000 | ~$4.13 |

| 200ms API composition | 200ms | 64 MB | 10,000,000 | ~$12.08 |

| 500ms event enrichment | 500ms | 64 MB | 100,000,000 | ~$152.09 |
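Both pricing models reduce to a few lines of arithmetic. This sketch uses the list prices quoted above and deliberately ignores the free tier, volume discounts, and the rounding of billed duration and memory, so it is an estimate, not a bill.

```python
STANDARD_PER_TRANSITION = 0.025 / 1_000       # USD per state transition
EXPRESS_PER_REQUEST = 1.00 / 1_000_000        # USD per execution
EXPRESS_PER_GB_SECOND = 0.00001667            # USD per GB-second


def standard_cost(transitions_per_execution: int, executions: int) -> float:
    """Monthly Standard cost: every state entered is one billed transition."""
    return transitions_per_execution * executions * STANDARD_PER_TRANSITION


def express_cost(duration_seconds: float, executions: int, memory_mb: int = 64) -> float:
    """Monthly Express cost: request charge plus duration charge."""
    gb_seconds = duration_seconds * executions * (memory_mb / 1024)
    return executions * EXPRESS_PER_REQUEST + gb_seconds * EXPRESS_PER_GB_SECOND


print(f"${standard_cost(5, 10_000):.2f}")        # simple 5-step pipeline
print(f"${express_cost(1.0, 1_000_000):.2f}")    # 1-second orchestration at 64 MB
```

Plugging in your own transition counts, durations, and volumes before choosing a workflow type takes a minute and tends to settle the argument.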

Standard vs. Express Cost Comparison

| Scenario | Standard Cost | Express Cost (64 MB) | Cost Ratio |

|---|---|---|---|

| 1M executions/month, 10 states, 2s duration | $250 | ~$3.08 | ~81x |

| 10M executions/month, 5 states, 500ms | $1,250 | ~$15.21 | ~82x |

| 100K executions/month, 20 states, 30s | $50 | ~$3.23 | ~15x |

| 10K executions/month, 50 states, 5 min | $12.50 | ~$3.14 | ~4x |

The numbers speak for themselves. For high-volume, short-duration workflows, Express is cheaper by roughly 80x. The gap narrows at low volume with many states and long durations, where Express duration charges grow and Standard's per-transition cost becomes a smaller fraction of total infrastructure spend.

Cost Optimization Strategies

| Strategy | Impact | When to Apply |

|---|---|---|

| Use Express for high-volume, short workflows | 10-250x cost reduction vs Standard | Workflows under 5 min with idempotent operations |

| Batch items in Distributed Map | Reduces child execution count proportionally | Processing large item sets (batch 100 items = 100x fewer children) |

| Use direct service integrations | Eliminates Lambda invocation cost per step | Simple DynamoDB reads/writes, SQS sends, SNS publishes |

| Combine Pass states | Fewer state transitions | Multiple consecutive data transformations |

| Use intrinsic functions | Eliminates Lambda for data transformation | String formatting, array operations, JSON manipulation |

| Use Express children in Distributed Map | 10-50x cheaper than Standard children | Short-lived, idempotent processing tasks |

| Nest state machines | No cost reduction, but manages complexity | Break monolithic workflows into composable units |

| Set explicit timeouts | Prevents runaway cost from stuck executions | Every Task state, every state machine |

Common Failure Modes

State Machine Definition Size Limit (1 MB)

State machine definitions are limited to 1 MB. Sounds generous. Then you build a deeply nested Distributed Map with complex child workflows, extensive error handling on every state, and detailed Parameters blocks. Suddenly you are at 800 KB and adding one more branch pushes you over.

Mitigation: Extract child workflows into separate state machines and invoke them via nested execution. This also improves maintainability and testability. Nobody wants to review a 500-line monolithic ASL definition.

Execution History Limit (25,000 Events)

Each Standard workflow execution is limited to 25,000 history events. Exceeding this limit causes the execution to fail with a States.Runtime error. A simple Task state consumes approximately 5 events. An inline Map with 1,000 iterations and 3 states per iteration consumes approximately 15,000 events, more than half the budget.

Mitigation: Use Distributed Map instead of inline Map for collections larger than a few dozen items. Use Express sub-workflows for high-iteration processing. Use the "continue-as-new" pattern for long-running workflows: start a new execution with the current state as input before approaching the limit.

Payload Size Limit (256 KB)

The maximum payload between states is 256 KB. State input, state output, everything passed between states. Workflows that accumulate results (Map outputs growing with each iteration, Parallel branches aggregating) slam into this limit faster than anyone expects.

Mitigation: Store large data in S3 or DynamoDB and pass only references (bucket/key, table/key) between states. Use ResultSelector to trim verbose service responses. For Distributed Map, always configure ResultWriter to write outputs to S3 rather than aggregating them in the parent execution.

Express Workflow Duration Limit (5 Minutes)

Express workflows fail immediately if they exceed 5 minutes. Hard constraint. No override. No exception. No amount of support tickets will change it.

Mitigation: If your workflow occasionally exceeds 5 minutes due to variable processing times, use Standard. If only specific branches exceed 5 minutes, use a Standard parent that invokes Express children for the fast paths.

State Transition Throttling

Standard workflows have a default limit of 4,000 state transitions per second per account per region. High-volume Standard workflows with many states per execution can hit this limit, causing ExecutionThrottled events.

Mitigation: Request a limit increase proactively through AWS Support. Use Express workflows for high-throughput use cases. Monitor the ExecutionThrottled metric and alert on any non-zero value.

Lambda Cold Start Accumulation

Step Functions does not pre-warm Lambda functions. Every Lambda invocation faces standard cold start behavior. Ten sequential Lambda Task states in a workflow? That is 1-5 seconds of cumulative cold start latency before your business logic even runs.

Mitigation: Use provisioned concurrency on Lambda functions invoked by latency-sensitive workflows. Use direct service integrations (DynamoDB, SQS) instead of Lambda for operations that do not require compute logic. Direct integrations have no cold start.

IAM Permission Errors

Step Functions executes service integrations using an IAM execution role. If the role lacks permissions, you get a generic States.TaskFailed rather than a clear "access denied." With SDK integrations, where the required IAM actions are not always obvious, this leads to some frustrating debugging sessions.

Mitigation: Use the least-privilege IAM policy generated by CDK or the Step Functions console as a starting point. Test new integrations with verbose logging enabled. Use CloudTrail to identify the specific API calls being denied.

Patterns

Saga Pattern for Distributed Transactions

The saga pattern is the Step Functions pattern I deploy most in production. It implements distributed transactions across multiple services by defining compensating actions for each step. If step N fails, the workflow executes compensating actions for steps N-1 through 1 (in reverse order) to roll back the partial transaction.

Implementation in Step Functions:

- Each forward step is a Task state (reserve inventory, charge payment, create shipment)

- Each Task state has a Catch block that routes to a compensation chain

- The compensation chain executes compensating actions in reverse order (cancel shipment, refund payment, release inventory)

- ResultPath preserves context so that compensating actions know what to undo

Distributed two-phase commit does not work reliably in a microservices architecture. Sagas replace it with an orchestrated sequence of local transactions and compensations. Step Functions provides exactly the primitives the saga pattern requires: retry, catch, and state persistence. I have yet to find a better implementation platform for this pattern.
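One way to keep the forward chain and the compensation chain in sync is to generate both from a single list. The sketch below is an assumption about how you might structure that, not a canonical implementation: build_saga, the step names, and the trailing ConfirmOrder step are all hypothetical, and each state name doubles as a Lambda function name.

```python
import json


def task(name, next_state, catch_to=None):
    """Lambda-backed Task state; the state name doubles as the function name."""
    state = {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": name, "Payload.$": "$"},
        "ResultPath": f"$.{name}Result",  # keep earlier results for compensation
        "Next": next_state,
    }
    if catch_to:
        state["Catch"] = [
            {"ErrorEquals": ["States.ALL"], "ResultPath": "$.error", "Next": catch_to}
        ]
    return state


def undo_target(steps, i):
    """Nearest compensating state for the steps before index i, else the Fail state."""
    for _, compensate in reversed(steps[:i]):
        if compensate:
            return compensate
    return "SagaFailed"


def build_saga(steps):
    """steps: ordered (forward_state, compensating_state or None) pairs."""
    states = {
        "SagaSucceeded": {"Type": "Succeed"},
        "SagaFailed": {"Type": "Fail", "Error": "SagaRolledBack"},
    }
    for i, (forward, compensate) in enumerate(steps):
        is_last = i == len(steps) - 1
        next_forward = "SagaSucceeded" if is_last else steps[i + 1][0]
        # If step i fails it never completed, so undo starts at step i-1.
        states[forward] = task(forward, next_forward, catch_to=undo_target(steps, i))
        # The last step's compensation would be unreachable, so skip it.
        if compensate and not is_last:
            states[compensate] = task(compensate, undo_target(steps, i))
    return {"StartAt": steps[0][0], "States": states}


ORDER_SAGA = [
    ("ReserveInventory", "ReleaseInventory"),
    ("ChargePayment", "RefundPayment"),
    ("CreateShipment", "CancelShipment"),
    ("ConfirmOrder", None),
]

saga = build_saga(ORDER_SAGA)
print(json.dumps(saga, indent=2))
```

In production the compensating states need their own Retry blocks, since a failed compensation leaves the transaction half rolled back.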

Human-in-the-Loop

The .waitForTaskToken pattern enables human approval workflows:

- A Task state sends a notification (email, Slack message, web dashboard) containing the task token

- The workflow pauses, consuming no compute resources

- A human reviews the request and approves or rejects

- The approval application calls SendTaskSuccess (approve) or SendTaskFailure (reject) with the token

- The workflow resumes and routes based on the decision via a Choice state

I use this pattern for expense approvals, deployment gates, content review, compliance sign-offs. Any process requiring human judgment mid-workflow. Set HeartbeatSeconds or TimeoutSeconds to implement approval deadlines: auto-escalate or auto-reject if nobody responds within 24 hours.

Fan-Out / Fan-In

Use Parallel for a fixed set of independent tasks or Map for dynamic collections:

- A preparatory state generates or provides the work items

- Parallel or Map fans out to process items concurrently (with MaxConcurrency for Map)

- Results are collected as an array

- A post-processing state aggregates or merges the results

For large-scale fan-out (thousands to millions of items), use Distributed Map with S3 as both the item source and result destination.

Circuit Breaker

Protect downstream services from cascading failures:

- Before calling the service, read circuit state from DynamoDB (Task + Choice states)

- If circuit is "open" (too many recent failures), skip the call and return a fallback response

- If circuit is "closed," invoke the service

- On failure, increment the failure counter in DynamoDB; if threshold exceeded, set circuit to "open" with a TTL

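- DynamoDB TTL automatically "closes" the circuit after the cooldown period by deleting the item. TTL deletion can lag well behind the expiry time, so also compare the stored expiry timestamp when reading the circuit state rather than relying on the item being gone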

This pattern prevents a failing downstream service from consuming all your Step Functions execution capacity and Lambda concurrency with retries that will not succeed.
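The decision logic behind those steps is small enough to sketch as two pure functions, which could live in a Lambda function or be mirrored in Choice rules. The record shape (state, failures, expiresAt in epoch seconds), the threshold, and the cooldown are assumptions for this example.

```python
FAILURE_THRESHOLD = 5   # hypothetical: failures before the circuit opens
COOLDOWN_SECONDS = 60   # hypothetical: how long the circuit stays open


def circuit_is_open(record, now):
    """record is the circuit item read from DynamoDB (plain dict), or None."""
    if not record or record.get("state") != "open":
        return False
    # Check the expiry explicitly: an expired item may not be deleted yet.
    return record.get("expiresAt", 0) > now


def record_failure(record, now):
    """Return the item to write back after a failed downstream call."""
    failures = (record or {}).get("failures", 0) + 1
    if failures >= FAILURE_THRESHOLD:
        return {"state": "open", "failures": failures, "expiresAt": now + COOLDOWN_SECONDS}
    return {"state": "closed", "failures": failures}
```

Passing the current time in as an argument (time.time() in real use) keeps both functions deterministic and easy to unit test.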

Polling Pattern

For services that do not support the .sync integration:

- A Task state starts the asynchronous operation (returns a job ID)

- A Wait state pauses for an interval (10-60 seconds)

- A Task state checks the operation status using the job ID

- A Choice state evaluates: if complete, proceed; if still running, loop back to Wait; if failed, route to error handling

Include a maximum iteration counter tracked via ResultPath to prevent infinite loops. When the counter exceeds a threshold, the Choice state routes to a failure or timeout-handling state rather than looping indefinitely.
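A sketch of the full loop, including the counter, as a Python dict of ASL states. The start-job and check-job functions, the status values, the 30-second wait, and the 40-attempt ceiling are hypothetical.

```python
import json


def invoke(function_name, result_selector, result_path, next_state):
    """Lambda-backed Task that keeps only selected fields of the response."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": function_name, "Payload.$": "$"},
        "ResultSelector": result_selector,
        "ResultPath": result_path,
        "Next": next_state,
    }


polling_states = {
    "StartJob": invoke("start-job", {"id.$": "$.Payload.jobId"}, "$.job", "InitCounter"),
    "InitCounter": {
        "Type": "Pass",
        "Result": {"attempts": 0},
        "ResultPath": "$.poll",
        "Next": "WaitForJob",
    },
    "WaitForJob": {"Type": "Wait", "Seconds": 30, "Next": "CheckStatus"},
    "CheckStatus": invoke("check-job", {"state.$": "$.Payload.state"}, "$.status", "CountAttempt"),
    "CountAttempt": {
        "Type": "Pass",
        # Intrinsic function increments the counter without a Lambda call.
        "Parameters": {"attempts.$": "States.MathAdd($.poll.attempts, 1)"},
        "ResultPath": "$.poll",
        "Next": "Evaluate",
    },
    "Evaluate": {
        "Type": "Choice",
        "Choices": [
            {"Variable": "$.status.state", "StringEquals": "SUCCEEDED", "Next": "JobDone"},
            {"Variable": "$.status.state", "StringEquals": "FAILED", "Next": "JobFailed"},
            {"Variable": "$.poll.attempts", "NumericGreaterThanEquals": 40, "Next": "JobTimedOut"},
        ],
        "Default": "WaitForJob",  # still running: loop back
    },
    "JobDone": {"Type": "Succeed"},
    "JobFailed": {"Type": "Fail", "Error": "JobFailed"},
    "JobTimedOut": {"Type": "Fail", "Error": "PollingLimitExceeded"},
}

print(json.dumps({"StartAt": "StartJob", "States": polling_states}, indent=2))
```

Each pass through the loop costs several state transitions on a Standard workflow, so a longer Wait interval is usually the cheaper choice when latency allows.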

Step Functions + EventBridge

Event-driven orchestration combining both services:

- EventBridge rules trigger Step Functions executions based on events (S3 object created, custom application events, scheduled rules)

- Step Functions orchestrates the complex response logic

- Step Functions publishes execution status change events back to EventBridge automatically

- Downstream systems react to workflow outcomes via additional EventBridge rules

The result is a loosely coupled architecture: Step Functions handles stateful orchestration, EventBridge handles event routing and fan-out. Each does what it does best.
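For the status-change leg, a rule that reacts to unsuccessful executions could use an event pattern like the one below. The state machine ARN is a placeholder, and the matches function is a deliberately simplified local stand-in for EventBridge matching (exact values and nested objects only), included so the pattern can be sanity-checked offline.

```python
import json

STATE_MACHINE_ARN = "arn:aws:states:us-east-1:123456789012:stateMachine:order-processing"

failed_execution_pattern = {
    "source": ["aws.states"],
    "detail-type": ["Step Functions Execution Status Change"],
    "detail": {
        "status": ["FAILED", "TIMED_OUT", "ABORTED"],
        "stateMachineArn": [STATE_MACHINE_ARN],
    },
}


def matches(pattern, event):
    """Simplified matcher: nested dicts plus lists of exact allowed values."""
    for key, expected in pattern.items():
        actual = event.get(key)
        if isinstance(expected, dict):
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


sample_event = {
    "source": "aws.states",
    "detail-type": "Step Functions Execution Status Change",
    "detail": {"status": "FAILED", "stateMachineArn": STATE_MACHINE_ARN},
}

print(json.dumps(failed_execution_pattern, indent=2))
print(matches(failed_execution_pattern, sample_event))
```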

Key Architectural Patterns Summary

After years of running Step Functions in production, these are the patterns and principles I keep coming back to:

- Choose Standard vs. Express based on execution semantics, not just cost. Standard gives you exactly-once, durable execution with full history and redrive. Express gives you throughput and low cost for ephemeral processing. The architectural differences matter more than the pricing differences. But the pricing differences will bankrupt a project if you choose wrong.

- Use direct service integrations instead of Lambda wrappers. If a Task state exists solely to call DynamoDB PutItem, SQS SendMessage, or SNS Publish, replace the Lambda with a direct integration. No cold starts. Lower cost. One fewer deployment artifact to maintain.

- Respect the 256 KB payload limit from day one. Pass references (S3 keys, DynamoDB keys) between states, not full payloads. This is the single most common source of production failures in Step Functions workflows, and retrofitting a workflow to use references instead of inline data is painful.

- Set explicit timeouts on every Task state. The default timeout for a Standard workflow is 1 year. A Task state with no timeout that calls a hung service will keep the execution alive (and potentially accumulate cost) for up to a year before failing.

- Use Distributed Map for any iteration over more than a few dozen items. Inline Map is limited to 40 concurrency and shares the parent execution's 25,000-event budget. Distributed Map scales to 10,000 concurrent executions with independent event histories.

- Implement Retry with jitter and Catch on every Task state. Transient failures are the norm in distributed systems. Retry with exponential backoff and full jitter is the correct default. Catch blocks with ResultPath preserve context for error handling.

- Store task tokens durably for callback patterns. If your callback application loses the task token, the workflow hangs until timeout. Persist tokens in DynamoDB with a TTL matching the task timeout.

- Keep business logic in Lambda, orchestration logic in ASL. ASL is a coordination language, not a computation language. Complex business rules implemented in Choice states and intrinsic functions are impossible to unit test and opaque to anyone who did not write them.

- Monitor execution throttling and costs proactively. Request limit increases before you need them. Set CloudWatch alarms on ExecutionThrottled and on billing metrics. A workflow that costs $10/month during development will surprise you at $10,000/month when production traffic hits.

Additional Resources

- AWS Step Functions Developer Guide: comprehensive reference for all ASL syntax, service integrations, API operations, and configuration options

- Amazon States Language specification: formal definition of state machine syntax including all state types, error handling, and data flow processing

- AWS Step Functions Workflow Studio documentation: visual designer for building, modifying, and debugging state machine definitions

- AWS Step Functions best practices guide: AWS-published guidance on workflow design, error handling, performance, and cost optimization

- AWS Step Functions quotas and service limits: current limits including execution history size, payload size, API throttling rates, and account-level maximums

- AWS Step Functions pricing page: full pricing breakdown for Standard and Express workflows across all regions

- AWS Prescriptive Guidance, Saga pattern with Step Functions: detailed implementation guide for distributed transactions using compensating actions

- Serverless Land Step Functions patterns collection: community-contributed workflow patterns with deployable SAM and CDK examples

- AWS Architecture Blog Step Functions posts: real-world architecture case studies and patterns from AWS Solutions Architects

- AWS Well-Architected Serverless Applications Lens: comprehensive guidance for serverless application design including orchestration best practices

- AWS Step Functions Workshop: hands-on exercises progressing from core concepts through Distributed Map, callbacks, and advanced error handling