About the author: I'm Charles Sieg, a cloud architect and platform engineer who builds apps, services, and infrastructure for Fortune 1000 clients through Vantalect. If your organization is rethinking its software strategy in the age of AI-assisted engineering, let's talk.

Most teams bolt messaging onto their architecture after the first production outage caused by synchronous service-to-service calls. A payment service calls an inventory service directly, the inventory service is slow, the payment service times out, the customer gets charged twice. Suddenly everyone agrees the system needs a queue. I have spent years designing event-driven systems on AWS: order processing pipelines handling millions of transactions per day, IoT telemetry ingestion at hundreds of thousands of events per second, multi-region fan-out architectures coordinating dozens of microservices. AWS offers at least six distinct messaging and eventing services. Each solves a different problem. Choosing wrong means either overengineering a simple notification flow or discovering at 3 AM that your architecture cannot handle the throughput your business requires. This article is not a getting-started guide. It is an architecture reference for engineers who need to pick the right service, configure it correctly, and avoid the failure modes that surface only under production load.

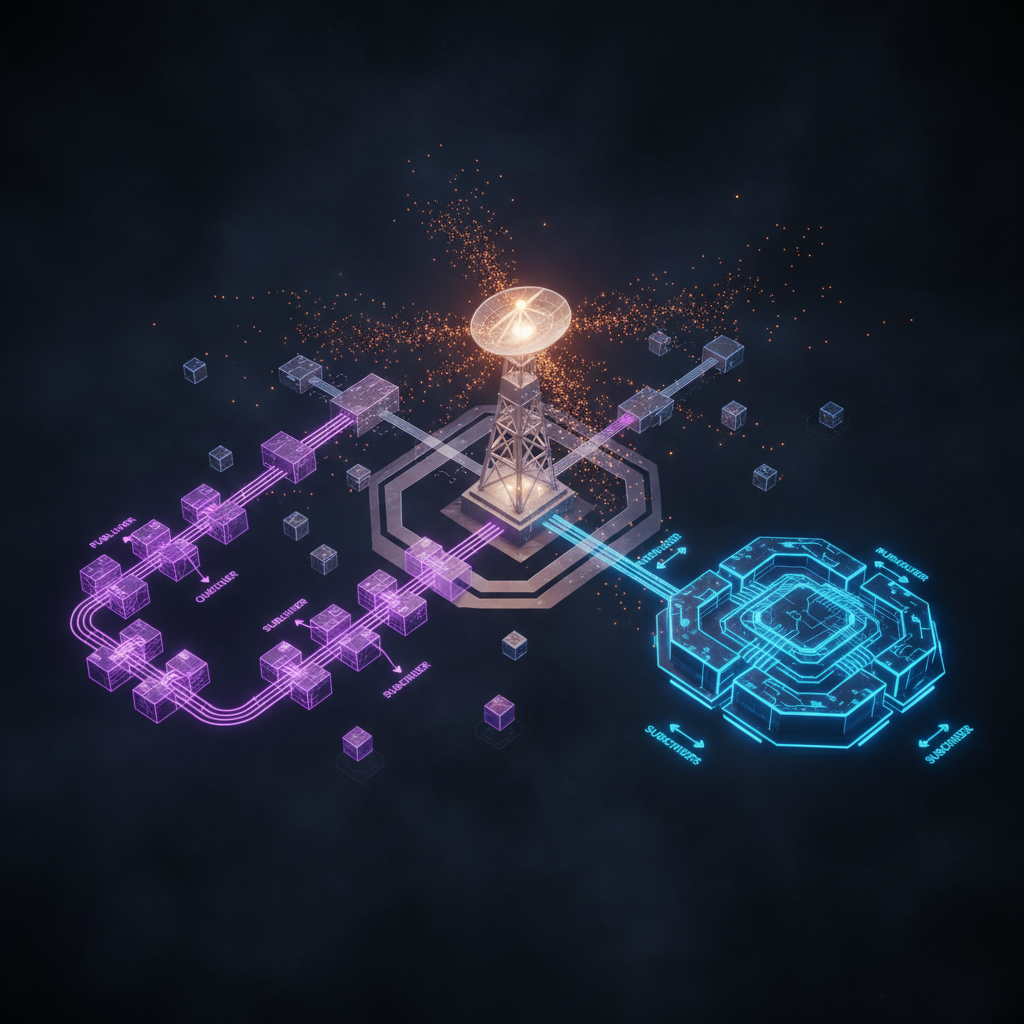

The AWS Messaging Landscape

AWS provides multiple messaging services. They overlap in confusing ways. The marketing materials make them sound interchangeable. They are not. Each service occupies a specific architectural niche based on its delivery model, ordering guarantees, throughput characteristics, and integration surface.

| Service | Model | Core Use Case | Delivery Guarantee |
|---|---|---|---|
| SNS | Pub/sub fan-out | One message to many subscribers | At-least-once |
| SQS | Point-to-point queue | Decouple producer from consumer, buffer workloads | At-least-once (Standard) / Exactly-once processing (FIFO) |
| EventBridge | Event bus with content-based routing | Event-driven architectures with filtering and transformation | At-least-once |
| Amazon MQ | Managed message broker (ActiveMQ, RabbitMQ) | Lift-and-shift from on-premises brokers | Depends on protocol (JMS, AMQP, MQTT) |
| Amazon MSK | Managed Apache Kafka | High-throughput streaming with consumer groups and replay | At-least-once / Exactly-once (idempotent producers + transactions) |
| Kinesis Data Streams | Managed streaming with shards | Real-time analytics, log aggregation, ordered event processing | At-least-once |

Three of these (SNS, SQS, EventBridge) are fully serverless: no capacity planning, no servers to manage, pay-per-message pricing. Two (Amazon MQ, MSK) are managed but broker-based: you provision capacity, choose instance sizes, manage storage (MSK Serverless, covered below, is the exception). Kinesis sits between the two camps: serverless-ish with on-demand mode, but shard management in provisioned mode.

The fully serverless trio (SNS + SQS + EventBridge) handles 80% of event-driven use cases in my experience. Amazon MQ exists for teams migrating from on-premises ActiveMQ or RabbitMQ deployments. MSK and Kinesis serve high-throughput streaming scenarios where ordered replay and consumer group semantics matter.

Amazon SNS: Pub/Sub Fan-Out

SNS (Simple Notification Service) is a fully managed publish/subscribe messaging service. Publishers send messages to topics. Subscribers receive copies of those messages. The publisher does not know (and does not care) who the subscribers are or how many exist. That decoupling is the entire point.

How SNS Works Internally

An SNS topic is a logical access point. When a publisher sends a message to a topic, the SNS service delivers a copy of that message to every subscription attached to that topic. Delivery happens in parallel across all subscribers. The publisher receives a successful response as soon as SNS accepts the message. Delivery to subscribers happens asynchronously after that.

SNS supports multiple subscription protocols:

| Protocol | Endpoint | Use Case |
|---|---|---|
| SQS | SQS queue ARN | Durable fan-out to queue-based consumers |
| Lambda | Lambda function ARN | Serverless event processing |
| HTTP/HTTPS | Webhook URL | External system integration |
| Email | Email address | Human notifications (not for programmatic use) |
| SMS | Phone number | Mobile alerts |
| Kinesis Data Firehose | Firehose delivery stream ARN | Archiving messages to S3, Redshift, OpenSearch |
| Platform application | Mobile push endpoint | iOS/Android push notifications |

The most important pattern in production: SNS + SQS fan-out. Every SQS subscription gets its own copy of every message. If the SQS consumer is slow or temporarily down, messages queue up. No message loss. No backpressure on the publisher or other subscribers. This pattern is the backbone of most event-driven architectures I build.

Standard vs. FIFO Topics

SNS offers two topic types. The naming mirrors SQS (Standard and FIFO) but the implications differ.

| Characteristic | Standard Topics | FIFO Topics |
|---|---|---|
| Message ordering | Best-effort | Strict ordering within message group |
| Deduplication | None (duplicates possible) | Content-based or explicit dedup ID |
| Throughput | Nearly unlimited | 300 messages/sec (or 10 MB/sec with batching) |
| Supported subscribers | All protocols | SQS FIFO queues only |
| Pricing | $0.50 per 1M requests | $0.50 per 1M requests |

Standard topics handle the vast majority of use cases. The throughput is effectively unlimited for most workloads. FIFO topics exist for scenarios requiring strict ordering (financial transactions, inventory updates) but carry a severe constraint: only SQS FIFO queues can subscribe. No Lambda. No HTTP. No email. This limitation shapes architecture decisions. If you need ordered delivery to a Lambda function, the pattern is SNS FIFO topic to SQS FIFO queue to Lambda.

Message Filtering

SNS subscription filters let subscribers receive only messages matching specific criteria. Without filters, every subscriber gets every message on the topic. With filters, each subscription includes a filter policy that evaluates message attributes. Only matching messages get delivered.

Filter policies support string matching, numeric matching, prefix matching, anything-but matching, and existence checks on message attributes. You can also apply filters to the message body (JSON payloads) rather than message attributes.

This matters architecturally because it eliminates the "filter on the consumer side" pattern. Without subscription filters, you publish all order events to a topic, every subscriber receives every event, and each subscriber throws away the events it does not care about. With subscription filters, the shipping service subscribes with a filter for event_type = order_shipped and the billing service subscribes with a filter for event_type = payment_confirmed. Each service receives only its relevant messages. SNS does the filtering server-side before delivery.

Filter policies evaluate against message attributes or message body content:

```json
{
  "event_type": ["order_shipped"],
  "region": [{"prefix": "us-"}],
  "order_value": [{"numeric": [">=", 100]}]
}
```
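To see how SNS combines the conditions in a policy like this, it can help to evaluate one locally. The following is a minimal Python sketch, not SNS's implementation: it assumes message attributes have already been decoded into Python strings and numbers, and it models only the three operators used above (exact string, `prefix`, `numeric`). The names `matches_filter_policy` and `_value_matches` are hypothetical.

```python
import operator
from typing import Any

# Comparison operators accepted inside a {"numeric": [...]} condition.
_NUMERIC_OPS = {"=": operator.eq, ">": operator.gt, ">=": operator.ge,
                "<": operator.lt, "<=": operator.le}


def _value_matches(value: Any, rule: Any) -> bool:
    """Check one attribute value against one entry of a policy's value list."""
    if isinstance(rule, dict):
        if "prefix" in rule:
            return isinstance(value, str) and value.startswith(rule["prefix"])
        if "numeric" in rule:
            if isinstance(value, bool) or not isinstance(value, (int, float)):
                return False
            conds = rule["numeric"]  # e.g. [">=", 100] or [">", 0, "<=", 5]
            return all(_NUMERIC_OPS[conds[i]](value, conds[i + 1])
                       for i in range(0, len(conds), 2))
        raise ValueError(f"operator not modeled in this sketch: {rule}")
    return value == rule  # plain value: exact match


def matches_filter_policy(policy: dict, attributes: dict) -> bool:
    """AND across policy keys; OR across the values listed for a single key."""
    for key, allowed in policy.items():
        if key not in attributes:
            return False
        if not any(_value_matches(attributes[key], rule) for rule in allowed):
            return False
    return True


policy = {
    "event_type": ["order_shipped"],
    "region": [{"prefix": "us-"}],
    "order_value": [{"numeric": [">=", 100]}],
}
print(matches_filter_policy(policy, {"event_type": "order_shipped",
                                     "region": "us-east-1",
                                     "order_value": 250}))  # True
```

Conditions on different keys are ANDed and the values listed for one key are ORed, which is why this policy accepts only shipped orders from US regions worth at least 100. In this sketch a missing attribute fails the whole policy, as it does in SNS for these operators.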

Delivery Reliability and Dead-Letter Queues

SNS delivers messages with at-least-once semantics. For most subscription protocols, SNS retries failed deliveries according to a protocol-specific retry policy.

| Protocol | Retry Behavior | Duration |
|---|---|---|
| SQS, Lambda, Firehose | Immediate retries, then backoff | Up to 100,015 total attempts over 23 days |
| HTTP/HTTPS | 3 immediate, then exponential backoff | 50 attempts over 6 hours by default (configurable via delivery policy) |
| Email, SMS | No retries | Single attempt |

For Lambda and SQS subscriptions, configure a dead-letter queue (DLQ). Messages that exhaust all retry attempts land in the DLQ for inspection. Without a DLQ, failed messages silently disappear. I have been burned by this more than once.

SNS Sizing and Cost

SNS pricing is straightforward:

| Component | Cost |
|---|---|
| First 1M requests/month | Free |
| Subsequent requests | $0.50 per 1M |
| Message delivery to SQS | Free |
| Message delivery to Lambda | Free |
| Message delivery to HTTP | $0.60 per 1M |
| Message delivery to Email | $2.00 per 100K |
| Message delivery to SMS | Varies by country ($0.00645/msg US) |
| Data transfer | $0.09/GB after first 1 GB |

For a system publishing 10 million messages/day to a topic with 3 SQS subscribers: roughly $5/day for publishes, $0 for SQS deliveries. SNS is one of the cheapest AWS services when used with SQS and Lambda subscribers.

SNS Failure Modes

Message attribute limits. SNS messages can carry up to 10 message attributes. The total message size (body + attributes) cannot exceed 256 KB. Teams that try to pack routing metadata into attributes run into these limits fast.

HTTP endpoint timeouts. SNS gives HTTP endpoints 15 seconds to respond with a 2xx status code. Slow endpoints cause retries, which cause duplicate processing, which causes data inconsistencies. If your HTTP subscriber needs more than a few seconds, put an SQS queue between SNS and the HTTP consumer.

Cross-account permissions. SNS topics can accept messages from and deliver to resources in other AWS accounts. Getting the resource policies right is tricky. The topic policy must allow the publisher's account to sns:Publish. The subscriber's SQS queue policy must allow the SNS topic to sqs:SendMessage. Miss the topic policy and publishes fail with an authorization error; miss the queue policy and deliveries fail silently from the publisher's point of view.
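For the SQS half of that setup, the queue policy might look like the following minimal sketch. It assumes a topic in one account delivering to a queue in another; the helper name `sns_to_sqs_queue_policy`, the statement `Sid`, and any ARNs you pass in are placeholders. The `aws:SourceArn` condition restricts the grant to that one topic.

```python
import json


def sns_to_sqs_queue_policy(queue_arn: str, topic_arn: str) -> str:
    """Return a queue policy (JSON string) that lets one SNS topic send to the queue."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowSnsTopicToSend",
                "Effect": "Allow",
                "Principal": {"Service": "sns.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn,
                # Scope the grant so only this topic (in any account) can write here.
                "Condition": {"ArnEquals": {"aws:SourceArn": topic_arn}},
            }
        ],
    }
    return json.dumps(policy)
```

The returned string is what you would set as the queue's `Policy` attribute (for example with `SetQueueAttributes`). The topic policy that grants `sns:Publish` to the publisher's account is the other half and is not shown.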

Amazon SQS: Message Queuing

SQS (Simple Queue Service) is the oldest messaging service in AWS. Launched in 2004, it predates most of the AWS portfolio. The concept is simple: producers put messages on a queue, consumers pull messages off the queue. The queue provides durability, buffering, and decoupling between producer and consumer.

Standard vs. FIFO Queues

SQS offers two queue types. The trade-offs are significant.

| Characteristic | Standard Queue | FIFO Queue |
|---|---|---|
| Throughput | Nearly unlimited | 300 msg/sec (3,000 with batching, 70,000 with high-throughput mode) |
| Ordering | Best-effort | Strict FIFO within message group |
| Delivery | At-least-once (duplicates possible) | Exactly-once processing |
| Deduplication | None | Content-based or explicit dedup ID (5-minute window) |
| Naming | Any valid name | Must end in .fifo |

Standard queues are the default choice. Nearly unlimited throughput, no configuration needed, simple programming model. The trade-off: messages might arrive out of order and might be delivered more than once. Both are rare in practice (I have measured duplicate rates below 0.01% in high-volume systems) but your consumer must be idempotent.

FIFO queues guarantee ordering within a message group and exactly-once processing. A message group is identified by a MessageGroupId on each message. All messages with the same group ID arrive in order. Messages in different groups have no ordering relationship and can be processed in parallel.

The throughput ceiling on FIFO queues (300 msg/sec base, up to 70,000/sec with high-throughput mode) makes them unsuitable for high-volume event processing. High-throughput FIFO mode increases the limit dramatically but requires careful message group design. If all your messages share one group ID, you get sequential processing at 300/sec no matter what.
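A toy model makes the message-group rules concrete. The sketch below is a hypothetical in-memory stand-in, not SQS: `ToyFifoQueue` assumes explicit deduplication IDs only, at most one in-flight message per group, and no visibility timeout. It shows per-group ordering, the 5-minute deduplication window, and why a message in flight blocks only its own group.

```python
from collections import deque

DEDUP_WINDOW_SECONDS = 300  # SQS FIFO deduplication interval: 5 minutes


class ToyFifoQueue:
    """Hypothetical in-memory model of SQS FIFO semantics, not the real service."""

    def __init__(self) -> None:
        self.groups: dict[str, deque] = {}       # group ID -> pending bodies, in order
        self.first_seen: dict[str, float] = {}   # dedup ID -> time first accepted
        self.in_flight: set[str] = set()         # groups with a message being processed

    def send(self, body: str, group_id: str, dedup_id: str, now: float) -> bool:
        seen_at = self.first_seen.get(dedup_id)
        if seen_at is not None and now - seen_at < DEDUP_WINDOW_SECONDS:
            # SQS reports success for a duplicate but does not enqueue it;
            # the model returns False so the drop is visible.
            return False
        self.first_seen[dedup_id] = now
        self.groups.setdefault(group_id, deque()).append(body)
        return True

    def receive(self):
        """Return (group_id, body) for the head of the first unlocked group, else None."""
        for group_id, messages in self.groups.items():
            if messages and group_id not in self.in_flight:
                self.in_flight.add(group_id)
                return group_id, messages.popleft()
        return None

    def delete(self, group_id: str) -> None:
        """Finish the in-flight message for a group, unlocking its next message."""
        self.in_flight.discard(group_id)
```

If every message used the same group ID, `receive` could never return a second message until `delete` ran, which is the serialization the paragraph above warns about.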

Visibility Timeout and Message Lifecycle

When a consumer reads a message from SQS, the message becomes invisible to other consumers for a configurable period called the visibility timeout. The consumer processes the message and then explicitly deletes it. If the consumer crashes or fails to delete the message before the visibility timeout expires, the message reappears in the queue for another consumer to pick up.

This mechanism is the core reliability guarantee of SQS. Messages persist until explicitly deleted. A failed consumer does not cause message loss.

Default visibility timeout: 30 seconds. Maximum: 12 hours. Getting this value right matters. Set it too short and a slow consumer will see the message get redelivered to another consumer while it is still processing (duplicate processing). Set it too long and a crashed consumer's messages sit invisible for hours before retrying.

Best practice: set visibility timeout to 6x your average processing time. If your consumer typically processes a message in 5 seconds, set timeout to 30 seconds. If processing time varies wildly, use the ChangeMessageVisibility API to extend the timeout as needed during processing.
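The lifecycle is easier to reason about as a small state machine. The following `ToyQueue` is a hypothetical in-memory model, not the SQS client: time is passed in explicitly, receipt handles are made-up strings, and `change_visibility` plays the role of ChangeMessageVisibility by restarting the timeout from the moment it is called.

```python
import itertools


class ToyQueue:
    """Hypothetical in-memory model of SQS visibility timeouts (not the real service)."""

    def __init__(self, visibility_timeout: float = 30) -> None:
        self.visibility_timeout = visibility_timeout
        self.messages: dict[int, str] = {}           # message ID -> body
        self.invisible_until: dict[int, float] = {}  # message ID -> time it reappears
        self.receipts: dict[str, int] = {}           # receipt handle -> message ID
        self._ids = itertools.count(1)

    def send(self, body: str) -> int:
        msg_id = next(self._ids)
        self.messages[msg_id] = body
        self.invisible_until[msg_id] = 0  # visible immediately
        return msg_id

    def receive(self, now: float):
        """Return (receipt, body) for the first visible message, hiding it; else None."""
        for msg_id, body in self.messages.items():
            if self.invisible_until[msg_id] <= now:
                self.invisible_until[msg_id] = now + self.visibility_timeout
                receipt = f"{msg_id}@{now}"
                self.receipts[receipt] = msg_id
                return receipt, body
        return None

    def change_visibility(self, receipt: str, now: float, timeout: float) -> None:
        # Like ChangeMessageVisibility: the new timeout counts from "now".
        self.invisible_until[self.receipts[receipt]] = now + timeout

    def delete(self, receipt: str) -> None:
        msg_id = self.receipts.pop(receipt)
        self.messages.pop(msg_id, None)
        self.invisible_until.pop(msg_id, None)


q = ToyQueue(visibility_timeout=30)
q.send("charge-card")
first_receipt, _ = q.receive(now=0)     # consumer A starts work
print(q.receive(now=10))                # None: hidden from other consumers
print(q.receive(now=31) is not None)    # True: A never deleted it, so it reappears
```

The last line is the duplicate-processing case: a consumer that needs longer than the timeout should either extend visibility while it works or the timeout should be raised.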

Long Polling vs. Short Polling

SQS supports two polling modes. Short polling returns immediately, even if no messages are available. Long polling waits up to 20 seconds for a message to arrive before returning empty.

Always use long polling. Set WaitTimeSeconds to 20. Short polling burns API calls (and money) checking empty queues. A queue consumer polling every second with short polling makes 86,400 API calls per day. With long polling at 20 seconds, the same consumer makes 4,320 calls per day. The difference compounds across dozens of queues.

Dead-Letter Queues and Redrive

SQS dead-letter queues (DLQs) catch messages that fail processing repeatedly. Configure a redrive policy on the source queue: after N receive attempts, move the message to the DLQ. N is configurable via maxReceiveCount.
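On the source queue, the redrive policy is stored as a JSON string in the `RedrivePolicy` attribute, with the keys `deadLetterTargetArn` and `maxReceiveCount`. A minimal helper that builds it might look like this; the function name and the default of 5 are illustrative choices, and any ARN you pass is a placeholder.

```python
import json


def redrive_policy_attribute(dlq_arn: str, max_receive_count: int = 5) -> dict:
    """Build the RedrivePolicy attribute for an SQS source queue (hypothetical helper)."""
    if max_receive_count < 1:
        raise ValueError("maxReceiveCount must be at least 1")
    return {
        # Queue attribute values are strings, so the policy itself is serialized JSON.
        "RedrivePolicy": json.dumps(
            {"deadLetterTargetArn": dlq_arn, "maxReceiveCount": str(max_receive_count)}
        )
    }
```

The returned dict is shaped for the `Attributes` parameter of `CreateQueue` or `SetQueueAttributes` (in boto3, `sqs.set_queue_attributes(QueueUrl=..., Attributes=attrs)`).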

DLQ redrive (added to the console in 2021, with the StartMessageMoveTask API following in 2023) lets you move messages from the DLQ back to the source queue, or to another queue, for reprocessing, optionally capping the move rate. This is essential for production operations. The workflow: message fails, lands in DLQ, engineer investigates and fixes the bug, engineer redrives the DLQ messages back through the fixed consumer.

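A common mistake: forgetting to set up a DLQ. Without one, there is no maxReceiveCount: a message that keeps failing cycles back into the queue until it exceeds the queue's message retention period (4 days by default, 14 days maximum), then silently disappears. The ApproximateNumberOfMessagesNotVisible metric climbs, the ApproximateAgeOfOldestMessage metric spikes, and nobody knows why messages are vanishing.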

Another common mistake: using the wrong queue type for the DLQ. A Standard queue's DLQ must be a Standard queue. A FIFO queue's DLQ must be a FIFO queue.

Lambda Integration with SQS

Lambda's SQS event source mapping is the most common way to consume SQS messages in a serverless architecture. Lambda polls the queue, retrieves batches of messages, and invokes your function with the batch. You do not manage pollers, threads, or scaling.

Key configuration parameters:

| Parameter | Default | Range | Recommendation |
|---|---|---|---|
| BatchSize | 10 | 1-10,000 (FIFO queues: 1-10) | Start with 10, increase for throughput-sensitive workloads (sizes above 10 require a batching window of at least 1 second) |
| MaximumBatchingWindowInSeconds | 0 | 0-300 | 5 seconds for cost optimization, 0 for latency |
| FunctionResponseTypes | None | ReportBatchItemFailures | Always enable this |
| MaximumConcurrency | Not set (bounded by account concurrency) | 2-1,000 | Set based on downstream capacity |

Always enable ReportBatchItemFailures. Without it, a single failed message in a batch causes the entire batch to retry. Nine successful messages get reprocessed because one failed. With ReportBatchItemFailures, your function returns a list of failed message IDs, and Lambda only retries those specific messages.
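A handler that reports partial failures might look like this minimal sketch. The response shape (`batchItemFailures` containing `itemIdentifier` entries set to each record's `messageId`) is Lambda's partial batch response format; `process_order` is a hypothetical stand-in for your business logic, and the handler assumes JSON message bodies.

```python
import json


def process_order(payload: dict) -> None:
    """Hypothetical business logic: raises on a message it cannot handle."""
    if "order_id" not in payload:
        raise ValueError("missing order_id")


def handler(event, context):
    """SQS-triggered handler returning a partial batch response.

    Lambda only honors the returned list when the event source mapping has
    FunctionResponseTypes=["ReportBatchItemFailures"].
    """
    failures = []
    for record in event["Records"]:
        try:
            process_order(json.loads(record["body"]))
        except Exception:
            # Only this message is left on the queue to be retried.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

For a FIFO queue, a common refinement is to stop at the first failure and report that message and every later one in the batch, so retries cannot reorder messages within a group.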

Set MaximumConcurrency thoughtfully. Lambda scales SQS consumers aggressively: it adds 60 concurrent invocations per minute until it hits your account's concurrency limit or MaximumConcurrency. If your SQS consumer writes to a database, uncontrolled scaling can overwhelm the database. Set MaximumConcurrency to match your downstream capacity.

SQS Sizing and Cost

| Component | Standard | FIFO |
|---|---|---|
| First 1M requests/month | Free | Free |
| Subsequent requests | $0.40 per 1M | $0.50 per 1M |
| Data transfer (in) | Free | Free |
| Data transfer (out) | $0.09/GB after 1 GB | $0.09/GB after 1 GB |

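A "request" is a single API call: SendMessage, ReceiveMessage, DeleteMessage. A batch of up to 10 messages in SendMessageBatch counts as 1 request, as long as the batch payload stays within 64 KB (each 64 KB chunk is billed as one request). Always use batch APIs for cost efficiency. Processing 100 million messages/day, with one send, one receive, and one delete per message, costs roughly $12/day in batches of 10 vs. $120/day without batching.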
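A quick way to check figures like these is to count API calls explicitly. The sketch below assumes each message (or each batch, when batching) costs a send, a receive, and a delete, that every batch fits in one 64 KB billing chunk, and it ignores the free tier. Under those assumptions, 100 million messages/day come to about $120/day unbatched and about $12/day in batches of 10; counting only sends (`calls_per_batch=1`) gives $40 and $4. The function name is hypothetical.

```python
import math

STANDARD_PRICE_PER_MILLION = 0.40  # USD per 1M requests, Standard queue


def daily_request_cost(messages_per_day: int, batch_size: int = 1,
                       calls_per_batch: int = 3) -> float:
    """Estimate daily SQS request charges in USD (simplified, see assumptions above)."""
    batches = math.ceil(messages_per_day / batch_size)
    return batches * calls_per_batch / 1_000_000 * STANDARD_PRICE_PER_MILLION


for size in (1, 10):
    print(size, round(daily_request_cost(100_000_000, batch_size=size), 2))
```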

SQS Failure Modes

Poison messages. A malformed message that causes every consumer to crash creates an infinite retry loop. Every consumer receives the message, crashes, the message reappears, another consumer picks it up, crashes. The DLQ is your safety net. Set maxReceiveCount to 3 or 5.

Backpressure mismanagement. SQS queues have no maximum depth. Messages accumulate indefinitely. This is a feature (buffering) and a risk (unbounded backlogs). Monitor ApproximateNumberOfMessagesVisible and alert when it exceeds expected levels. A queue backing up to millions of messages usually means a consumer is broken or undersized.

Message size limits. SQS messages cannot exceed 256 KB. For larger payloads, the standard pattern is "claim check": store the payload in S3 and put the S3 reference in the SQS message. The AWS SQS Extended Client Library automates this pattern.

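FIFO throttling. In standard throughput mode, a FIFO queue is capped at 300 API calls/sec (3,000 msg/sec with batching) regardless of message groups; high-throughput mode lifts the queue-level cap but still limits each message group to 300 msg/sec. If all messages share one group ID, your entire queue is limited to that single group's rate and is processed serially. Design message groups around the natural ordering boundary (order ID, customer ID, device ID) and you get parallel processing across groups.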

Amazon EventBridge: Event Routing and Transformation

EventBridge is a serverless event bus. Events flow in from AWS services, SaaS applications, or custom applications. Rules match events by content and route them to targets. EventBridge is newer than SNS and SQS (launched 2019) and occupies a different niche: content-based routing with transformation capabilities.

EventBridge vs. SNS

This comparison causes the most confusion. Both services deliver events to multiple consumers. The differences determine when to use which.

| Dimension | EventBridge | SNS |
|---|---|---|
| Routing model | Content-based (event pattern matching) | Topic-based (subscribe to topic) |
| Event filtering | Rich JSON pattern matching on any field | Message attributes or body filtering |
| Event transformation | Input transformers modify event before delivery | None (consumers receive raw message) |
| Schema | Schema registry with discovery and code generation | None |
| Throughput | Soft limit of 10,000 events/sec per account per Region by default (lower in some Regions) | Nearly unlimited |
| Targets | 28+ AWS service targets | 7 subscription protocols |
| Cross-account | Event bus to event bus | Topic to queue/function |
| Third-party integration | 100+ SaaS partner event sources | None |
| Archive and replay | Built-in event archiving and replay | None for standard topics (FIFO topics support archive and replay) |
| Pricing | $1.00 per 1M events published | $0.50 per 1M requests |

Use SNS when you need simple pub/sub fan-out with maximum throughput. A service publishes an event, N subscribers receive it. No complex routing logic. No event transformation. No need for archive/replay. SNS is cheaper and faster.

Use EventBridge when you need content-based routing (different events to different targets based on event content), event transformation (reshaping events before delivery), third-party SaaS integration, event archiving/replay, or schema management. EventBridge is the more capable service but costs twice as much and has lower default throughput.

In practice, I use both in the same architecture. SNS for high-volume internal fan-out (order events, inventory updates). EventBridge for cross-domain event routing (microservice integration), third-party webhooks, and any flow requiring transformation or archiving.

Event Buses, Rules, and Targets

EventBridge has three core concepts:

Event bus. A named channel that receives events. Every AWS account has a default event bus that receives events from AWS services automatically (EC2 state changes, CodePipeline stage transitions, S3 object notifications). You can create custom event buses for your own application events. You can also receive events from SaaS partners (Shopify, Zendesk, Auth0) on dedicated partner event buses.

Rules. Pattern-matching definitions that evaluate every event on a bus. A rule specifies an event pattern and one or more targets. When an event matches the pattern, EventBridge invokes all targets associated with that rule. Each event bus supports up to 300 rules (soft limit, can be increased).

Targets. AWS resources that receive matched events. A single rule can have up to 5 targets. Supported targets include Lambda, SQS, SNS, Step Functions, Kinesis Data Streams, Kinesis Data Firehose, ECS tasks, CodePipeline, API Gateway, CloudWatch Logs, Redshift, SageMaker, and more.

Event Pattern Matching

EventBridge patterns match against the JSON structure of events. The pattern language supports:

| Match Type | Example | Matches |
|---|---|---|
| Exact value | {"source": ["my.app"]} | Events where source equals "my.app" |
| Prefix | {"source": [{"prefix": "my."}]} | Events where source starts with "my." |
| Anything-but | {"source": [{"anything-but": "test"}]} | Events where source is not "test" |
| Numeric range | {"detail": {"price": [{"numeric": [">", 100]}]}} | Events where price > 100 |
| Exists | {"detail": {"error": [{"exists": true}]}} | Events containing an error field |
| Wildcard | {"detail": {"key": [{"wildcard": "order-*"}]}} | Events matching glob pattern |

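Combine patterns with implicit AND logic (all conditions must match) or use $or for OR logic; multiple values listed for the same field already match as OR. Pattern evaluation happens server-side. Events that match no rule are silently discarded, and no DLQ can capture them. Delivery failures are a separate problem: configure a dead-letter queue on each rule target to capture matched events that could not be delivered.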
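To make the pattern language concrete, here is a simplified matcher in Python, with patterns written as Python dicts. It is a hypothetical local approximation, not EventBridge's engine: it handles nested objects, exact values, `prefix`, `anything-but`, `numeric`, and `exists`, but not `$or`, `wildcard`, or array-valued event fields. For an authoritative answer, EventBridge's `TestEventPattern` API evaluates a pattern against a sample event.

```python
import operator
from typing import Any

_OPS = {"=": operator.eq, ">": operator.gt, ">=": operator.ge,
        "<": operator.lt, "<=": operator.le}


def _match_leaf(present: bool, value: Any, rule: Any) -> bool:
    """Evaluate one entry from a field's list of allowed values."""
    if not isinstance(rule, dict):
        return present and value == rule              # exact value
    if "exists" in rule:
        return present == rule["exists"]
    if not present:
        return False                                  # other operators need the field
    if "prefix" in rule:
        return isinstance(value, str) and value.startswith(rule["prefix"])
    if "anything-but" in rule:
        banned = rule["anything-but"]
        return value not in (banned if isinstance(banned, list) else [banned])
    if "numeric" in rule:
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return False
        conds = rule["numeric"]                       # e.g. [">", 100]
        return all(_OPS[conds[i]](value, conds[i + 1])
                   for i in range(0, len(conds), 2))
    raise ValueError(f"operator not modeled in this sketch: {rule}")


def matches_pattern(pattern: dict, event: dict) -> bool:
    """AND across keys; OR across the list of values given for one key."""
    for key, rule in pattern.items():
        if isinstance(rule, dict):                    # nested object: recurse
            sub = event.get(key)
            if not isinstance(sub, dict) or not matches_pattern(rule, sub):
                return False
        elif not any(_match_leaf(key in event, event.get(key), r) for r in rule):
            return False
    return True


pattern = {
    "source": [{"prefix": "my."}],
    "detail": {"price": [{"numeric": [">", 100]}], "error": [{"exists": False}]},
}
print(matches_pattern(pattern, {"source": "my.app", "detail": {"price": 150}}))  # True
```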

Input Transformation

EventBridge can reshape events before delivering them to targets. This eliminates the need for "adapter" Lambda functions that exist solely to transform event formats.

An input transformer has two parts: input paths extract values from the event, and an input template builds the target payload using those values.

```json
{
  "InputPathsMap": {
    "orderId": "$.detail.order_id",
    "customer": "$.detail.customer_name",
    "total": "$.detail.total_amount"
  },
  "InputTemplate": "{\"order\": <orderId>, \"customer\": <customer>, \"amount\": <total>}"
}
```

This means the target receives a clean, minimal payload regardless of the source event's structure. Different rules on the same bus can transform the same event differently for different targets. The shipping service gets order ID and address. The billing service gets order ID and payment method. The analytics service gets everything. All from one event, zero Lambda functions for glue logic.

EventBridge Pipes

EventBridge Pipes (launched 2022) connect sources to targets with optional filtering, enrichment, and transformation in between. Think of it as a managed integration pipeline.

A pipe has four stages:

- Source. Reads events from SQS, Kinesis, DynamoDB Streams, Amazon MQ, or Kafka.

- Filtering. Applies EventBridge-style pattern matching to discard unwanted events.

- Enrichment. Optionally calls Lambda, Step Functions, API Gateway, or API Destinations to augment the event.

- Target. Delivers the (filtered, enriched, transformed) event to any EventBridge target.

Pipes replace glue Lambda functions that poll a source, filter, enrich, and forward. I have replaced dozens of "SQS to Lambda to EventBridge" patterns with a single Pipe definition.

EventBridge Scheduler

EventBridge Scheduler (launched 2022) is a fully managed task scheduler. It supersedes the older EventBridge scheduled rules for one-time and recurring scheduled invocations, though scheduled rules still work.

| Feature | Scheduled Rules | EventBridge Scheduler |
|---|---|---|
| One-time schedules | No | Yes |
| Timezone support | No (UTC only) | Yes |
| Maximum schedules | 300 per bus | 1 million per account |
| Flexible time windows | No | Yes (1-15 minute window) |
| Retry policy | None | Configurable (up to 185 retries over 24 hours) |
| Dead-letter queue | No | Yes |
| Pricing | Free (part of EventBridge) | Free (first 14M invocations/month) |

Use Scheduler over scheduled rules for everything. Timezone support alone justifies the switch. The 1-million-schedule limit enables patterns like per-customer reminder schedules or per-order timeout timers that would be impossible with 300-rule-limited scheduled rules.
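A per-order timeout timer is a one-time schedule. The following sketch builds the arguments for the Scheduler `CreateSchedule` call as a plain dict; `one_time_timeout_schedule`, the schedule naming scheme, and the payload are hypothetical, and the target and role ARNs are placeholders you would supply. One-time schedules use an `at(yyyy-mm-ddThh:mm:ss)` expression evaluated in the zone named by `ScheduleExpressionTimezone`.

```python
import json
from datetime import datetime


def one_time_timeout_schedule(order_id: str, fire_at: datetime, tz: str,
                              target_arn: str, role_arn: str) -> dict:
    """Build CreateSchedule arguments for a per-order timeout (hypothetical helper).

    fire_at is a naive local time, interpreted in the IANA time zone `tz`.
    """
    return {
        "Name": f"order-timeout-{order_id}",
        "ScheduleExpression": f"at({fire_at.strftime('%Y-%m-%dT%H:%M:%S')})",
        "ScheduleExpressionTimezone": tz,
        "FlexibleTimeWindow": {"Mode": "OFF"},  # fire at the exact time
        "Target": {
            "Arn": target_arn,
            "RoleArn": role_arn,  # role Scheduler assumes to invoke the target
            "Input": json.dumps({"order_id": order_id, "reason": "payment_timeout"}),
        },
        # Delete the schedule once it has fired (newer parameter; check your SDK).
        "ActionAfterCompletion": "DELETE",
    }


params = one_time_timeout_schedule(
    "A-1", datetime(2025, 3, 1, 9, 30), "America/New_York",
    "arn:aws:sqs:us-east-1:123456789012:order-timeouts",
    "arn:aws:iam::123456789012:role/scheduler-invoke",
)
print(params["ScheduleExpression"])  # at(2025-03-01T09:30:00)
```

With boto3 this dict would be passed as `boto3.client("scheduler").create_schedule(**params)`. Deleting spent one-time schedules keeps per-order timers from accumulating against the schedule quota.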

EventBridge Sizing and Cost

| Component | Cost |
|---|---|
| Custom events published | $1.00 per 1M |
| AWS service events | Free |
| Partner events | Varies by partner |
| Schema discovery | Free |
| Event archive storage | $0.023/GB (same as S3 Standard) |
| Event replay | $0.10 per 1M events replayed |
| Pipes | $0.40/1M requests + enrichment costs |
| Scheduler | Free (first 14M invocations/month) |

EventBridge costs more than SNS for high-volume fan-out. At 100 million custom events/day, EventBridge costs $100/day vs. $50/day for SNS. The difference buys you content-based routing, transformation, archiving, and replay. Worth it for cross-domain event routing. Overkill for simple pub/sub.

EventBridge Failure Modes

Throughput limits. The default soft limit of 10,000 events/sec, which applies per account per Region (and is lower in some Regions), catches teams off guard during traffic spikes. Request a limit increase well before launch. I routinely request 50,000-100,000 events/sec for production accounts.

Rule evaluation order. Rules on a bus evaluate independently and in no guaranteed order. If you need sequential processing, EventBridge is the wrong tool. Use SQS FIFO or Step Functions.

Target delivery failures. When a target fails (Lambda throttled, SQS permission denied), EventBridge retries for up to 24 hours (185 attempts by default) with exponential backoff. Without a DLQ on the target, exhausted retries result in silent message loss. Always configure a DLQ on every EventBridge rule target.

Input transformer escaping. JSON escaping in input templates is brittle. Strings containing quotes, special characters, or nested JSON break input transformers in non-obvious ways. Test transformers with production-realistic payloads, not sanitized examples.

Amazon MQ: Managed Message Brokers

Amazon MQ is a managed service for Apache ActiveMQ and RabbitMQ. It exists for a specific audience: teams migrating from on-premises message brokers who need protocol compatibility (JMS, AMQP 0-9-1, AMQP 1.0, MQTT, OpenWire, STOMP) with existing client applications.

When to Use Amazon MQ

If you are building a new application on AWS with no existing broker dependencies, do not use Amazon MQ. Use SNS, SQS, or EventBridge. They are serverless, cheaper, and require zero operational overhead.

Amazon MQ makes sense in exactly these scenarios:

- Migrating from on-premises ActiveMQ or RabbitMQ. Existing applications use JMS or AMQP clients. Rewriting those clients for SNS/SQS would take months. Amazon MQ provides a drop-in replacement with managed infrastructure.

- Protocol requirements. The application requires MQTT (IoT devices), STOMP (web clients), or AMQP 1.0 (enterprise messaging). SNS/SQS only support their own APIs.

- Complex routing topologies. RabbitMQ exchanges (topic, fanout, headers, direct) or ActiveMQ composite destinations provide routing capabilities that differ from SNS/EventBridge patterns.

Broker Types and Sizing

Amazon MQ for ActiveMQ offers single-instance and active/standby deployment modes. Amazon MQ for RabbitMQ offers single-instance and cluster deployment modes (3-node clusters for high availability).

| Instance Type | vCPUs | Memory | Network | Use Case |
|---|---|---|---|---|
| mq.t3.micro | 2 | 1 GB | Low-moderate | Development, testing |
| mq.m5.large | 2 | 8 GB | Up to 10 Gbps | Light production |
| mq.m5.xlarge | 4 | 16 GB | Up to 10 Gbps | Medium production |
| mq.m5.2xlarge | 8 | 32 GB | Up to 10 Gbps | Heavy production |
| mq.m5.4xlarge | 16 | 64 GB | Up to 10 Gbps | High throughput |

Active/standby ActiveMQ brokers provide automatic failover. The standby instance sits idle, replicating storage from the active. Failover takes 1-2 minutes. During failover, clients using the failover transport reconnect automatically. That 1-2 minute window is unavoidable downtime. Plan for it.

RabbitMQ clusters distribute queues across 3 nodes. Classic mirrored queues replicate messages to all nodes. Quorum queues (the modern replacement) use Raft consensus for safer replication. Always use quorum queues for new RabbitMQ deployments.

Amazon MQ Costs

Amazon MQ pricing is instance-hour based, similar to EC2:

| Component | Cost (us-east-1) |
|---|---|
| mq.t3.micro (single) | $0.036/hr (~$26/month) |
| mq.m5.large (single) | $0.302/hr (~$217/month) |
| mq.m5.large (active/standby) | $0.604/hr (~$435/month) |
| Storage (ActiveMQ) | $0.10/GB/month |
| Storage (RabbitMQ) | $0.10/GB/month |
| Data transfer | Standard AWS rates |

Compare this to SQS at $0.40 per million requests. At 1 million messages/day, counting only the SendMessage calls, SQS costs ~$12/month. A single production-grade mq.m5.large broker costs $217/month. The break-even point is roughly 500 million requests/month, and even then SQS wins because it requires zero operational effort.
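The break-even arithmetic is a single division, but the answer depends on how many billed requests each message costs. A minimal sketch, assuming the $0.40-per-million Standard price and the $217/month mq.m5.large figure from the table above (the function name is hypothetical):

```python
def break_even_messages_per_month(monthly_broker_cost: float,
                                  requests_per_message: float = 1,
                                  price_per_million: float = 0.40) -> float:
    """Monthly message volume at which SQS request charges equal a fixed broker bill."""
    cost_per_message = requests_per_message * price_per_million / 1_000_000
    return monthly_broker_cost / cost_per_message


print(f"{break_even_messages_per_month(217) / 1e6:.1f}M")     # 542.5M (sends only)
print(f"{break_even_messages_per_month(217, 3) / 1e6:.1f}M")  # 180.8M (send + receive + delete)
```

Counting one request per message gives roughly 540 million messages/month; counting a send, a receive, and a delete lowers the break-even to roughly 180 million, and batching pushes it back up by as much as 10x.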

Amazon MSK: Managed Apache Kafka

Amazon MSK (Managed Streaming for Apache Kafka) runs Apache Kafka clusters on AWS-managed infrastructure. Kafka occupies a fundamentally different architectural niche from SNS, SQS, and EventBridge. It is a distributed commit log with consumer group semantics, ordered replay, and configurable retention.

When Kafka Beats SQS/SNS

Kafka shines where SQS/SNS struggle:

Ordered replay. Kafka retains messages for a configurable period (default 7 days, up to infinite). Any consumer can replay the log from any point. SQS deletes messages after successful processing. If you need to reprocess last week's events with a new consumer, Kafka supports that natively. SQS does not.

Consumer groups. Multiple independent consumer groups can read the same topic at their own pace. Consumer group A might be real-time analytics processing events as they arrive. Consumer group B might be a batch job reading events from yesterday. Both read from the same topic without interference. In SNS/SQS, you would need an SNS topic fanning out to separate SQS queues per consumer.
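The difference from a queue is that reading does not remove anything; each consumer group only tracks its own position. The toy model below is a hypothetical single-partition log, not a Kafka client: `ToyLog` keeps one offset per group, `poll` advances it, and `seek` rewinds one group for replay without touching the others.

```python
from collections import defaultdict


class ToyLog:
    """Hypothetical single-partition log with per-group offsets (Kafka-like, not Kafka)."""

    def __init__(self) -> None:
        self.records: list[str] = []
        self.offsets = defaultdict(int)  # consumer group -> next offset to read

    def append(self, record: str) -> int:
        self.records.append(record)
        return len(self.records) - 1     # offset of the new record

    def poll(self, group: str, max_records: int = 10) -> list[str]:
        start = self.offsets[group]
        batch = self.records[start:start + max_records]
        self.offsets[group] = start + len(batch)  # "commit" after reading
        return batch

    def seek(self, group: str, offset: int) -> None:
        # Replay: move one group's position; other groups are unaffected.
        self.offsets[group] = offset


log = ToyLog()
for event in ("e0", "e1", "e2"):
    log.append(event)
print(log.poll("realtime"))                 # ['e0', 'e1', 'e2']
print(log.poll("batch", max_records=2))     # ['e0', 'e1']
log.seek("realtime", 0)                     # replay from the beginning
print(log.poll("realtime", max_records=1))  # ['e0']
```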

High-throughput ordered streaming. Kafka partitions provide ordered processing at scale. A topic with 100 partitions supports 100 parallel consumers, each processing an ordered slice of the data. SQS FIFO queues max out at 70,000 msg/sec with high-throughput mode and require careful message group design.

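Exactly-once semantics. Kafka supports exactly-once processing with idempotent producers and transactional writes. The guarantee covers consume-transform-produce flows inside Kafka (read from one topic, write to another, and commit offsets atomically); side effects in external systems still need idempotent consumers. Within that scope it is true exactly-once, not the "effectively exactly-once" of SQS FIFO (which is deduplication within a 5-minute window).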

MSK Provisioned vs. Serverless

| Characteristic | MSK Provisioned | MSK Serverless |
|---|---|---|
| Capacity management | You choose broker count, instance type, storage | Automatic |
| Minimum cost | ~$200/month (3 kafka.t3.small brokers) | Pay per use, on top of a cluster-hour charge |
| Maximum throughput | Depends on instance type | 200 MB/sec write, 400 MB/sec read per cluster |
| Configuration | Full Kafka config access | Limited (managed defaults) |
| Kafka version | You choose | AWS-managed |
| Tiered storage | Yes | Yes (included) |
| VPC requirement | Yes | Yes |
| Pricing | Instance-hours + storage + data transfer | $0.75/hr per cluster + $0.0015/hr per partition + $0.10/GB in + $0.05/GB out |

MSK Serverless removes capacity planning. You create a cluster, create topics, and AWS handles scaling. The trade-off: you lose fine-grained Kafka configuration and pay a premium at high volumes.

For new Kafka workloads on AWS, start with MSK Serverless. Migrate to Provisioned when you need specific Kafka configurations or when Serverless pricing exceeds Provisioned costs at your volume.

MSK Connect

MSK Connect runs Kafka Connect connectors as a managed service. Source connectors pull data into Kafka (from databases, S3, SaaS APIs). Sink connectors push data from Kafka to destinations (S3, OpenSearch, Redshift, DynamoDB).

Popular connectors:

| Connector | Direction | Use Case |
|---|---|---|
| Debezium | Source | Change data capture from MySQL, PostgreSQL, MongoDB |
| S3 Sink | Sink | Archive Kafka topics to S3 in Parquet/Avro/JSON |
| OpenSearch Sink | Sink | Real-time search indexing |
| JDBC Source | Source | Poll database tables for changes |
| HTTP Sink | Sink | Forward events to REST APIs |

MSK Connect handles the operational burden of running Connect workers: scaling, monitoring, fault tolerance. You provide the connector plugin JAR, the configuration, and the capacity parameters. AWS handles the rest.

MSK vs. Kinesis Data Streams

Both are ordered streaming services. The decision depends on your team's Kafka expertise and your integration requirements.

| Dimension | MSK | Kinesis Data Streams |
|---|---|---|
| Protocol | Apache Kafka (open source) | AWS proprietary API |
| Client ecosystem | Massive (any Kafka client) | AWS SDK, KCL, KPL |
| Consumer groups | Native | Must implement with KCL/enhanced fan-out |
| Retention | Unlimited | Up to 365 days |
| Throughput per partition/shard | Depends on instance (MB/sec) | 1 MB/sec in, 2 MB/sec out per shard |
| Operational complexity | Higher (Kafka expertise needed) | Lower (fully managed) |
| Pricing | Instance-based or serverless | $0.015/shard-hour + $0.014/million PUT units (provisioned mode) |
| Portability | High (standard Kafka) | Low (AWS-specific) |

Use MSK if your team has Kafka expertise, you need the Kafka Connect ecosystem, you want portability across clouds, or you need infinite retention.

Use Kinesis if you want zero operational overhead, your throughput needs are moderate (hundreds of shards, not thousands of partitions), or you are already deep in the AWS ecosystem with Lambda, Firehose, and analytics services that integrate natively with Kinesis.
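The per-shard limits in the table translate directly into a sizing rule for provisioned Kinesis streams: take the largest shard count demanded by write bytes, write records, or read bytes. The sketch below assumes that all consumers share the standard 2 MB/sec read limit. Enhanced fan-out instead gives each registered consumer its own 2 MB/sec per shard. `shards_needed` is a hypothetical helper.

```python
import math

# Per-shard limits for provisioned Kinesis Data Streams.
SHARD_WRITE_MB_PER_SEC = 1.0
SHARD_WRITE_RECORDS_PER_SEC = 1000
SHARD_READ_MB_PER_SEC = 2.0  # shared by all standard (non-enhanced-fan-out) consumers


def shards_needed(write_mb_per_sec: float, write_records_per_sec: float,
                  read_mb_per_sec_per_consumer: float, consumers: int = 1) -> int:
    """Smallest shard count that stays within every per-shard limit."""
    by_write_bytes = math.ceil(write_mb_per_sec / SHARD_WRITE_MB_PER_SEC)
    by_write_records = math.ceil(write_records_per_sec / SHARD_WRITE_RECORDS_PER_SEC)
    # Standard consumers share the read limit, so their demand adds up.
    by_read_bytes = math.ceil(read_mb_per_sec_per_consumer * consumers / SHARD_READ_MB_PER_SEC)
    return max(1, by_write_bytes, by_write_records, by_read_bytes)


example = shards_needed(3.0, 2000, 3.0, consumers=2)
```

For example, 3 MB/sec of writes at 2,000 records/sec, read in full by each of two standard consumers, needs 3 shards. On-demand mode removes this calculation in exchange for a different pricing model.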

Architectural Patterns

Pattern 1: Fan-Out with SNS + SQS

The foundational pattern. One event triggers multiple independent processors.

Producer → SNS Topic → SQS Queue A → Consumer A (order processing)
                     → SQS Queue B → Consumer B (email notification)
                     → SQS Queue C → Consumer C (analytics)

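Each consumer processes at its own pace. If Consumer B is slow, its queue buffers messages while Consumers A and C continue unaffected. If Consumer C crashes, its queue accumulates messages until it recovers. Nothing is lost, provided it recovers within the queue's message retention period (4 days by default, up to 14 days).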

This pattern handles millions of messages per day. I use it as the default starting point for any event-driven architecture.
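This pattern depends on one configuration step: each queue's access policy must allow the topic to send to it, or SNS deliveries to that queue fail. Below is a minimal sketch of such a policy, assuming hypothetical queue and topic ARNs in account 123456789012. The `Condition` restricts access to that single topic.

```python
import json


def sns_to_sqs_queue_policy(queue_arn: str, topic_arn: str) -> str:
    """Queue access policy that lets one SNS topic send to one SQS queue."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowSnsFanOut",
                "Effect": "Allow",
                "Principal": {"Service": "sns.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn,
                # Only this topic may deliver here; other topics are denied by default.
                "Condition": {"ArnEquals": {"aws:SourceArn": topic_arn}},
            }
        ],
    }
    return json.dumps(policy)


# Hypothetical ARNs for the email-notification queue and the order-events topic.
policy_json = sns_to_sqs_queue_policy(
    "arn:aws:sqs:us-east-1:123456789012:email-notifications",
    "arn:aws:sns:us-east-1:123456789012:order-events",
)
```

The resulting JSON is what you would set as the queue's `Policy` attribute, for example with `sqs.set_queue_attributes(QueueUrl=queue_url, Attributes={"Policy": policy_json})` in boto3.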

Pattern 2: Event-Driven Microservices with EventBridge

EventBridge acts as the central nervous system across microservice boundaries.

Order Service → EventBridge (default bus) → Rule: "order.created" → SQS → Shipping Service
                                          → Rule: "order.created" → Lambda → Analytics
                                          → Rule: "order.cancelled" → SQS → Refund Service
                                          → Rule: "order.updated" → SNS → Notification Topic

The Order Service publishes events to EventBridge without knowing who consumes them. Adding a new consumer means adding a new rule. No changes to the Order Service. No redeployment.
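Each rule makes its routing decision by matching its event pattern against the event's fields. The sketch below models only the exact-value subset of EventBridge pattern matching; prefix, numeric, anything-but, and exists filters are left out. It assumes a hypothetical source name `com.example.orders` and uses the detail-type values from the diagram.

```python
from typing import Any


def matches(pattern: dict[str, Any], event: dict[str, Any]) -> bool:
    """Exact-value subset of EventBridge pattern matching.

    Each pattern leaf is a list of allowed values; nested dicts descend into
    the event. Prefix, numeric, anything-but and exists filters are not modelled.
    """
    for field, expected in pattern.items():
        if field not in event:
            return False
        actual = event[field]
        if isinstance(expected, dict):
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


# Hypothetical source name; detail-type values follow the diagram.
SHIPPING_RULE = {"source": ["com.example.orders"], "detail-type": ["order.created"]}
REFUND_RULE = {"source": ["com.example.orders"], "detail-type": ["order.cancelled"]}

event = {
    "source": "com.example.orders",
    "detail-type": "order.created",
    "detail": {"orderId": "o-123", "total": 42.5},
}
```

Adding the Refund Service amounts to adding `REFUND_RULE`; the event the Order Service publishes does not change. In the PutEvents API, these fields are sent as `Source`, `DetailType`, and a JSON-string `Detail`. Patterns refer to them as `source`, `detail-type`, and `detail`.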

Pattern 3: Request Buffering with SQS

Protect a slow downstream service from traffic spikes.

API Gateway → SQS Queue → Lambda (controlled concurrency: 10) → Database

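Calling Lambda directly from API Gateway lets Lambda scale to thousands of concurrent invocations and overwhelm the database. In this pattern, API Gateway writes each request to the queue instead, and the queue absorbs the burst. Lambda drains the queue at a controlled rate because the event source mapping's MaximumConcurrency setting caps concurrent invocations. The database sees steady, predictable load. The trade-off: the client receives an acknowledgment that the request was queued, not the result of the write.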
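Both controls in this pattern live on the Lambda event source mapping. Below is a minimal sketch that builds the keyword arguments for boto3's `create_event_source_mapping`, assuming a hypothetical queue ARN and function name. The helper makes no AWS calls, so you can inspect its output before using it.

```python
from typing import Any


def buffered_consumer_mapping(queue_arn: str, function_name: str,
                              max_concurrency: int = 10) -> dict[str, Any]:
    """Keyword arguments for Lambda CreateEventSourceMapping on an SQS queue.

    Intended use: boto3.client("lambda").create_event_source_mapping(**kwargs)
    """
    if max_concurrency < 2:
        # Lambda rejects MaximumConcurrency values below 2.
        raise ValueError("MaximumConcurrency must be at least 2")
    return {
        "EventSourceArn": queue_arn,
        "FunctionName": function_name,
        "BatchSize": 10,
        # Caps concurrent invocations driven by this queue, which is what
        # keeps the database load steady during a traffic spike.
        "ScalingConfig": {"MaximumConcurrency": max_concurrency},
        # Lets the handler report individual failed messages (partial batch response).
        "FunctionResponseTypes": ["ReportBatchItemFailures"],
    }


# Hypothetical queue and function names.
kwargs = buffered_consumer_mapping(
    "arn:aws:sqs:us-east-1:123456789012:write-requests", "db-writer"
)
```

MaximumConcurrency applies per event source mapping. It limits how hard this queue can drive the function without reserving capacity for the function as a whole.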

Pattern 4: Saga Orchestration with Step Functions + SQS

Long-running transactions across multiple services using the saga pattern.

Step Functions → Lambda: Reserve Inventory
               → Lambda: Process Payment
               → Lambda: Ship Order
  (on failure) → Lambda: Compensate Payment
               → Lambda: Release Inventory

Each step succeeds or triggers compensation. Step Functions tracks state. SQS queues between Step Functions and each service provide buffering and retry isolation. If the payment service is temporarily down, the SQS queue holds the request rather than failing the entire saga.
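Step Functions encodes this control flow with Catch transitions. The steps run in order, and on the first failure the compensations for the steps that already succeeded run in reverse order. The sketch below models only that logic in plain Python. It is not Step Functions code, and the step names and `carrier_down` failure are hypothetical.

```python
from typing import Callable, Optional

Action = Callable[[], None]


def run_saga(steps: list[tuple[str, Action, Optional[Action]]]) -> list[str]:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    log: list[str] = []
    completed: list[tuple[str, Optional[Action]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            # Compensate newest-first, so later steps are undone before earlier ones.
            for done_name, undo in reversed(completed):
                if undo is not None:
                    undo()
                    log.append(f"compensated:{done_name}")
            return log
        completed.append((name, compensate))
        log.append(f"done:{name}")
    return log


# Hypothetical steps: the shipping call fails after payment succeeded.
def succeed() -> None:
    pass


def carrier_down() -> None:
    raise RuntimeError("shipping API unavailable")


log = run_saga([
    ("reserve_inventory", succeed, succeed),  # compensation: release inventory
    ("process_payment", succeed, succeed),    # compensation: refund payment
    ("ship_order", carrier_down, None),
])
```

The compensation order matches the diagram: the payment is compensated before the inventory is released. In the state machine, each forward step would be a Task state whose Catch routes to the first compensation it needs.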

Pattern 5: Change Data Capture with MSK

Stream database changes to multiple downstream systems.

PostgreSQL → Debezium (MSK Connect) → MSK Topic → Consumer Group A: Search Index
                                                → Consumer Group B: Analytics
                                                → Consumer Group C: Cache Invalidation
                                                → S3 Sink Connector: Data Lake

Every database change becomes an event. Consumer groups process independently. The Kafka log provides replay capability. A new consumer can start from the beginning of the log and rebuild its state from scratch, provided the topic's retention (or log compaction) still holds the history it needs.
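Each consumer in this pattern reads Debezium's change-event envelope, where `op` marks the operation and `before`/`after` hold the row images. The sketch below shows the core logic of the cache-invalidation consumer. It assumes JSON-serialized events, a hypothetical `customer` table with an `id` primary key, and a hypothetical `customer:<id>` cache key format.

```python
import json
from typing import Optional


def cache_key_to_invalidate(raw_value: Optional[bytes]) -> Optional[str]:
    """Map one Debezium change event for the hypothetical customer table to a cache key."""
    if raw_value is None:
        # Tombstone that follows a delete; the delete event itself was already handled.
        return None
    envelope = json.loads(raw_value)
    # With JSON schemas enabled the change sits under "payload"; without, it is top-level.
    change = envelope.get("payload", envelope)
    op = change["op"]  # "c" create, "u" update, "d" delete, "r" snapshot read
    # Deletes carry the row only in "before"; every other operation has "after".
    row = change["before"] if op == "d" else change["after"]
    return f"customer:{row['id']}"


update_event = json.dumps({
    "payload": {"op": "u", "before": {"id": 7, "email": "old@example.com"},
                "after": {"id": 7, "email": "new@example.com"}}
}).encode()
delete_event = json.dumps({"op": "d", "before": {"id": 9}, "after": None}).encode()
```

Checking for the `payload` wrapper covers both converter settings (JSON schemas enabled or disabled). The `None` case handles the tombstone Debezium emits after a delete by default, which lets log compaction drop the key.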

Pattern 6: Cross-Account Event Distribution

EventBridge event buses in a hub-and-spoke model.

Account A (Orders) → EventBridge Bus → Cross-account rule → Account C (Analytics) EventBridge Bus

Account B (Payments) → EventBridge Bus → Cross-account rule → Account C (Analytics) EventBridge Bus

Each team owns their event bus. The analytics team subscribes to relevant events from multiple source accounts. Resource policies control which accounts can send events to which buses. This pattern scales to dozens of accounts and hundreds of event types.
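On the receiving side, the resource policy is the entire access model. Below is a minimal sketch that builds a policy for the analytics bus. It assumes hypothetical account IDs for the Orders (111111111111) and Payments (222222222222) accounts and a hypothetical bus ARN in the analytics account.

```python
import json


def analytics_bus_policy(bus_arn: str, source_accounts: list[str]) -> str:
    """Resource policy allowing PutEvents onto the analytics bus from listed accounts."""
    statements = [
        {
            "Sid": f"AllowPutEventsFrom{account}",
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{account}:root"},
            "Action": "events:PutEvents",
            "Resource": bus_arn,
        }
        for account in source_accounts
    ]
    return json.dumps({"Version": "2012-10-17", "Statement": statements})


# Hypothetical Orders and Payments accounts sending to a hypothetical analytics bus.
bus_policy = json.loads(analytics_bus_policy(
    "arn:aws:events:us-east-1:333333333333:event-bus/analytics",
    ["111111111111", "222222222222"],
))
```

Listing accounts explicitly keeps the trust boundary visible in one document. For an organization with many accounts, a common alternative is a single statement using the `aws:PrincipalOrgID` condition key.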

Choosing the Right Service

Decision framework based on production experience:

| Question | Answer → Service |
|---|---|
| Do I need simple pub/sub fan-out? | SNS |
| Do I need to buffer and decouple a producer from a consumer? | SQS |
| Do I need content-based routing across multiple services? | EventBridge |
| Do I need to preserve message ordering? | SQS FIFO or Kinesis or MSK |
| Do I need event replay from the past? | EventBridge (archive) or MSK (log retention) |
| Do I need to transform events before delivery? | EventBridge (input transformers) or EventBridge Pipes |
| Do I need JMS/AMQP/MQTT protocol compatibility? | Amazon MQ |
| Do I need high-throughput streaming with consumer groups? | MSK |
| Do I need real-time analytics on streaming data? | Kinesis |

Most architectures combine multiple services. A typical production system I build uses EventBridge for cross-service event routing, SNS + SQS for high-volume fan-out within a service boundary, and Step Functions for multi-step orchestration. MSK appears when the data engineering team needs streaming replay. Amazon MQ appears only in migration scenarios.

Cost Comparison at Scale

Monthly costs for processing 100 million events/day (~3 billion/month):

| Service | Configuration | Approximate Monthly Cost |
|---|---|---|
| SQS Standard | Batched send/receive/delete | ~$400 |
| SNS + SQS | 1 topic, 3 queues | ~$600 |
| EventBridge | Custom events | ~$3,000 |
| MSK Serverless | Moderate throughput | ~$2,000-5,000 |
| MSK Provisioned | 3x kafka.m5.large | ~$1,500 |
| Kinesis | 50 shards, on-demand | ~$1,800 |
| Amazon MQ | mq.m5.xlarge active/standby | ~$900 (fixed, regardless of volume) |

SQS, alone or behind SNS for fan-out, is by far the cheapest serverless option. EventBridge costs more but includes routing, transformation, and archiving. MSK and Kinesis costs depend heavily on throughput and retention settings.
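The SQS and EventBridge rows can be reproduced from list prices. The sketch below assumes $0.40 per million SQS Standard requests and $1.00 per million EventBridge custom events (us-east-1 list prices at the time of writing; check current pricing). It also assumes messages under 64 KB and ignores the free tier, empty receives, and data transfer.

```python
EVENTS_PER_MONTH = 100_000_000 * 30  # 100 million events/day

# Assumed us-east-1 list prices at the time of writing; verify before relying on them.
SQS_STANDARD_PER_MILLION_REQUESTS = 0.40
EVENTBRIDGE_PER_MILLION_CUSTOM_EVENTS = 1.00


def sqs_monthly_cost(messages: int, batch_size: int = 10) -> float:
    # Every message is sent, received, and deleted: three API calls. A batch call
    # carrying up to 10 messages (64 KB or less in total) bills as one request.
    requests = 3 * messages / batch_size
    return requests / 1_000_000 * SQS_STANDARD_PER_MILLION_REQUESTS


def eventbridge_monthly_cost(events: int) -> float:
    # Each custom event of 64 KB or less bills as one event, batched or not.
    return events / 1_000_000 * EVENTBRIDGE_PER_MILLION_CUSTOM_EVENTS


batched = sqs_monthly_cost(EVENTS_PER_MONTH)
unbatched = sqs_monthly_cost(EVENTS_PER_MONTH, batch_size=1)
eventbridge = eventbridge_monthly_cost(EVENTS_PER_MONTH)
```

Batched, SQS comes to about $360, in line with the ~$400 row; unbatched, the same traffic costs ten times as much. EventBridge has no batching discount, which is where its ~$3,000 comes from.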

Monitoring and Observability

Every messaging service requires monitoring. Messages in flight, delivery latency, error rates, and queue depth are the vital signs of an event-driven system.

Key Metrics by Service

| Service | Critical Metrics | Alert Threshold |
|---|---|---|
| SQS | ApproximateNumberOfMessagesVisible, ApproximateAgeOfOldestMessage | Visible > 10,000, Age > 300 sec |
| SNS | NumberOfNotificationsFailed, NumberOfNotificationsDelivered | Failed > 0 sustained for 5 min |
| EventBridge | FailedInvocations, ThrottledRules | FailedInvocations > 0, ThrottledRules > 0 |
| MSK | UnderReplicatedPartitions, OfflinePartitionsCount, consumer lag | UnderReplicated > 0, lag growing |
| Amazon MQ | CpuUtilization, CurrentConnectionsCount, StorePercentUsage | CPU > 80%, Store > 80% |

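The single most important metric for SQS-based systems is ApproximateAgeOfOldestMessage. When this number climbs, your consumers are falling behind. Fix it before the backlog becomes unrecoverable: once a message's age reaches the queue's retention period, SQS deletes it whether or not it was processed.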
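Below is a minimal sketch of an alarm on that metric, using the 300-second threshold from the table. It is built as keyword arguments for boto3's CloudWatch `put_metric_alarm`. The queue name is hypothetical, and the alarm action (for example, an SNS topic ARN in `AlarmActions`) is left for you to add.

```python
from typing import Any


def oldest_message_age_alarm(queue_name: str, threshold_seconds: int = 300) -> dict[str, Any]:
    """Keyword arguments for CloudWatch PutMetricAlarm on an SQS queue's backlog age.

    Intended use: boto3.client("cloudwatch").put_metric_alarm(**kwargs)
    """
    return {
        "AlarmName": f"{queue_name}-oldest-message-age",
        "Namespace": "AWS/SQS",
        "MetricName": "ApproximateAgeOfOldestMessage",
        "Dimensions": [{"Name": "QueueName", "Value": queue_name}],
        "Statistic": "Maximum",
        "Period": 60,
        # Five consecutive breaching minutes: a sustained backlog, not one slow batch.
        "EvaluationPeriods": 5,
        "Threshold": float(threshold_seconds),
        "ComparisonOperator": "GreaterThanThreshold",
        # Idle queues can stop reporting datapoints; treat the gap as healthy.
        "TreatMissingData": "notBreaching",
    }


alarm_kwargs = oldest_message_age_alarm("orders-queue")  # hypothetical queue name
```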

For EventBridge, FailedInvocations is the critical signal. A sustained non-zero value means events are being dropped (or landing in DLQs if configured). Investigate immediately.

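For MSK, consumer lag is everything. If a consumer group's lag grows steadily, that consumer cannot keep up with the production rate. You have three options. Scale out the consumer group, which helps only up to one consumer per partition. Optimize processing. Or add partitions, which remaps keys to partitions.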
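Lag is the log-end offset minus the group's committed offset, summed over partitions. The alert condition is a trend rather than a level. The sketch below assumes you have already fetched both offset maps (for example, with Kafka's admin tooling) and that you sample total lag at a fixed interval. `partition_lag` and `lag_is_growing` are hypothetical helpers.

```python
def partition_lag(end_offsets: dict[int, int], committed: dict[int, int]) -> dict[int, int]:
    """Per-partition lag: log-end offset minus the group's committed offset."""
    return {p: end - committed[p] for p, end in end_offsets.items()}


def lag_is_growing(total_lag_samples: list[int], intervals: int = 5) -> bool:
    """True if total lag rose in each of the last `intervals` sampling intervals."""
    recent = total_lag_samples[-(intervals + 1):]
    if len(recent) < intervals + 1:
        return False  # not enough history to call it a trend
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


lag = partition_lag({0: 1500, 1: 900, 2: 2100}, {0: 1200, 1: 900, 2: 2000})
total = sum(lag.values())
```

A large but stable lag means the consumer is keeping pace with an existing backlog. A lag that rises interval after interval means it is not.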

Key Patterns

- Default to SNS + SQS for fan-out. It is the cheapest, simplest, and most battle-tested pattern in AWS.

- Use EventBridge for cross-service event routing. Content-based routing, transformation, and archive/replay justify the higher cost.

- Always configure dead-letter queues on SQS queues, SNS subscriptions, and EventBridge rule targets. Messages that fail silently are the hardest bugs to diagnose.

- Enable ReportBatchItemFailures on Lambda-SQS integrations. A single failed message should not reprocess an entire batch.

- Set MaximumConcurrency on Lambda-SQS consumers. Protect downstream services from uncontrolled scaling.

- Use FIFO queues only when ordering is required. The throughput ceiling and operational constraints are significant.

- Avoid Amazon MQ for new applications. SNS/SQS/EventBridge are cheaper, simpler, and fully serverless.

- Choose MSK over Kinesis when you need Kafka ecosystem compatibility or infinite retention.

- Monitor queue depth and consumer lag. These are the leading indicators of event-driven system health.

- Batch everything. SQS batch APIs, SNS batch publish, Kinesis PutRecords. Batching reduces cost and increases throughput.
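The first two patterns come together in the handler. With ReportBatchItemFailures enabled, an SQS-triggered Lambda function returns the IDs of the messages that failed, and only those messages return to the queue. Below is a minimal sketch of such a handler; the business logic in `process` is hypothetical.

```python
import json
from typing import Any


def process(order: dict[str, Any]) -> None:
    """Hypothetical business logic: rejects orders without an ID."""
    if "orderId" not in order:
        raise ValueError("missing orderId")


def handler(event: dict[str, Any], context: Any) -> dict[str, list[dict[str, str]]]:
    """SQS-triggered Lambda handler that returns a partial batch response."""
    failures: list[dict[str, str]] = []
    for record in event["Records"]:
        try:
            process(json.loads(record["body"]))
        except Exception:
            # Only this message becomes visible again (and moves to the DLQ once
            # maxReceiveCount is exceeded, if a redrive policy is configured).
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

If ReportBatchItemFailures is not enabled on the event source mapping, Lambda ignores this response, and a normal return marks the whole batch as processed. For FIFO queues, the Lambda documentation advises stopping at the first failure and reporting that message and every message after it, which preserves ordering within a message group.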


Let's Build Something!

I help teams ship cloud infrastructure that actually works at scale. Whether you're modernizing a legacy platform, designing a multi-region architecture from scratch, or figuring out how AI fits into your engineering workflow, I've seen your problem before. Let me help.

Currently taking on select consulting engagements through Vantalect.