I keep running into the same mistake across teams. They treat their build tool and their pipeline orchestrator as one thing. They'll jam deployment logic into CodeBuild buildspec files or Jenkins jobs, and six months later nobody can explain why a release failed or who approved what. The release process turns brittle, opaque, impossible to audit. CodePipeline exists to fix this. It coordinates builds, tests, and deployments into a workflow you can actually observe and reason about, with clearly defined gates, approvals, and rollback boundaries.

This is an architecture reference. I'm assuming you already understand CI/CD concepts and need to design production-grade pipelines on AWS. I'll cover CodePipeline's internals, pipeline structure, source integrations, action types, artifact management, cross-account patterns, execution modes, security, cost, common failure modes, and how CodePipeline fits with CodeBuild and CodeDeploy. The AWS docs handle console walkthroughs and "Hello World" pipelines well enough. What I want to dig into here are the architectural decisions that determine whether your pipelines actually scale.

What CodePipeline Actually Is

CodePipeline is a fully managed CI/CD orchestration service. Orchestration is the key word here. It doesn't compile your code. Doesn't run your tests. Doesn't push your containers. It coordinates the services that do those things: managing artifact flow between stages, enforcing execution order, handling approvals, giving you a single view of the entire release process. Think conductor, not musician.

That's what separates it from Jenkins. In Jenkins, the pipeline runner is also the build executor. CodePipeline's separation of concerns keeps it lightweight and highly available. Your pipeline definition describes what happens and in what order; the actual work gets delegated to action providers like CodeBuild, CodeDeploy, CloudFormation, Lambda, and others.

So how does it stack up against other orchestrators?

| Dimension | CodePipeline | Jenkins Pipeline | GitHub Actions | GitLab CI/CD |
|---|---|---|---|---|
| Managed infrastructure | Fully managed, no servers | Self-hosted or CloudBees managed | Fully managed (GitHub-hosted runners) or self-hosted | Fully managed (SaaS) or self-hosted |
| Event-driven triggers | EventBridge, webhooks, S3, ECR | Webhooks, polling, cron | Push, PR, schedule, workflow_dispatch | Push, merge request, schedule, API |
| Artifact management | S3-based, KMS-encrypted, automatic | Plugin-dependent (Artifactory, S3) | GitHub Artifacts API, 90-day retention | Job artifacts, configurable retention |
| Parallel stages | Parallel actions within a stage | Parallel stages via parallel block | Matrix strategy, concurrent jobs | parallel keyword, DAG pipelines |
| Approval gates | Native manual approval action | Input step or plugin | Environment protection rules | Manual job gates |
| Cross-account deployment | Native IAM role assumption | Credential management plugins | OIDC federation to AWS | OIDC federation or credential variables |
| Native AWS integrations | Deep (CodeBuild, CodeDeploy, CloudFormation, ECS, S3, Lambda, Step Functions) | Via plugins | Via AWS-published actions | Via CI templates and scripts |
| Pricing | Per-pipeline (V1) or per-action-minute (V2) | Free (open source) + infrastructure cost | Per-minute for GitHub-hosted runners | Per-minute for SaaS runners |
| Execution modes | SUPERSEDED, QUEUED, PARALLEL | Concurrent builds configurable | Concurrency controls per workflow | Concurrency via resource groups |
| Pipeline-as-code | CloudFormation, CDK, Terraform | Jenkinsfile (Groovy DSL) | YAML workflow files | .gitlab-ci.yml |

If your deployment targets live in AWS, nothing else gives you tighter integration with IAM, CloudFormation, ECS, and CodeDeploy. That's the differentiator. Multi-cloud shops might get more mileage from GitHub Actions or GitLab, sure. For AWS-centric organizations, though, CodePipeline earns its keep.

Architecture Internals

Five core concepts drive CodePipeline's architecture: pipelines, stages, actions, transitions, and artifacts. A pipeline is the top-level container, holding an ordered sequence of stages. Each stage holds one or more actions, with execution order controlled by runOrder (actions sharing the same runOrder execute in parallel; sequential ordering uses incrementing runOrder values). Transitions connect stages; you can enable or disable them to pause flow. Artifacts carry data between actions.
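To make the runOrder rule concrete, here is a minimal sketch in plain Python. It is a toy model of the scheduling rule, not CodePipeline's actual scheduler, and the action names are hypothetical. Actions that share a runOrder land in the same wave and run in parallel; waves run in ascending order.

```python
from collections import defaultdict


def execution_waves(actions):
    """Group a stage's actions into waves.

    Actions with the same runOrder share a wave (parallel execution);
    waves are returned in ascending runOrder (sequential execution).
    """
    by_order = defaultdict(list)
    for action in actions:
        # An action without an explicit runOrder defaults to 1.
        by_order[action.get("runOrder", 1)].append(action["name"])
    return [sorted(by_order[order]) for order in sorted(by_order)]


# Hypothetical Test stage: three independent checks, then a report step.
test_stage = [
    {"name": "UnitTests", "runOrder": 1},
    {"name": "SecurityScan", "runOrder": 1},
    {"name": "IntegrationTests", "runOrder": 1},
    {"name": "PublishReport", "runOrder": 2},
]

for wave_number, wave in enumerate(execution_waves(test_stage), start=1):
    print(wave_number, wave)
```

The first wave holds the three checks; PublishReport only starts once all of them have succeeded.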

Every execution gets a unique execution ID. CodePipeline tracks each stage and action independently within that execution, which makes failure diagnostics straightforward. You see exactly where things broke. And why.

When CodePipeline first launched, there was only one pipeline type (now called V1). AWS introduced V2 pipelines in 2023 with substantial improvements. V2 is the default for new pipelines, and I use it exclusively. I'd recommend the same. V1 still works, but treat it as legacy.

| Dimension | V1 Pipeline | V2 Pipeline |
|---|---|---|
| Triggers | Provider-specific change detection (webhooks, EventBridge, polling) | Trigger configuration with push, PR, and tag filters (connection-based sources) |
| Execution modes | SUPERSEDED only | SUPERSEDED, QUEUED, PARALLEL |
| Pipeline variables | Not supported | Pipeline-level variables and action output variables |
| Manual rollback | Not supported | Supported (re-executes a previous successful execution; actual rollback behavior depends on the deployment provider) |
| Git tags | Not supported as trigger | Supported as trigger filter |
| Branch filtering | Not supported | Glob pattern filtering on branches |
| File path filtering | Not supported | Include/exclude file path patterns |
| Pricing model | $1.00/active pipeline/month | $0.002/action execution minute |
| Max pipelines per region | 1,000 | 1,000 |
| Max stages per pipeline | 50 | 50 |

V2 wins on every dimension that matters in production. QUEUED mode alone justifies migrating; it prevents the race conditions that plague V1 when commits land in quick succession.

Pipeline Structure

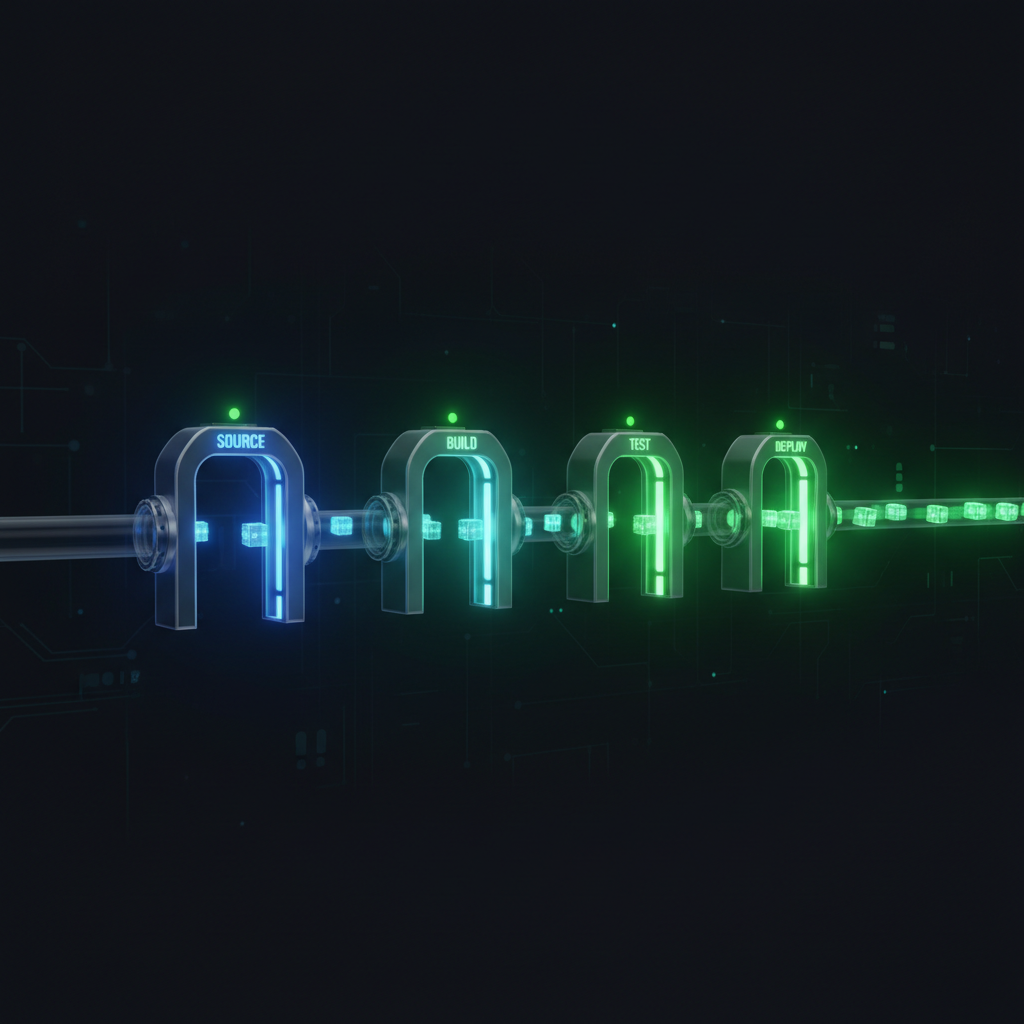

Same skeleton in every well-designed pipeline I've built: Source, Build, Test, Approve, Deploy. Application type and org maturity change the details. The skeleton holds. Stages execute sequentially, meaning Stage 2 waits until every action in Stage 1 succeeds. Within a stage, actions sharing the same run order number execute in parallel. That's how you keep pipeline duration sane.

Sequential stages are a deliberate design choice. Each stage acts as a quality gate. Code compiles before tests run. Tests pass before the artifact gets approval. Approver signs off before staging deployment. Staging succeeds before production. Trying to parallelize these concerns reintroduces the exact risk that pipeline design is supposed to eliminate.

Inside a stage, though, parallelism pays off. Unit tests, integration tests, security scans: if they're independent, run them as parallel actions within a single Test stage. Pipeline duration drops without sacrificing quality gates.

| Stage Type | Purpose | Common Action Providers | Typical Configuration |
|---|---|---|---|
| Source | Fetch code from repository | CodeCommit, GitHub (CodeConnections), Bitbucket, S3, ECR | Branch filter, change detection, output artifact |
| Build | Compile code, produce artifacts | CodeBuild, Jenkins | buildspec.yml, compute type, environment image |
| Test | Run test suites, quality checks | CodeBuild, Device Farm, third-party | Test reports, parallel test actions |
| Approval | Human gate before deployment | Manual Approval | SNS notification topic, approval comments, timeout |
| Deploy (Staging) | Deploy to non-production environment | CodeDeploy, CloudFormation, ECS, S3, Elastic Beanstalk | Deployment group, deployment config, stack parameters |
| Deploy (Production) | Deploy to production environment | CodeDeploy, CloudFormation, ECS, S3, Elastic Beanstalk | Blue/green config, rollback triggers, alarms |

I separate Build and Test stages even when both use CodeBuild. Costs nothing extra, and the diagnostic value is real. Something fails? You immediately know whether it's a compilation issue or a test failure. It also lets you add parallel test actions later without touching your build configuration.

Source Integrations

Every pipeline starts at the source stage. Get this wrong and everything downstream suffers. CodePipeline supports several source providers, each with different connection mechanics and trade-offs.

For GitHub, AWS now recommends CodeConnections (formerly CodeStar Connections). It's an app-based connection that replaces the older OAuth-based GitHub integration. No more personal access tokens floating around. You create the connection once and reuse it across pipelines. I switched all my pipelines to CodeConnections when it became available and haven't looked back.

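V2 pipelines default to event-based change detection: EventBridge rules for AWS-native sources such as S3 and ECR, and connection-managed webhooks for Git providers. Push-based, near-instantaneous. Polling, which older V1 pipelines often still rely on? Up to a minute of latency and API calls for no good reason. That alone made V2 worth adopting early.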

| Source Provider | Connection Type | Trigger Mechanism | Branch Support | Limitations |
|---|---|---|---|---|
| GitHub | CodeConnections (app-based) | Connection webhook (push events) | Branch, tag, PR filters | Requires initial OAuth consent for connection setup |
| CodeCommit | Native AWS integration | EventBridge push events | Branch filter | CodeCommit is no longer available to new customers |
| Bitbucket Cloud | CodeConnections | Connection webhook (push events) | Branch, tag, PR filters | Bitbucket Server requires self-managed webhook |
| GitHub Enterprise Server | CodeConnections (host-based) | Connection webhook (push events) | Branch, tag, PR filters | Requires VPC connectivity or public endpoint |
| Amazon S3 | Native AWS integration | EventBridge on object upload | N/A (object key based) | Must be versioned bucket; object must be zip or tar.gz |
| Amazon ECR | Native AWS integration | EventBridge on image push | Image tag filter | Triggers on image push only; ignores tag deletion |

S3 and ECR as source providers deserve a mention. S3 works well for pipelines triggered by artifact uploads from external systems. ECR enables container-first pipelines where an image push kicks off the deployment workflow. I've leaned on the ECR source provider heavily in microservices setups. Each service has its own build pipeline producing a container image, and a separate deployment pipeline fires when that image lands in ECR. Clean separation.

Action Types

Actions do the actual work. Every operation in a pipeline is an action: fetching source code, running a build, executing tests, deploying an application, requesting approval, invoking a Lambda function. Each action belongs to a category, has a provider, and specifies input and output artifacts.

| Category | Provider | Description |
|---|---|---|
| Source | CodeCommit | Fetches code from a CodeCommit repository |
| Source | CodeStarSourceConnection | Fetches code from GitHub, GitHub Enterprise, or Bitbucket |
| Source | S3 | Fetches a zip or tar.gz artifact from an S3 bucket |
| Source | ECR | Triggers on a new container image push to ECR |
| Build | CodeBuild | Runs a build project defined by a buildspec.yml |
| Build | Jenkins | Delegates build to a Jenkins server (requires plugin) |
| Test | CodeBuild | Runs test commands in a CodeBuild project |
| Test | Device Farm | Runs mobile application tests on real devices |
| Test | Third-party | BlazeMeter, Ghost Inspector, Micro Focus StormRunner |
| Deploy | CodeDeploy | Deploys to EC2, on-premises, Lambda, or ECS via CodeDeploy |
| Deploy | CloudFormation | Creates, updates, or deletes CloudFormation stacks |
| Deploy | CloudFormation StackSets | Deploys stacks across multiple accounts and regions |
| Deploy | ECS | Deploys a new task definition to an ECS service |
| Deploy | ECS (Blue/Green) | Blue/green deployment to ECS via CodeDeploy |
| Deploy | S3 | Deploys files to an S3 bucket (static websites, artifacts) |
| Deploy | Elastic Beanstalk | Deploys to an Elastic Beanstalk environment |
| Deploy | AppConfig | Deploys configuration via AWS AppConfig |
| Approval | Manual Approval | Pauses pipeline for human approval via SNS notification |
| Invoke | Lambda | Invokes a Lambda function with pipeline context |
| Invoke | Step Functions | Starts a Step Functions state machine execution |

Custom actions exist for integrating third-party tools that lack native support. You define a custom action type with its input/output artifact structure and a job worker that polls CodePipeline. I almost never use them, honestly. Lambda invoke actions cover the same ground with less operational overhead.

Lambda invoke actions are where I spend most of my integration effort. Conditional stage execution (checking whether a deployment is actually needed based on which files changed), Slack notifications with rich formatting, pre-deployment database migration validation, post-deployment smoke tests. The Lambda function receives the pipeline execution context and signals success or failure back to CodePipeline. You get complete control over pipeline flow. No custom action infrastructure to maintain.
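The success/failure handshake is the part people get wrong, so here is a minimal handler sketch, not a production implementation. The `migration_validated` flag and the optional `client` parameter (there only so the handler can be exercised without AWS) are my own illustrative assumptions. The job ID and `UserParameters` locations follow the event shape CodePipeline sends to Lambda invoke actions, and the two `put_job_*_result` calls are the boto3 CodePipeline client methods for reporting back.

```python
import json


def handler(event, context, client=None):
    """Lambda invoke action: report success or failure back to CodePipeline."""
    if client is None:
        import boto3  # bundled with the Lambda Python runtime

        client = boto3.client("codepipeline")

    job = event["CodePipeline.job"]
    job_id = job["id"]
    try:
        # UserParameters is a free-form string set on the action; this
        # sketch assumes the pipeline author put a JSON object there.
        configuration = job["data"]["actionConfiguration"]["configuration"]
        params = json.loads(configuration.get("UserParameters", "{}"))

        # Hypothetical gate: fail the action unless the flag is set.
        if not params.get("migration_validated", False):
            raise ValueError("database migration has not been validated")

        client.put_job_success_result(jobId=job_id)
        return "succeeded"
    except Exception as exc:
        # Always report failure explicitly; otherwise the action just
        # sits in progress until it times out.
        client.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": str(exc)},
        )
        return "failed"
```

The one rule I hold to: every code path ends in exactly one of the two result calls. A function that returns without reporting leaves the pipeline hanging.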

Artifact Management

Artifacts carry data between pipeline actions. Every pipeline has an artifact store: an S3 bucket in the pipeline's region. When an action produces output, CodePipeline uploads it to this bucket. Downstream action needs that output? CodePipeline downloads it. S3 as the backing store means artifacts are durable, encrypted, and accessible regardless of compute environment.

Each artifact is stored as a zip file, organized by pipeline name and execution ID. Handy for post-mortem debugging: you can pull an artifact from a failed execution and inspect it directly. S3 lifecycle policies handle retention. CodePipeline will create and manage the artifact bucket for you by default, but I always create my own. I want explicit bucket policies, lifecycle rules, and KMS encryption configuration that I control.

| Configuration | Purpose | Default |
|---|---|---|
| Artifact bucket | S3 bucket for storing pipeline artifacts | Auto-created by CodePipeline (codepipeline-{region}-{account-id}) |
| Encryption key | KMS key for artifact encryption at rest | AWS-managed key (aws/s3) |
| Namespace | Artifact namespace within the bucket | Pipeline name and execution ID |
| Input artifacts | Artifacts consumed by an action | Defined per action (0 to 5 inputs) |
| Output artifacts | Artifacts produced by an action | Defined per action (0 to 5 outputs) |
| Artifact size limit | Maximum size per artifact | No hard limit, but S3 multipart applies above 5 GB |

Artifact encryption happens transparently via KMS. The default AWS-managed key works fine for single-account pipelines. Cross-account pipelines need a customer-managed KMS key (CMK) so you can grant decrypt permissions to roles in other accounts through the key policy. I've lost more hours debugging this specific issue than I care to admit. More on this in the Security Architecture section.

Multi-region pipelines add a wrinkle. Each region where actions execute needs its own artifact store, and CodePipeline replicates artifacts between them. The console creates those regional buckets for you; with CloudFormation, CDK, or the CLI you declare them yourself. The replication is transparent, but you'll pay for it in latency and S3 cross-region data transfer charges.

Cross-Account and Cross-Region Pipelines

Any serious AWS environment uses multiple accounts for environment isolation. Typical layout: a shared-services account for CI/CD tooling, separate accounts for development, staging, and production. CodePipeline handles cross-account deployments natively. The IAM configuration, though? It will test your patience.

Here's how it works. The pipeline runs in the CI/CD account. When a deploy action targets a different account, the pipeline's service role assumes a cross-account role in that target account. That role carries the permissions needed for the deployment (updating an ECS service, executing a CodeDeploy deployment, updating a CloudFormation stack). Two trust relationships must be correct: the cross-account role must trust the pipeline's service role, and the pipeline's service role must have sts:AssumeRole permission for that cross-account role. Get either one wrong and you get an AccessDenied error with minimal context.
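The two halves of that relationship can be sketched as plain policy documents. This is a minimal illustration that builds the JSON in Python; the account IDs and role names are placeholders I made up, and a real role would carry additional statements.

```python
import json


def deploy_role_trust_policy(pipeline_role_arn):
    """Trust policy for the deploy role in the TARGET account.

    It trusts one specific role, not the whole CI/CD account.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": pipeline_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def pipeline_assume_role_statement(deploy_role_arns):
    """Statement for the pipeline service role in the CI/CD account."""
    return {
        "Effect": "Allow",
        "Action": "sts:AssumeRole",
        "Resource": sorted(deploy_role_arns),
    }


# Placeholder ARNs (hypothetical accounts and role names).
PIPELINE_ROLE = "arn:aws:iam::111111111111:role/pipeline-service-role"
DEPLOY_ROLES = [
    "arn:aws:iam::333333333333:role/pipeline-deploy-prod",
    "arn:aws:iam::222222222222:role/pipeline-deploy-staging",
]

print(json.dumps(deploy_role_trust_policy(PIPELINE_ROLE), indent=2))
print(json.dumps(pipeline_assume_role_statement(DEPLOY_ROLES), indent=2))
```

When AccessDenied shows up, I diff these two documents against what is actually deployed. One side almost always names the wrong ARN.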

The artifact bucket is the other piece that trips people up. Cross-account roles need to download deployment artifacts from the CI/CD account's artifact bucket. You need both an S3 bucket policy granting access to those roles and a KMS key policy granting them decrypt permissions. Miss either one and the deploy action fails, usually with an Access Denied that doesn't say which policy was at fault.
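Here is a sketch of the two resource-policy grants, again as Python that emits policy JSON. I'm assuming the deploy roles only read artifacts; an action that writes output artifacts would also need s3:PutObject and kms:GenerateDataKey. The bucket name, Sids, and role ARNs are placeholders.

```python
import json


def artifact_bucket_statements(bucket_name, deploy_role_arns):
    """Bucket policy statements letting cross-account roles read artifacts."""
    principals = {"AWS": sorted(deploy_role_arns)}
    return [
        {
            "Sid": "CrossAccountArtifactRead",
            "Effect": "Allow",
            "Principal": principals,
            "Action": ["s3:GetObject", "s3:GetObjectVersion"],
            "Resource": f"arn:aws:s3:::{bucket_name}/*",
        },
        {
            "Sid": "CrossAccountBucketList",
            "Effect": "Allow",
            "Principal": principals,
            "Action": "s3:ListBucket",
            "Resource": f"arn:aws:s3:::{bucket_name}",
        },
    ]


def artifact_key_statement(deploy_role_arns):
    """Key policy statement on the customer-managed artifact key."""
    return {
        "Sid": "CrossAccountArtifactDecrypt",
        "Effect": "Allow",
        "Principal": {"AWS": sorted(deploy_role_arns)},
        "Action": ["kms:Decrypt", "kms:DescribeKey"],
        # In a key policy, "*" refers to the key the policy is attached to.
        "Resource": "*",
    }


# Placeholder values (hypothetical).
roles = ["arn:aws:iam::222222222222:role/pipeline-deploy-staging"]
print(json.dumps(artifact_bucket_statements("my-pipeline-artifacts", roles), indent=2))
print(json.dumps(artifact_key_statement(roles), indent=2))
```

Generating both documents from the same role list is the point. The bucket policy and the key policy drift apart when they are edited by hand.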

| Role | Account | Trust Relationship | Key Permissions |
|---|---|---|---|
| Pipeline Service Role | CI/CD account | Trusts CodePipeline service (codepipeline.amazonaws.com) | S3 (artifact bucket), CodeBuild, CodeDeploy, KMS, sts:AssumeRole on cross-account roles |
| Cross-Account Deploy Role | Staging account | Trusts Pipeline Service Role in CI/CD account | CodeDeploy, ECS, CloudFormation, S3 (artifact bucket read), KMS decrypt |
| Cross-Account Deploy Role | Production account | Trusts Pipeline Service Role in CI/CD account | CodeDeploy, ECS, CloudFormation, S3 (artifact bucket read), KMS decrypt |
| CodeBuild Service Role | CI/CD account | Trusts CodeBuild service (codebuild.amazonaws.com) | S3 (artifact bucket), ECR, CloudWatch Logs, KMS, any build-time resources |

Cross-region adds yet another layer. Actions executing in a different region need an artifact bucket in that region, and CodePipeline replicates artifacts into it. KMS keys are regional, so each regional artifact store needs its own key (a separate regional key or a multi-Region key replica), with the same cross-account grants in its key policy. My strong preference: keep pipelines single-region whenever possible. Use cross-account for environment isolation. Cross-region pipelines are solvable, but the operational complexity rarely justifies itself.

Pipeline Triggers and Execution Modes

V2 pipelines brought a trigger system that actually works for real-world scenarios. You can filter on branches, tags, file paths, and pull request events. In monorepo architectures where a single repository holds multiple services, these filters are table stakes. Each service's pipeline triggers only when its files change. Without this, every commit fires every pipeline. I lived with that pain on V1 for way too long.

Execution modes don't get the attention they deserve. They control what happens when a new execution triggers while a previous one is still running. This choice directly affects deployment reliability.

| Execution Mode | Behavior | Best Use Case | Caveats |
|---|---|---|---|
| SUPERSEDED | New execution cancels the in-progress execution | Development branches where only the latest commit matters | In-flight deployments may be interrupted mid-stage; can leave resources in inconsistent state |
| QUEUED | New execution waits until the current execution completes | Production pipelines where every commit must be deployed in order | Queue can grow during high-commit periods; execution order is strictly preserved |
| PARALLEL | Multiple executions run concurrently and independently | Pipelines with no shared mutable state (e.g., per-PR preview environments) | No ordering guarantees; concurrent deployments to the same target can conflict |

QUEUED mode for production. Always. I don't compromise on this. SUPERSEDED mode can kill a deployment mid-execution and leave your infrastructure half-updated. I've seen this happen on a Friday afternoon with a CloudFormation stack stuck in UPDATE_IN_PROGRESS. Not fun. PARALLEL mode works well for ephemeral environments (per-branch, per-PR) where each execution targets something independent.

V2 trigger filters give you precise control over what kicks off a pipeline.

| Filter Type | Syntax | Example |
|---|---|---|
| Branch include | Glob pattern on branch name | `main`, `release/*`, `feature/**` |
| Branch exclude | Glob pattern to exclude branches | `dependabot/*`, `test-*` |
| File path include | Glob pattern on changed file paths | `services/api/**`, `infrastructure/**/*.tf` |
| File path exclude | Glob pattern to exclude file paths | `docs/**`, `*.md`, `**/*.test.js` |
| Tag include | Glob pattern on Git tags | `v*`, `release-*`, `v[0-9]*.[0-9]*.[0-9]*` |

File path filters changed how I structure monorepo pipelines entirely. Before them, every commit triggered every pipeline. With path filters, each pipeline scopes to its own service directory. I've run this pattern across organizations with 10 to 20 microservices in a single repository. Each service gets its own pipeline, triggered only by changes under its directory. Dramatic noise reduction.
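The per-service pattern can be sketched as the fragment of a V2 pipeline declaration that carries the trigger and execution mode. Treat it as an illustration: the field names follow my reading of the pipeline structure accepted by the CodePipeline API and should be checked against the current reference, and the service directory, branch, and source action name are hypothetical.

```python
import json


def service_pipeline_settings(service_dir, source_action="Source"):
    """Trigger and execution-mode fragment for one service's V2 pipeline.

    The pipeline starts only for pushes to main that touch files under
    service_dir, ignoring Markdown-only changes.
    """
    return {
        "pipelineType": "V2",
        "executionMode": "QUEUED",
        "triggers": [
            {
                "providerType": "CodeStarSourceConnection",
                "gitConfiguration": {
                    # Must match the name of the source action in the pipeline.
                    "sourceActionName": source_action,
                    "push": [
                        {
                            "branches": {"includes": ["main"]},
                            "filePaths": {
                                "includes": [f"{service_dir}/**"],
                                "excludes": ["**/*.md"],
                            },
                        }
                    ],
                },
            }
        ],
    }


# One settings fragment per service directory in the monorepo.
for directory in ["services/api", "services/billing"]:
    print(json.dumps(service_pipeline_settings(directory), indent=2))
```

Generating the fragment from the directory name keeps 10 to 20 near-identical pipelines from drifting apart.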

Security Architecture

IAM roles, S3 bucket policies, KMS key policies. Those three form CodePipeline's security model. Misconfiguration is the single most common source of pipeline failures I debug. Also the most frustrating, because the error messages rarely tell you which permission is missing.

The pipeline service role is what CodePipeline assumes to orchestrate everything. It needs permissions for every service the pipeline touches: reading from the source provider, starting CodeBuild projects, creating CodeDeploy deployments, managing artifacts in S3, encrypting and decrypting with KMS, assuming cross-account roles. Least privilege matters here. I've walked into organizations where the pipeline service role had AdministratorAccess because someone got tired of fighting IAM errors. That's a serious security exposure.

| Relationship | Required Permissions | Key Policy Considerations |
|---|---|---|
| Pipeline to S3 (artifacts) | s3:GetObject, s3:PutObject, s3:GetBucketVersioning, s3:GetBucketLocation | Bucket policy must allow pipeline role; cross-account roles need explicit bucket policy grants |
| Pipeline to CodeBuild | codebuild:BatchGetBuilds, codebuild:StartBuild, codebuild:StopBuild | CodeBuild service role is separate from pipeline role; CodeBuild role needs artifact bucket access |
| Pipeline to CodeDeploy | codedeploy:CreateDeployment, codedeploy:GetDeployment, codedeploy:RegisterApplicationRevision | CodeDeploy service role needs EC2/ECS permissions depending on deployment target |
| Pipeline to CloudFormation | cloudformation:CreateStack, cloudformation:UpdateStack, cloudformation:DescribeStacks, iam:PassRole | The pipeline passes the CloudFormation execution role; that role needs permissions for all resources in the template |
| Pipeline to KMS | kms:Decrypt, kms:DescribeKey, kms:GenerateDataKey*, kms:ReEncrypt* | Key policy must grant pipeline role and all cross-account roles encrypt/decrypt access |
| Pipeline to cross-account roles | sts:AssumeRole on each cross-account role ARN | Cross-account roles must have trust policies that trust the pipeline service role ARN specifically (scope trust to the role, never to the entire CI/CD account) |

Here's the subtlety that catches people. When CodePipeline passes artifacts to CodeBuild, the CodeBuild service role is the one reading from and writing to the artifact bucket. Not the pipeline role. So the CodeBuild service role needs its own S3 and KMS permissions for that bucket. Same story for CodeDeploy. Every service in the chain needs independent permissions to the artifact bucket. Miss one link and you get a cryptic "Access Denied" with no indication of which role or which permission failed. I now go through a full permission chain checklist before deploying any cross-account pipeline. Every time.

On cross-account trust: trust the specific pipeline service role ARN. Never the entire CI/CD account. Trusting the account means any role in that account can assume your deploy role. Defeats the entire purpose of environment isolation.

Cost Model

V1 and V2 pricing models differ substantially. V1 charges a flat monthly fee per pipeline. V2 charges per action execution minute. Which costs less? Depends on how many pipelines you have and how often they run.

V1: $1.00 per active pipeline per month. One free pipeline in the free tier. A pipeline counts as "active" if it's existed for more than 30 days and had at least one code change run through it during the month.

V2: $0.002 per action execution minute. So if your pipeline has 6 actions and each runs for 2 minutes, one execution costs 12 action minutes, or $0.024. Manual approval wait time and disabled transitions don't count.

| Scenario | Pipelines | Total Executions/Month (all pipelines) | Actions/Pipeline | Avg Action Duration | V1 Monthly Cost | V2 Monthly Cost |
|---|---|---|---|---|---|---|
| Solo developer | 2 | 30 | 4 | 2 min | $2.00 | $0.48 |
| Small team | 10 | 200 | 5 | 3 min | $10.00 | $6.00 |
| Mid-size organization | 50 | 1,000 | 6 | 3 min | $50.00 | $36.00 |
| Large org, low frequency | 200 | 2,000 | 6 | 3 min | $200.00 | $72.00 |
| Large org, high frequency | 200 | 10,000 | 8 | 4 min | $200.00 | $640.00 |

Look at the crossover. Organizations with many pipelines that execute infrequently (infrastructure pipelines, stable services that rarely change) save substantially on V2. High-frequency pipelines with trunk-based development can make V1's flat rate cheaper. The break-even is 500 action minutes per pipeline per month ($1.00 divided by $0.002). But V2's features (execution modes, triggers, variables) almost always justify paying more. The only scenario where I'd still pick V1 is a pipeline that runs well past that break-even: hundreds of monthly executions per pipeline with many long-running actions. Even then, V2's operational benefits usually win.
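The arithmetic is simple enough to script. This sketch uses only the two list prices quoted above and deliberately ignores free-tier allowances; the function names are mine. "Executions" means total executions per month across all pipelines, and every pipeline is assumed to be active.

```python
from decimal import Decimal

V1_PER_ACTIVE_PIPELINE = Decimal("1.00")  # USD per active pipeline per month
V2_PER_ACTION_MINUTE = Decimal("0.002")   # USD per action execution minute


def v1_monthly_cost(active_pipelines):
    """Flat V1 fee: one charge per active pipeline."""
    return V1_PER_ACTIVE_PIPELINE * active_pipelines


def v2_monthly_cost(total_executions, actions_per_pipeline, avg_action_minutes):
    """Usage-based V2 fee: executions x actions x minutes x rate."""
    action_minutes = total_executions * actions_per_pipeline * avg_action_minutes
    return V2_PER_ACTION_MINUTE * action_minutes


def break_even_action_minutes():
    """Action minutes per pipeline per month at which V1 and V2 cost the same."""
    return int(V1_PER_ACTIVE_PIPELINE / V2_PER_ACTION_MINUTE)


# (pipelines, total executions, actions per pipeline, avg minutes per action)
scenarios = {
    "Solo developer": (2, 30, 4, 2),
    "Large org, high frequency": (200, 10_000, 8, 4),
}
for name, (pipelines, executions, actions, minutes) in scenarios.items():
    v1 = v1_monthly_cost(pipelines)
    v2 = v2_monthly_cost(executions, actions, minutes)
    print(f"{name}: V1 ${v1:.2f} vs V2 ${v2:.2f}")

print("Break-even action minutes per pipeline:", break_even_action_minutes())
```

I use Decimal rather than float so the results match the table to the cent.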

One more thing on cost: CodePipeline charges are almost always a rounding error next to your CodeBuild compute and CodeDeploy infrastructure costs. Optimize those first.

Common Failure Modes

Pipelines break. Every one of these failure modes has bitten me in production. The mitigations come from actually fixing these problems at 2 AM, not from reading docs.

| Failure Mode | Symptom | Root Cause | Mitigation |
|---|---|---|---|
| Source webhook not firing | Pipeline does not trigger on commit | CodeConnections connection deauthorized or EventBridge rule deleted | Monitor connection health with CloudWatch; create alarms on EventBridge rule invocation count dropping to zero |
| Artifact too large | Action fails with S3 upload error | Build output exceeds practical S3 single-object upload limits or action timeout | Reduce artifact size by excluding build intermediates; narrow the files patterns (or add exclude-paths) in the buildspec's artifacts section |
| Cross-account assume role denied | Deploy action fails with AccessDenied | Trust policy does not trust the pipeline role, or the pipeline role lacks sts:AssumeRole permission | Verify trust policy trusts the exact pipeline role ARN; verify pipeline role has AssumeRole permission for the specific cross-account role ARN |
| Action timeout | Action shows "Timed out" status | Action exceeds its configured or default timeout (default varies by action type, typically 60 minutes) | Increase action timeout in pipeline configuration; investigate why the underlying operation is slow |
| Stage transition disabled and forgotten | Pipeline appears stuck | An engineer disabled a transition for debugging and never re-enabled it | Implement CloudWatch alarms for pipelines in "Stopped" state beyond a threshold; review transitions in pipeline dashboard regularly |
| Manual approval expired | Approval action fails | No one approved within the timeout period (default 7 days) | Configure SNS notifications for approval requests; set appropriate timeout windows; establish on-call approval processes |
| S3 artifact bucket policy mismatch | Action fails with "Access Denied" on S3 | Bucket policy does not grant access to the correct role (often after pipeline role ARN changes) | Use CloudFormation to manage bucket policies alongside pipeline definitions; test cross-account access after any IAM changes |
| KMS key access denied | Action fails with KMS decrypt error | Cross-account role lacks KMS decrypt permission, or KMS key policy does not list the role | Include all cross-account roles in the KMS key policy; use a single CMK for all pipeline artifacts in an account |
| Pipeline execution superseded unexpectedly | Deployment does not complete; newer execution runs instead | Pipeline uses SUPERSEDED mode and a new commit arrived during execution | Switch production pipelines to QUEUED mode; use SUPERSEDED only for development pipelines where latest-only semantics are acceptable |
| Insufficient CodeBuild concurrent builds | Build action queued for extended periods | Account has reached the CodeBuild concurrent build limit (default varies by compute type) | Request a service quota increase for CodeBuild concurrent builds; stagger pipeline executions if possible |

Monitoring is the single best investment across all these failure modes. CloudWatch alarms on pipeline execution failures, stage durations that exceed thresholds, pipeline state changes. CodePipeline publishes detailed events to EventBridge. Wire those into your alerting for every production pipeline. Teams that do this catch problems in minutes. Teams that don't catch them when a customer reports an outage.
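As a starting point for that wiring, here is a sketch of an EventBridge event pattern that matches failed executions for a set of pipelines. The pipeline names are placeholders; the rule target (SNS topic, chat webhook, pager) is left out because it depends on your alerting stack.

```python
import json


def failed_execution_pattern(pipeline_names):
    """EventBridge event pattern matching FAILED pipeline executions."""
    return {
        "source": ["aws.codepipeline"],
        "detail-type": ["CodePipeline Pipeline Execution State Change"],
        "detail": {
            "state": ["FAILED"],
            "pipeline": sorted(pipeline_names),
        },
    }


# Hypothetical production pipelines to watch.
pattern = failed_execution_pattern(["billing-prod", "api-prod"])
print(json.dumps(pattern, indent=2))
```

The printed JSON is what goes into the rule's event pattern. Starting with pipeline-level failures keeps the noise down; stage-level and action-level events can be added once the basic alert has proven useful.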

Integration with CodeBuild and CodeDeploy

CodePipeline as orchestrator. CodeBuild for builds and tests. CodeDeploy for deployments. AWS designed these three services as a unit, and they work best together. Understanding the integration points matters; the boundaries between them are where most configuration mistakes happen.

CodeBuild actions consume source artifacts and produce build artifacts. Your buildspec.yml defines what CodeBuild does; CodePipeline controls when it runs and what artifacts it receives. At action start, CodePipeline downloads the input artifact from S3 and hands it to CodeBuild. When CodeBuild finishes, the output artifact (defined in the buildspec's artifacts section) gets uploaded back to S3.

CodeDeploy actions consume those build artifacts and run deployments. The artifact must contain an appspec.yml (or appspec.yaml) telling CodeDeploy how to deploy. For ECS deployments, it also includes the task definition and container image URI. CodePipeline moves the artifact from CodeBuild to CodeDeploy; CodeDeploy reads the appspec from it.

Here's what you need to internalize: CodeBuild and CodeDeploy never talk to each other directly. All data flows through the artifact store in S3. Your buildspec must produce an output artifact containing everything CodeDeploy needs: the appspec, the deployment scripts, the application bundle. Something missing from that artifact? CodeDeploy fails. I've debugged this exact issue more times than I can count, usually because someone added a new deployment hook and forgot to include the script in the buildspec's artifacts section.
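A cheap guard against the missing-script problem is to inspect the artifact before it ever reaches CodeDeploy. The helper below is a hypothetical sketch of mine, not an AWS API: it opens an artifact zip and reports which required paths are absent. The file names are illustrative.

```python
import io
import zipfile


def missing_from_artifact(artifact_zip, required_paths):
    """Return the required paths that are absent from the artifact zip.

    artifact_zip may be a file path or a binary file-like object.
    """
    with zipfile.ZipFile(artifact_zip) as archive:
        present = set(archive.namelist())
    return sorted(path for path in required_paths if path not in present)


def build_example_artifact():
    """Build an in-memory artifact that lacks one hook script."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("appspec.yml", "version: 0.0\n")
        archive.writestr("scripts/install.sh", "#!/bin/bash\n")
    buffer.seek(0)
    return buffer


required = ["appspec.yml", "scripts/install.sh", "scripts/validate.sh"]
print(missing_from_artifact(build_example_artifact(), required))
# prints ['scripts/validate.sh']
```

One place to run a check like this is the last step of the build, against the packaged output, so a forgotten hook script fails the Build stage instead of the deployment.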

For a deeper examination of these services, see AWS CodeBuild: An Architecture Deep-Dive and AWS CodeDeploy: An Architecture Deep-Dive.

Key Architectural Recommendations

These recommendations come from operating CodePipeline in production across organizations ranging from startups to large enterprises. They reflect what I've learned works and what I've seen go wrong.

- Always use V2 pipelines. V1 is legacy. V2 gives you execution modes, trigger filters, pipeline variables, and manual rollback. The cost model is favorable for most workloads, and the operational benefits are substantial.

- Use QUEUED execution mode for production pipelines. SUPERSEDED mode can cancel in-progress deployments and leave infrastructure half-updated. QUEUED ensures every execution completes in order. That's the correct semantic for production.

- Use PARALLEL execution mode for development and preview environments. Each execution targets an independent ephemeral environment (per-branch or per-PR). PARALLEL gives you the fastest feedback loop.

- Implement cross-account pipelines for environment isolation. Run the pipeline in a dedicated CI/CD account, assuming roles in target accounts for deployment. This creates real security boundaries. A compromised dev environment stays contained.

- Use Lambda invoke actions for conditional logic. Skip a stage based on changed files. Send rich Slack notifications. Validate a database migration before deployment. Lambda gives you arbitrary logic inside the pipeline flow without the overhead of custom action types.

- Gate production deployments with manual approval. Automated staging deployments work well. Automated production deployments require a level of testing and monitoring maturity that most organizations haven't reached. A manual approval provides a human checkpoint to verify staging behavior.

- One pipeline per service in microservices architectures. Monolithic pipelines that build and deploy multiple services create coupling, slow feedback, and blast radius problems. Give each service its own pipeline, triggered by file path filters in a monorepo or repository-level triggers in a multi-repo setup.

- Use pipeline variables for environment-specific configuration. V2 variables let you parameterize your pipeline without duplicating definitions for each environment. Pass environment names, account IDs, and region values as variables. Don't hardcode them.
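The pipeline variables recommendation can be sketched as two fragments of a V2 pipeline declaration: the variable definitions, and a CodeBuild action configuration that references them with the `#{variables.NAME}` syntax. The variable names, default values, and project name are hypothetical, and the field names follow my reading of the pipeline declaration structure, so verify them against the current API reference.

```python
import json

# Pipeline-level variable declarations (hypothetical names and defaults).
pipeline_variables = [
    {"name": "TARGET_ENV", "defaultValue": "staging", "description": "Environment to deploy to"},
    {"name": "TARGET_REGION", "defaultValue": "us-east-1", "description": "Region of the target environment"},
]


def build_action_configuration(project_name, variable_names):
    """CodeBuild action configuration that forwards pipeline variables.

    CodeBuild actions take EnvironmentVariables as a JSON-encoded string;
    each value uses the #{variables.NAME} reference, which CodePipeline
    resolves at execution time.
    """
    environment = [
        {"name": name, "value": f"#{{variables.{name}}}", "type": "PLAINTEXT"}
        for name in variable_names
    ]
    return {
        "ProjectName": project_name,
        "EnvironmentVariables": json.dumps(environment),
    }


configuration = build_action_configuration(
    "deploy-service",  # hypothetical CodeBuild project
    [variable["name"] for variable in pipeline_variables],
)
print(json.dumps(configuration, indent=2))
```

With this shape, one pipeline definition serves every environment: the defaults cover the common case, and an individual execution can be started with different values.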